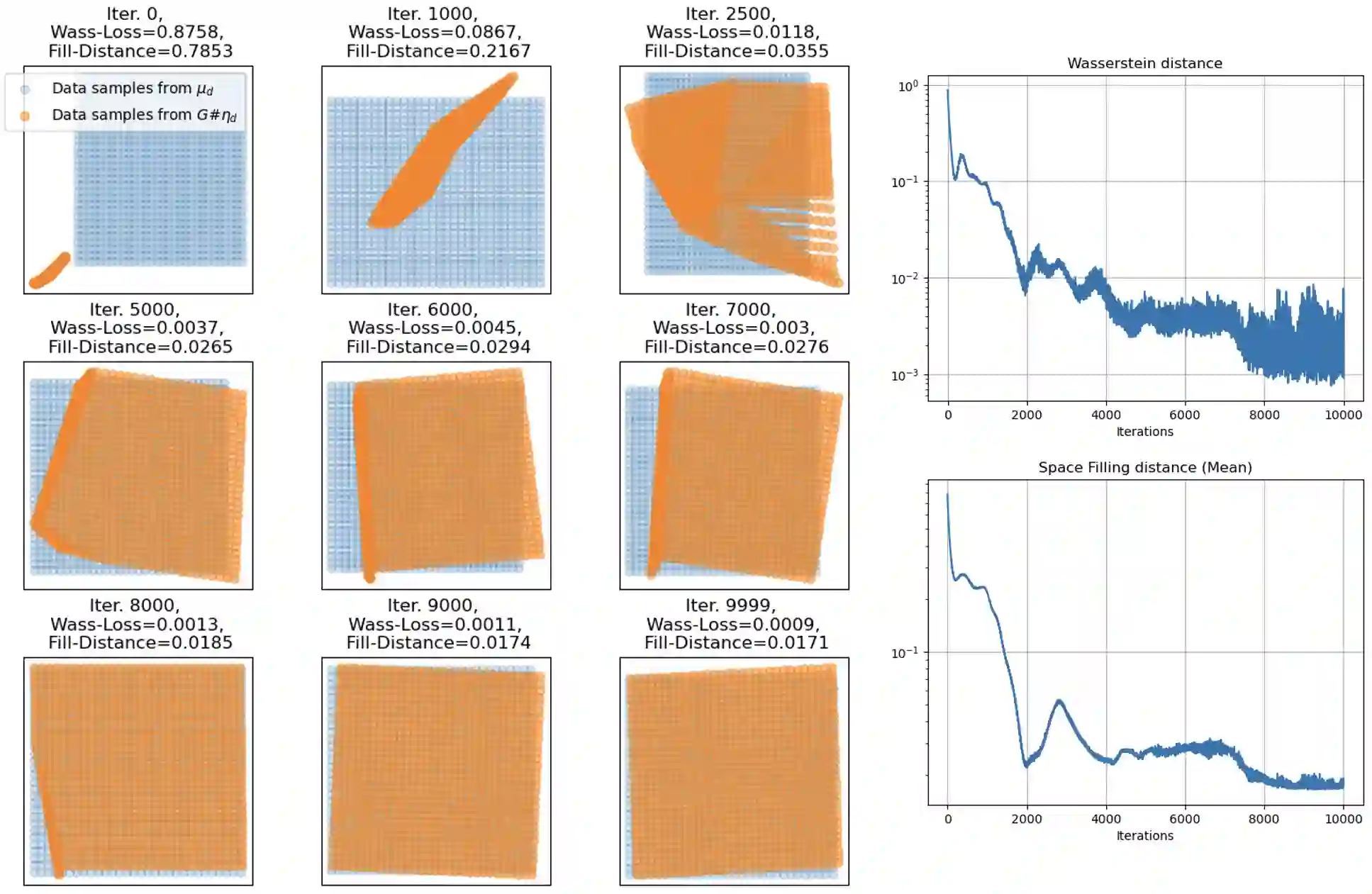

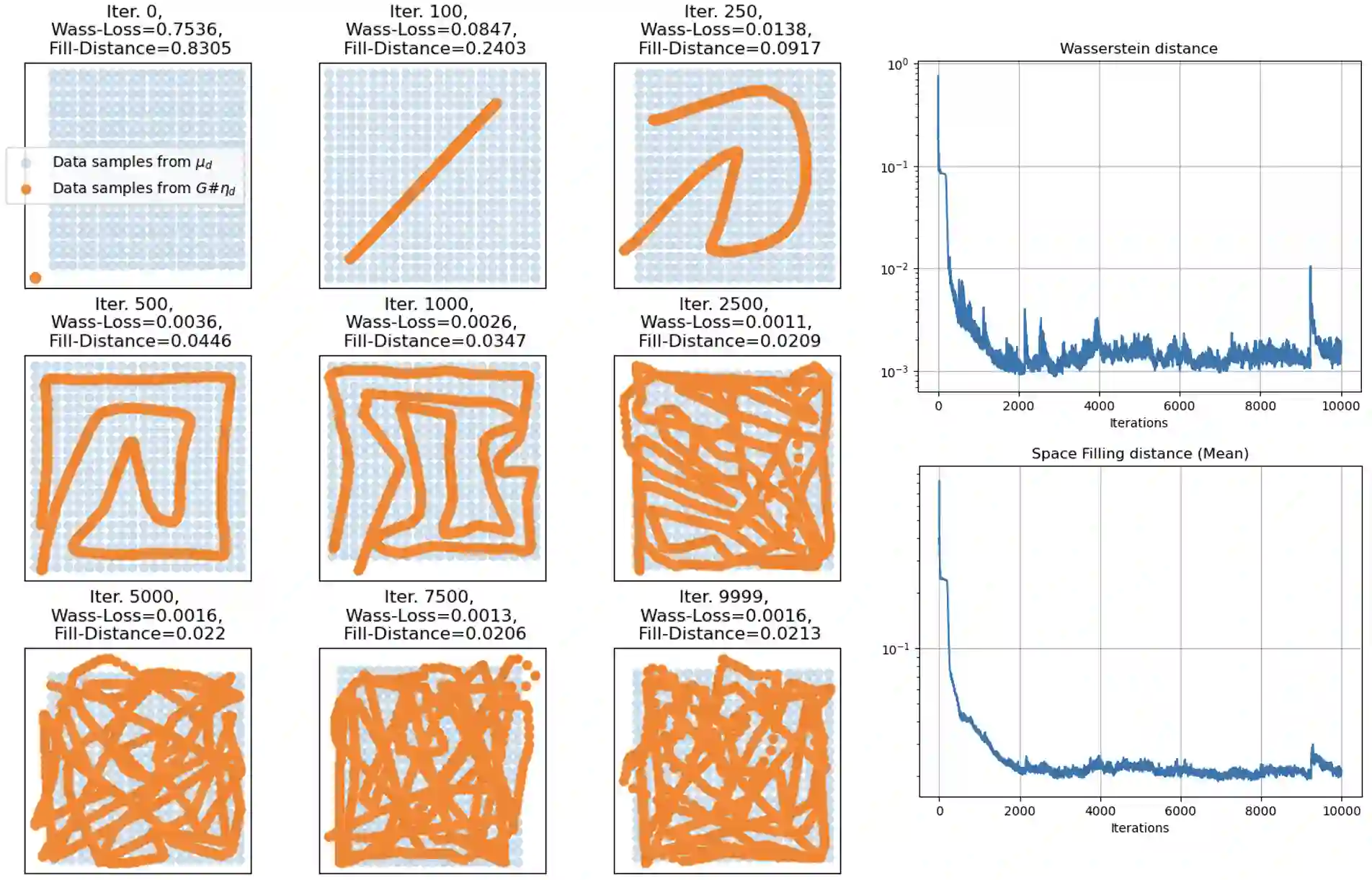

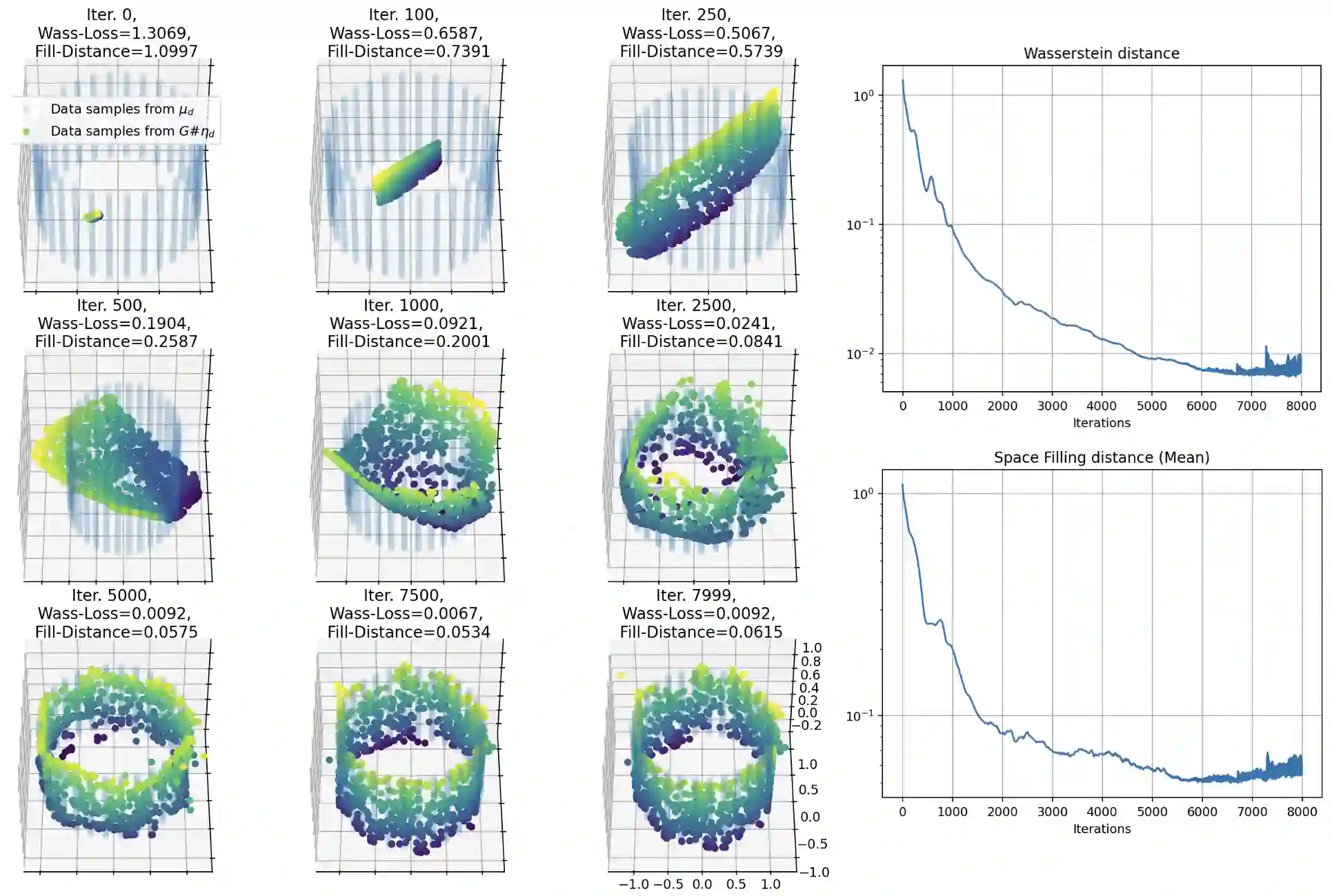

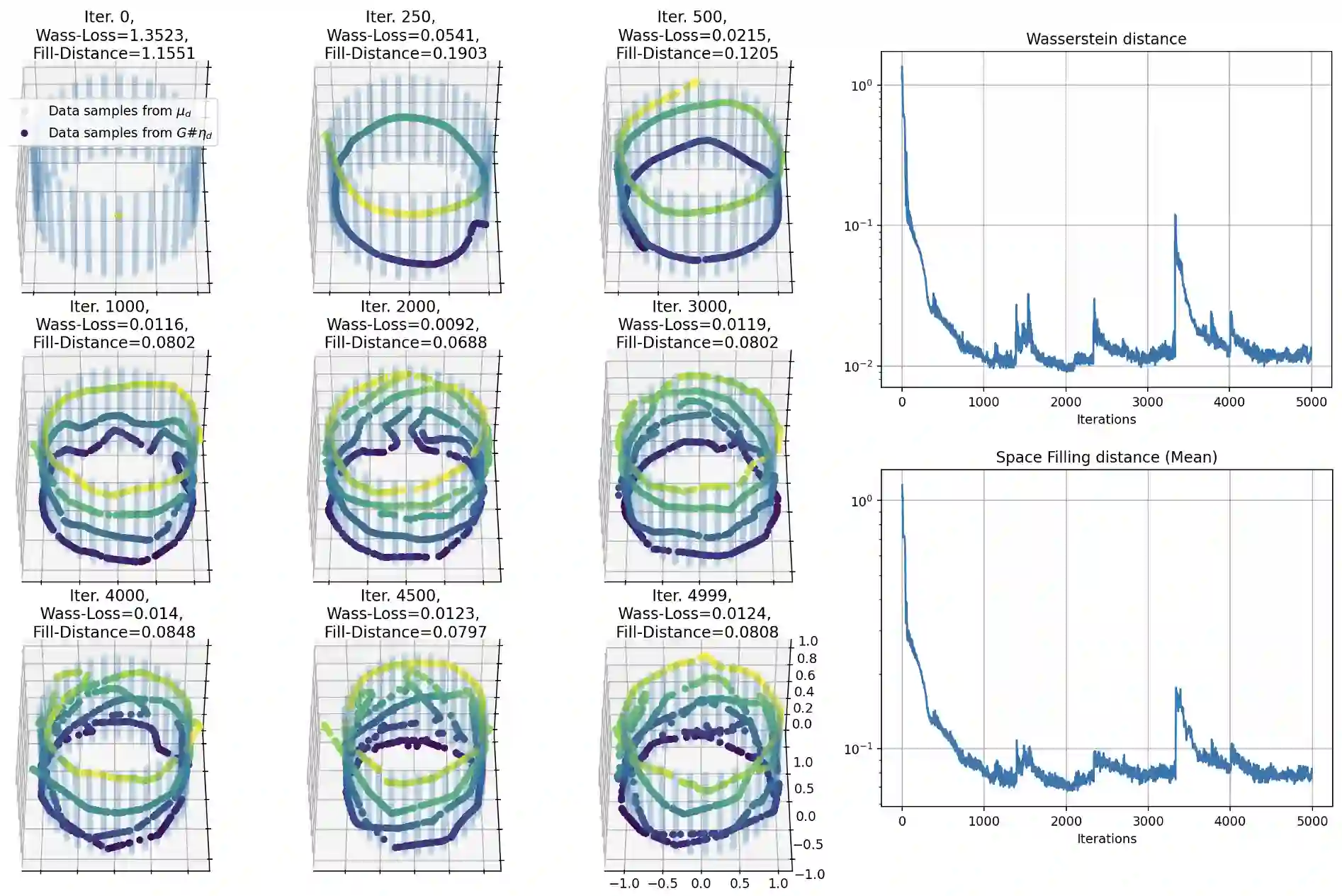

Generative networks have shown remarkable success in learning complex data distributions, particularly in generating high-dimensional data from lower-dimensional inputs. While this capability is well documented empirically, its theoretical underpinning remains unclear. One common theoretical explanation appeals to the widely accepted manifold hypothesis, which suggests that many real-world datasets, such as images and signals, possess intrinsic low-dimensional geometric structure. Under this hypothesis, it is widely believed that approximating a distribution on a $d$-dimensional Riemannian manifold requires a latent dimension of at least $d$ or $d+1$. In this work, we show that this requirement on the latent dimension is not necessary: taking inspiration from the concept of space-filling curves, we demonstrate that generative networks can approximate distributions on $d$-dimensional Riemannian manifolds from inputs of arbitrary dimension, even lower than $d$. This approach, in turn, leads to a super-exponential complexity bound for the deep neural networks through expanded neurons. Our findings thus challenge the conventional belief about the relationship between input dimensionality and the ability of generative networks to model data distributions. This insight not only corroborates the practical effectiveness of generative networks in handling complex data structures, but also underscores a critical trade-off between approximation error, dimensionality, and model complexity.
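To make the space-filling-curve intuition concrete, the following minimal sketch pushes a one-dimensional latent variable through a discrete Hilbert curve so that it covers a two-dimensional square. It is only an illustration of the general idea and is not the construction used in this work; the function names `hilbert_d2xy` and `latent_to_square`, and the choice of the Hilbert curve itself, are assumptions made for the example.

```python
def hilbert_d2xy(n, d):
    """Map index d in [0, n*n) to a cell (x, y) on an n-by-n grid
    along a Hilbert curve. n must be a power of two."""
    x = y = 0
    t = d
    s = 1
    while s < n:
        rx = 1 & (t // 2)
        ry = 1 & (t ^ rx)
        # Rotate/flip the quadrant so consecutive pieces join up.
        if ry == 0:
            if rx == 1:
                x = s - 1 - x
                y = s - 1 - y
            x, y = y, x
        x += s * rx
        y += s * ry
        t //= 4
        s *= 2
    return x, y


def latent_to_square(z, n):
    """Send a 1-D latent z in [0, 1) to the centre of a grid cell in
    the unit square (hypothetical helper for illustration)."""
    d = min(int(z * n * n), n * n - 1)
    x, y = hilbert_d2xy(n, d)
    return (x + 0.5) / n, (y + 0.5) / n


if __name__ == "__main__":
    import random

    n = 8  # grid resolution; finer grids track the square more closely
    samples = [latent_to_square(random.random(), n) for _ in range(5)]
    print(samples)
```

If `z` is uniform on $[0, 1)$, each of the $n^2$ cells receives probability $1/n^2$, so the pushed-forward distribution approaches the uniform distribution on the square as $n$ grows, at the cost of an increasingly intricate map. This mirrors, in a toy setting, the trade-off between approximation error, dimensionality, and complexity described above.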
