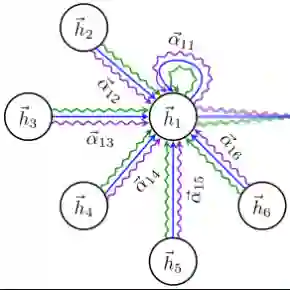

Federated training methods have gained popularity for graph learning, with applications including friendship graphs on social media sites and customer-merchant interaction graphs in large online marketplaces. However, privacy regulations often require locally generated data to be stored on local clients. The graph is then naturally partitioned across clients, with no client permitted access to information stored on another. Cross-client edges arise naturally in such cases and present an interesting challenge to federated training methods, because training a graph model at one client requires feature information from nodes at the other end of cross-client edges. Retaining such edges often incurs significant communication overhead, while dropping them altogether reduces model performance. For simpler models such as Graph Convolutional Networks (GCNs), this can be addressed by communicating a limited amount of feature information across clients before training, but Graph Attention Networks (GATs) require additional information that cannot be pre-communicated, as it changes from one training round to the next. We introduce the Federated Graph Attention Network (FedGAT) algorithm for semi-supervised node classification, which approximates the behavior of GATs with provable bounds on the approximation error. FedGAT requires only one pre-training communication round, significantly reducing the communication overhead of federated GAT training. We then analyze the approximation error and examine the communication overhead and computational complexity of the algorithm. Experiments show that FedGAT achieves nearly the same accuracy as a GAT model trained in a centralized setting, and that its performance is robust to the number of clients as well as to the data distribution.
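The contrast between GCNs and GATs drawn above can be made concrete with a small NumPy sketch. It is not the FedGAT algorithm, only an illustration under stated assumptions: the function names `gcn_aggregate` and `gat_attention`, the toy 3-node graph, and the random parameters are all hypothetical, and the attention follows the standard single-head GAT formulation. The GCN aggregation depends only on the graph and the features, so it could in principle be computed once before training, whereas the GAT attention coefficients depend on the learned parameters and therefore differ between rounds.

```python
import numpy as np

def gcn_aggregate(adj, x):
    """Parameter-free GCN aggregation: D^-1/2 (A + I) D^-1/2 X."""
    a_hat = adj + np.eye(adj.shape[0])           # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return a_norm @ x

def gat_attention(adj, x, w, a):
    """Single-head GAT attention (standard formulation, assumed here).

    alpha[i, j] = softmax_j( LeakyReLU( a^T [W h_i || W h_j] ) ) over
    the neighbors of i (including i itself).
    """
    h = x @ w
    f = h.shape[1]
    # a^T [h_i || h_j] splits into a source term plus a target term.
    e = (h @ a[:f])[:, None] + (h @ a[f:])[None, :]
    e = np.where(e > 0, e, 0.2 * e)              # LeakyReLU, slope 0.2
    mask = (adj + np.eye(adj.shape[0])) > 0
    e = np.where(mask, e, -np.inf)               # non-neighbors get weight 0
    e = e - e.max(axis=1, keepdims=True)         # numerically stable softmax
    exp = np.exp(e)
    return exp / exp.sum(axis=1, keepdims=True)

# Hypothetical toy data: a 3-node path graph with 4 features per node.
rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
x = rng.normal(size=(3, 4))

# Depends only on (adj, x): identical in every training round.
agg = gcn_aggregate(adj, x)

# Two different parameter sets stand in for two training rounds.
w1, a1 = rng.normal(size=(4, 2)), rng.normal(size=4)
w2, a2 = rng.normal(size=(4, 2)), rng.normal(size=4)
alpha_round1 = gat_attention(adj, x, w1, a1)
alpha_round2 = gat_attention(adj, x, w2, a2)
```

Because `alpha_round1` and `alpha_round2` are computed from the same graph and features but different parameters, they generally differ, which is why a naive federated GAT would need neighbor information from other clients in every round.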
