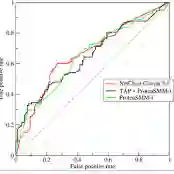

In supervised machine learning (SML) research, large training datasets are essential for valid results. However, obtaining primary data in learning analytics (LA) is challenging. Data augmentation can address this by expanding and diversifying data, though its use in LA remains underexplored. This paper systematically compares data augmentation techniques and their impact on prediction performance in a typical LA task: predicting academic outcomes. We demonstrate augmentation on four SML models, which we successfully replicated from a previous LAK study, as measured by AUC values. Among 21 augmentation techniques, SMOTE-ENN sampling performed best, improving the average AUC by 0.01 and approximately halving the training time compared to the baseline models. In addition, we compared 99 combinations that chain the 21 techniques and found minor, although statistically significant, improvements across models when adding noise to SMOTE-ENN (+0.014). Notably, some augmentation techniques significantly lowered predictive performance or increased the fluctuation in performance attributable to random chance. This paper's contribution is twofold. First, our empirical findings show that sampling techniques provide the most statistically reliable performance improvements for LA applications of SML and are computationally more efficient than deep generation methods with complex hyperparameter settings. Second, the LA community may benefit from our independent replication, which validates a recent study.
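To make the best-performing chain concrete, the following is a minimal, standard-library-only sketch of SMOTE oversampling, edited-nearest-neighbours (ENN) cleaning, and Gaussian noise applied in sequence. It is an illustration under stated assumptions, not the paper's pipeline: the function names (`smote`, `enn`, `add_noise`), the toy two-feature data, the neighbour count `k=3`, the noise scale `sigma=0.05`, and the majority-vote ENN rule are all hypothetical choices made here, and the sketch handles only the binary case with at least two minority samples.

```python
import random
from collections import Counter


def nearest(i, points, k):
    """Indices of the k points closest to points[i] (squared Euclidean), excluding i."""
    def dist(j):
        return sum((a - b) ** 2 for a, b in zip(points[i], points[j]))
    return sorted((j for j in range(len(points)) if j != i), key=dist)[:k]


def smote(X, y, minority, k=3, rng=random):
    """Binary SMOTE sketch: interpolate new minority rows until classes are balanced."""
    pts = [x for x, label in zip(X, y) if label == minority]
    n_new = (len(y) - len(pts)) - len(pts)  # majority count minus minority count
    X_out, y_out = list(X), list(y)
    for _ in range(max(n_new, 0)):
        i = rng.randrange(len(pts))
        j = rng.choice(nearest(i, pts, k))  # a random minority neighbour of point i
        t = rng.random()                    # position along the segment i -> j
        X_out.append([a + t * (b - a) for a, b in zip(pts[i], pts[j])])
        y_out.append(minority)
    return X_out, y_out


def enn(X, y, k=3):
    """ENN sketch: drop rows whose label disagrees with the majority of their k neighbours."""
    keep = []
    for i in range(len(X)):
        votes = Counter(y[j] for j in nearest(i, X, k))
        if votes.most_common(1)[0][0] == y[i]:
            keep.append(i)
    return [X[i] for i in keep], [y[i] for i in keep]


def add_noise(X, sigma=0.05, rng=random):
    """Add zero-mean Gaussian noise to every feature value (sigma is an assumed scale)."""
    return [[v + rng.gauss(0.0, sigma) for v in row] for row in X]


# Hypothetical imbalanced toy data: 40 majority rows (label 0), 10 minority rows (label 1).
rng = random.Random(0)
X = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(40)]
X += [[rng.gauss(2.5, 1), rng.gauss(2.5, 1)] for _ in range(10)]
y = [0] * 40 + [1] * 10

X_s, y_s = smote(X, y, minority=1, rng=rng)  # oversample the minority class
X_c, y_c = enn(X_s, y_s)                     # clean ambiguous rows from both classes
X_aug = add_noise(X_c, rng=rng)              # chain noise onto the SMOTE-ENN output

print(Counter(y), Counter(y_s), Counter(y_c))
```

In practice a maintained implementation would usually be preferable to hand-written code; the sketch only shows how the three steps compose and why the resampled training set can end up a different size from the original.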
