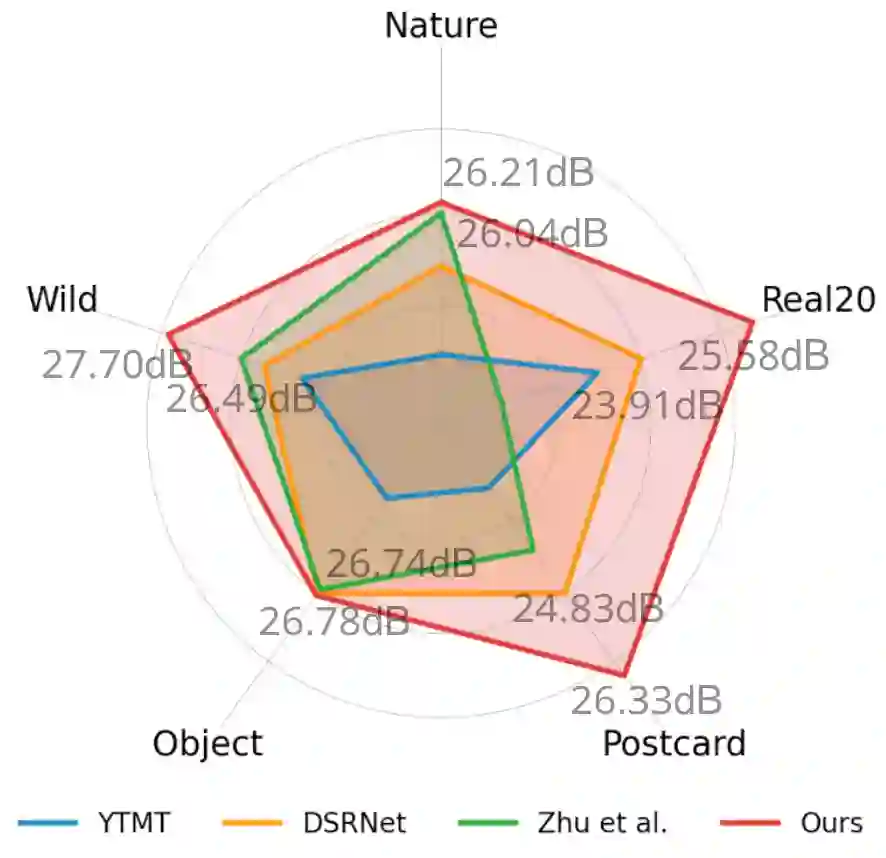

Recent deep-learning-based approaches to single-image reflection removal have shown promising advances, primarily for two reasons: 1) the use of recognition-pretrained features as inputs, and 2) the design of dual-stream interaction networks. However, according to the Information Bottleneck principle, high-level semantic clues tend to be compressed or discarded during layer-by-layer propagation. Additionally, interactions in dual-stream networks follow a fixed pattern across different layers, which limits overall performance. To address these limitations, we propose a novel architecture called the Reversible Decoupling Network (RDNet), which employs a reversible encoder to preserve valuable information while flexibly decoupling transmission- and reflection-relevant features during the forward pass. Furthermore, we design a transmission-rate-aware prompt generator that dynamically calibrates features, further boosting performance. Extensive experiments demonstrate the superiority of RDNet over existing state-of-the-art methods on five widely adopted benchmark datasets. RDNet also achieved the best performance in the NTIRE 2025 Single Image Reflection Removal in the Wild Challenge in both the fidelity and perceptual comparisons. Our code is available at https://github.com/lime-j/RDNet

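The claim that a reversible encoder keeps information from being discarded can be illustrated with a generic additive-coupling block, the construction used in RevNet-style reversible networks. This is only a sketch under stated assumptions: the abstract does not describe RDNet's actual layers, so the functions `f` and `g` and the helpers `forward` and `inverse` below are hypothetical stand-ins, and the two halves `x1`, `x2` merely play the role of two feature streams. The point it shows is that the block's input can be reconstructed from its output, so nothing is lost in the forward pass.

```python
from typing import List, Tuple

Vector = List[float]


def add(a: Vector, b: Vector) -> Vector:
    """Element-wise sum of two equal-length vectors."""
    return [x + y for x, y in zip(a, b)]


def sub(a: Vector, b: Vector) -> Vector:
    """Element-wise difference of two equal-length vectors."""
    return [x - y for x, y in zip(a, b)]


def f(v: Vector) -> Vector:
    """Hypothetical sub-network; it does not need to be invertible itself."""
    return [0.5 * x * x + 1.0 for x in v]


def g(v: Vector) -> Vector:
    """Second hypothetical sub-network, also arbitrary."""
    return [abs(x) - 0.25 * x for x in v]


def forward(x1: Vector, x2: Vector) -> Tuple[Vector, Vector]:
    """Additive coupling: y1 = x1 + f(x2), y2 = x2 + g(y1)."""
    y1 = add(x1, f(x2))
    y2 = add(x2, g(y1))
    return y1, y2


def inverse(y1: Vector, y2: Vector) -> Tuple[Vector, Vector]:
    """Undo the coupling in reverse order to recover the inputs."""
    x2 = sub(y2, g(y1))
    x1 = sub(y1, f(x2))
    return x1, x2


if __name__ == "__main__":
    x1 = [1.0, -2.0, 0.5]
    x2 = [0.25, 3.0, -1.5]
    y1, y2 = forward(x1, x2)
    r1, r2 = inverse(y1, y2)
    # The reconstruction matches the input up to floating-point rounding.
    print(max(abs(a - b) for a, b in zip(r1 + r2, x1 + x2)))
```

Because `inverse` only subtracts what `forward` added, `f` and `g` can be arbitrary non-invertible functions while the block as a whole stays invertible. A plain feed-forward layer offers no such guarantee, which is the bottleneck concern the abstract raises.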