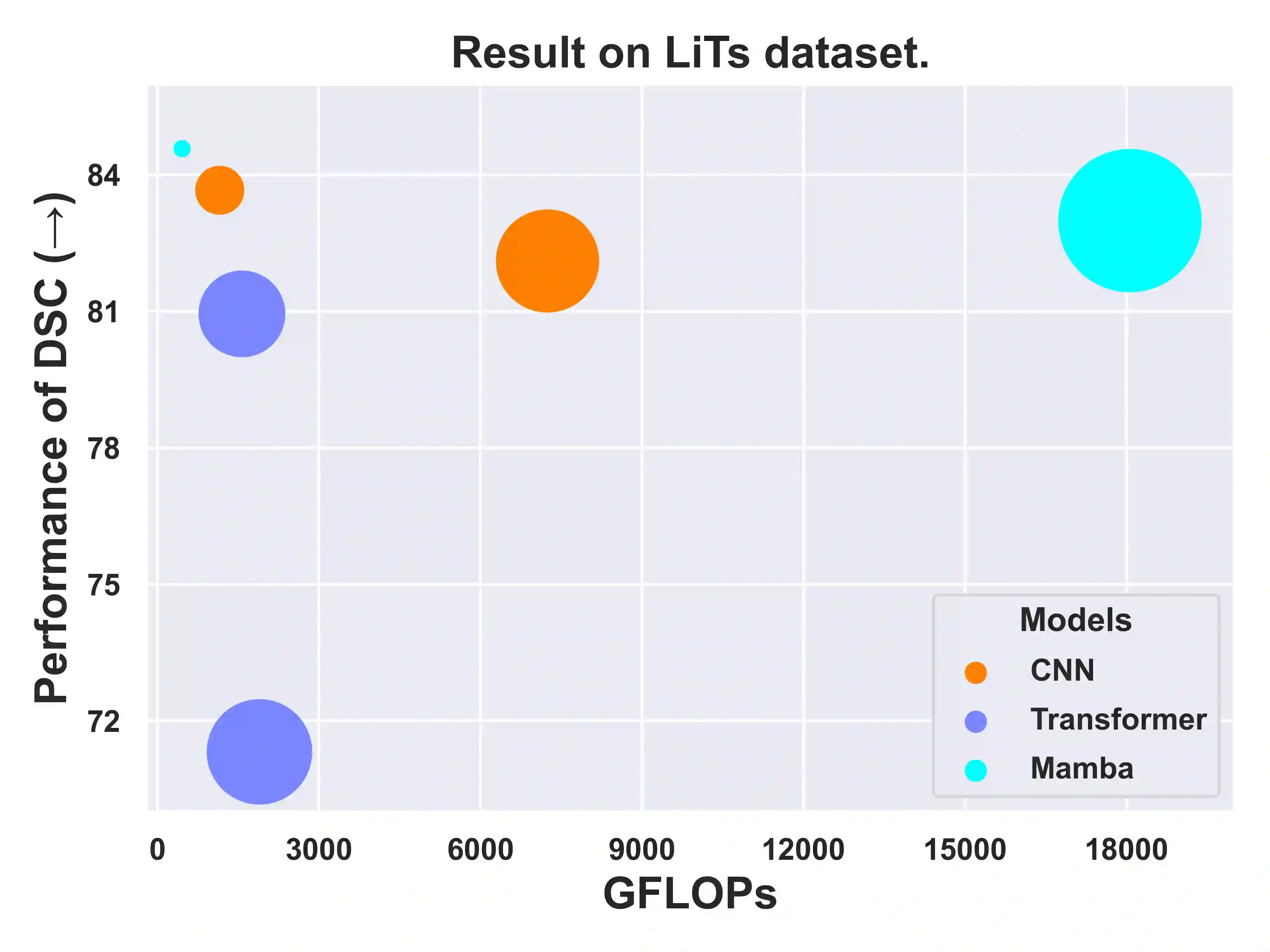

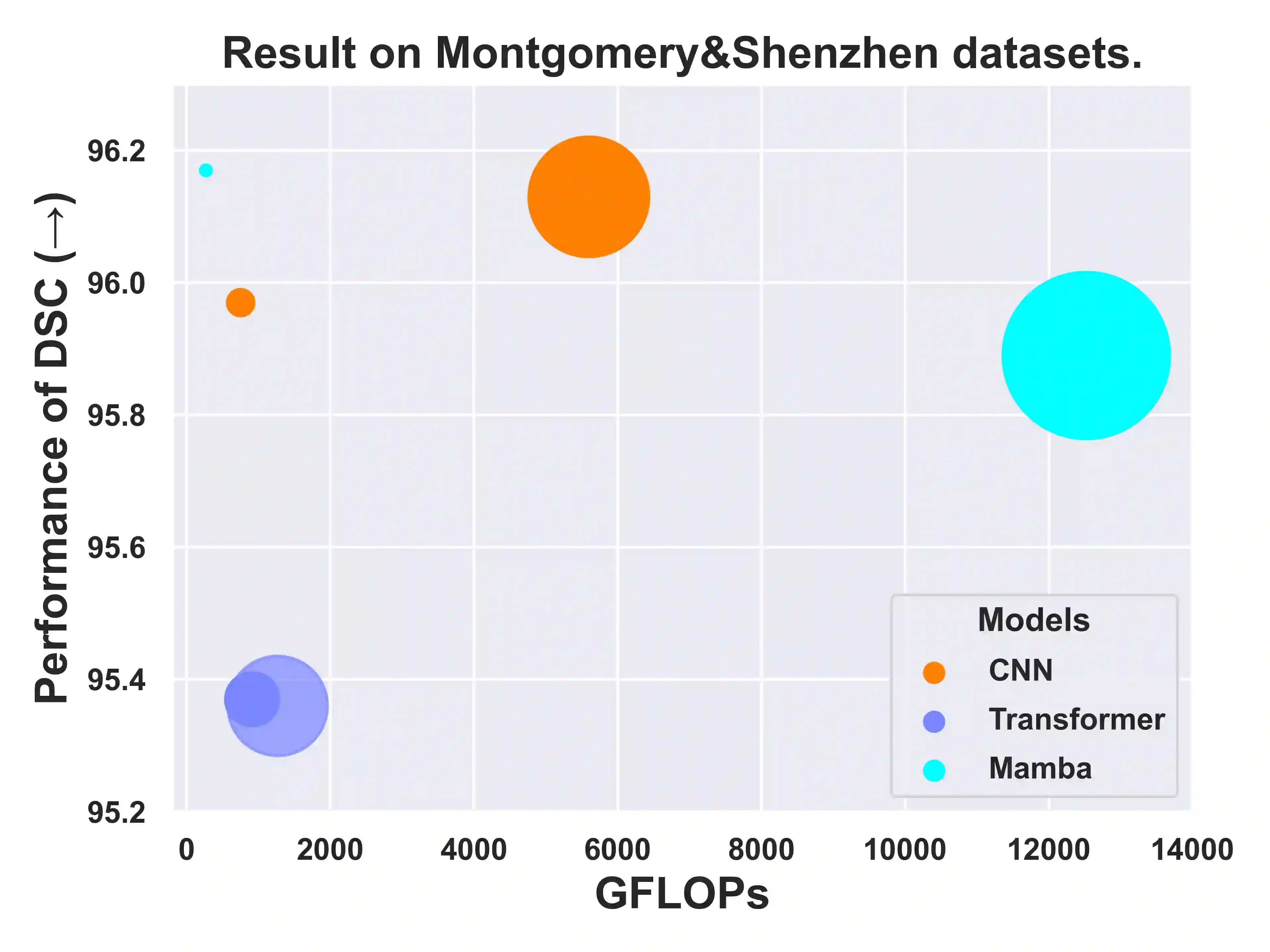

UNet and its variants have been widely used in medical image segmentation. However, these models, especially those based on Transformer architectures, have large numbers of parameters and heavy computational loads, which makes them unsuitable for mobile health applications. Recently, State Space Models (SSMs), exemplified by Mamba, have emerged as competitive alternatives to CNN and Transformer architectures. Building on this, we employ Mamba as a lightweight substitute for CNNs and Transformers within UNet, aiming to tackle the challenges posed by limited computational resources in real medical settings. To this end, we introduce the Lightweight Mamba UNet (LightM-UNet), which integrates Mamba and UNet in a lightweight framework. Specifically, LightM-UNet uses the Residual Vision Mamba Layer in a pure Mamba fashion to extract deep semantic features and model long-range spatial dependencies with linear computational complexity. Extensive experiments on two real-world datasets (2D and 3D) demonstrate that LightM-UNet surpasses existing state-of-the-art methods. Notably, compared with the well-known nnU-Net, LightM-UNet achieves superior segmentation performance while reducing the parameter count and computational cost by 116× and 21×, respectively. This highlights the potential of Mamba for building lightweight models. Our code is publicly available at https://github.com/MrBlankness/LightM-UNet.
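The linear-complexity claim can be illustrated with a minimal sketch of a discretized, diagonal SSM scan over a flattened feature map. This is not the paper's Residual Vision Mamba Layer: the function names (`flatten_hw`, `ssm_scan`, `residual_ssm_block`), the fixed parameters `a`, `b`, `c`, `delta`, and the residual `scale` are hypothetical. In Mamba, `delta`, `b`, and `c` are computed from the input at each step (the "selective" part) and the scan uses a hardware-aware parallel implementation; here they are fixed constants and the scan is a plain Python loop. That is still enough to show that each token is visited once, so the cost grows as O(L·N) in sequence length L and state size N, rather than quadratically in L as with self-attention.

```python
import math
from typing import List


def flatten_hw(feature_map: List[List[float]]) -> List[float]:
    """Flatten an H x W single-channel feature map into a 1D token sequence (row-major)."""
    return [v for row in feature_map for v in row]


def ssm_scan(x: List[float], a: List[float], b: List[float],
             c: List[float], delta: float) -> List[float]:
    """Single-pass scan of a diagonal, discretized state space model.

    For each step t:  h_t = exp(delta * a) * h_{t-1} + delta * b * x_t,   y_t = c . h_t
    One pass over the sequence, so cost is O(L * N) for length L and state size N.
    """
    n = len(a)
    assert len(b) == n and len(c) == n
    # With delta > 0 and a_i < 0, each decay factor is below 1, so the state stays stable.
    decay = [math.exp(delta * a_i) for a_i in a]
    h = [0.0] * n
    y = []
    for x_t in x:
        # Update every state channel from its previous value and the current token.
        h = [decay[i] * h[i] + delta * b[i] * x_t for i in range(n)]
        # Read out the state with c.
        y.append(sum(c[i] * h[i] for i in range(n)))
    return y


def residual_ssm_block(feature_map: List[List[float]], a: List[float], b: List[float],
                       c: List[float], delta: float, scale: float = 1.0) -> List[float]:
    """Hypothetical residual wrapper: scale * x + SSM(x) on the flattened tokens."""
    tokens = flatten_hw(feature_map)
    mixed = ssm_scan(tokens, a, b, c, delta)
    return [scale * t + m for t, m in zip(tokens, mixed)]


if __name__ == "__main__":
    out = residual_ssm_block([[1.0, 0.0], [0.0, 0.0]], a=[-1.0], b=[1.0], c=[1.0], delta=1.0)
    print([round(v, 4) for v in out])  # [2.0, 0.3679, 0.1353, 0.0498]
```

In this toy 2×2 map with a single nonzero pixel, that pixel's contribution is carried through the hidden state to every later token, decaying geometrically by `exp(delta * a)` per step. This is how a scan propagates information across the whole sequence without computing pairwise attention scores.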

翻译:UNet及其变体已被广泛应用于医学图像分割。然而,这些模型(尤其是基于Transformer架构的模型)因其参数量大、计算负载高而面临挑战,难以适用于移动医疗应用。近期,以Mamba为代表的状态空间模型(SSMs)已成为CNN和Transformer架构的有力替代方案。基于此,我们将Mamba作为CNN和Transformer的轻量级替代品引入UNet,旨在解决真实医疗场景中因计算资源有限而带来的挑战。为此,我们提出了轻量级Mamba UNet(LightM-UNet),它在一轻量级框架中融合了Mamba与UNet。具体而言,LightM-UNet以纯Mamba形式利用残差视觉Mamba层来提取深层语义特征并建模长距离空间依赖性,且计算复杂度为线性。在两个真实2D/3D数据集上进行的大量实验表明,LightM-UNet超越了现有最新文献。值得注意的是,与著名的nnU-Net相比,LightM-UNet在实现更优分割性能的同时,将参数和计算成本分别大幅降低了116倍和21倍。这凸显了Mamba在促进模型轻量化方面的潜力。我们的代码实现已公开于https://github.com/MrBlankness/LightM-UNet。