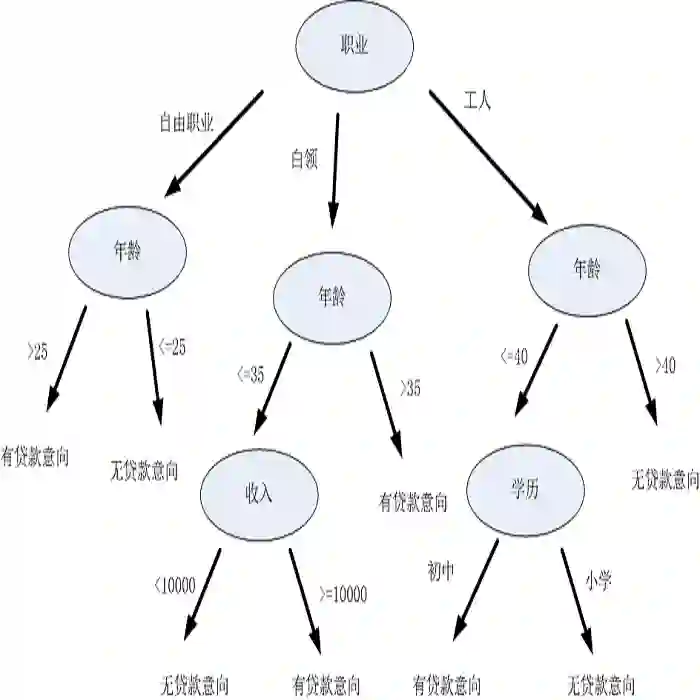

There is a widespread and longstanding belief that machine learning models trained on imbalanced binary response data are biased towards the majority class, leading them to neglect the minority class. Motivated by a recent simulation study that found that decision trees can be biased towards the minority class, this paper aims to reconcile the conflict between that study and other published work. First, we critically evaluate past literature on this problem and find that failing to consider the conditional distribution of the outcome given the predictors has led to incorrect conclusions about the bias in decision trees. We then show that, under specific conditions, decision trees fit to purity (grown until every leaf contains observations from a single class) are biased towards the minority class, debunking the belief that decision trees are always biased towards the majority class. This bias can be reduced by regularizing the tree-fitting process, for example by pruning or by setting a maximum tree depth, and/or by applying post-hoc calibration methods. Our findings have implications for the use of popular tree-based models such as random forests. Although random forests are often composed of decision trees fit to purity, our work adds to recent literature indicating that this may not be the best approach.
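The mitigation options named above (a depth limit, pruning, and post-hoc calibration) can be compared side by side in a small experiment. The following is a minimal sketch using scikit-learn, not the authors' simulation design: the data-generating process in `simulate`, the sample sizes, and the values `max_depth=3` and `ccp_alpha=0.005` are all assumptions chosen for illustration. It compares each model's mean predicted probability of the minority class against the true mean of the conditional probability P(Y=1 | X).

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV
from sklearn.tree import DecisionTreeClassifier


def simulate(n, rng):
    """Draw predictors and an imbalanced binary outcome (hypothetical setup)."""
    X = rng.normal(size=(n, 2))
    # True conditional probability P(Y=1 | X); the negative intercept keeps class 1 rare.
    p = 1.0 / (1.0 + np.exp(-(-2.0 + X[:, 0])))
    y = rng.binomial(1, p)
    return X, y, p


rng = np.random.default_rng(0)
X_train, y_train, _ = simulate(2000, rng)
X_test, _, p_test = simulate(5000, rng)

# Illustrative settings only; none of these values come from the paper.
models = {
    "fit to purity": DecisionTreeClassifier(random_state=0),
    "max_depth=3": DecisionTreeClassifier(max_depth=3, random_state=0),
    "cost-complexity pruned": DecisionTreeClassifier(ccp_alpha=0.005, random_state=0),
    "purity + isotonic calibration": CalibratedClassifierCV(
        DecisionTreeClassifier(random_state=0), method="isotonic", cv=5
    ),
}

results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    # Column 1 holds the predicted probability of the minority class (label 1).
    results[name] = model.predict_proba(X_test)[:, 1]

print(f"true mean P(Y=1 | X): {p_test.mean():.3f}")
for name, proba in results.items():
    print(
        f"{name:30s} mean predicted: {proba.mean():.3f}  "
        f"share predicted minority: {(proba > 0.5).mean():.3f}"
    )
```

Comparing against the true conditional probabilities, rather than only against the observed class frequencies, follows the abstract's point that the conditional distribution of the outcome given the predictors has to be taken into account. The size and direction of any gap will depend on the assumed data-generating process.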
