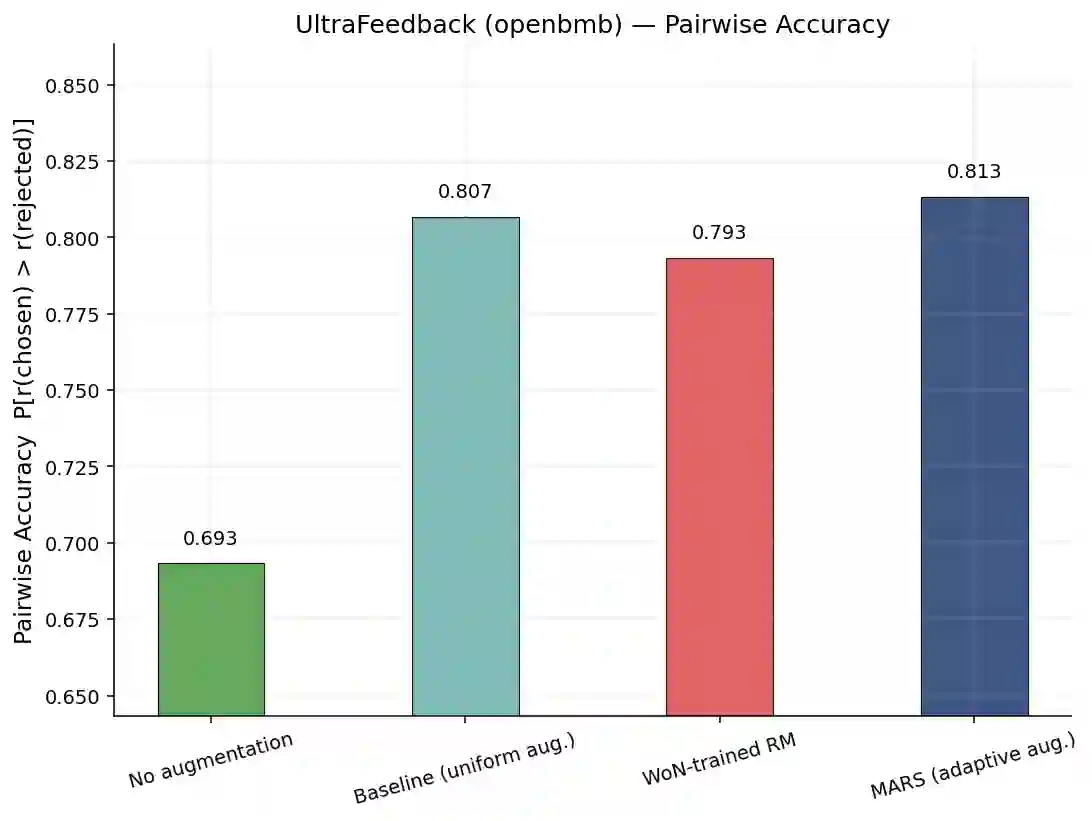

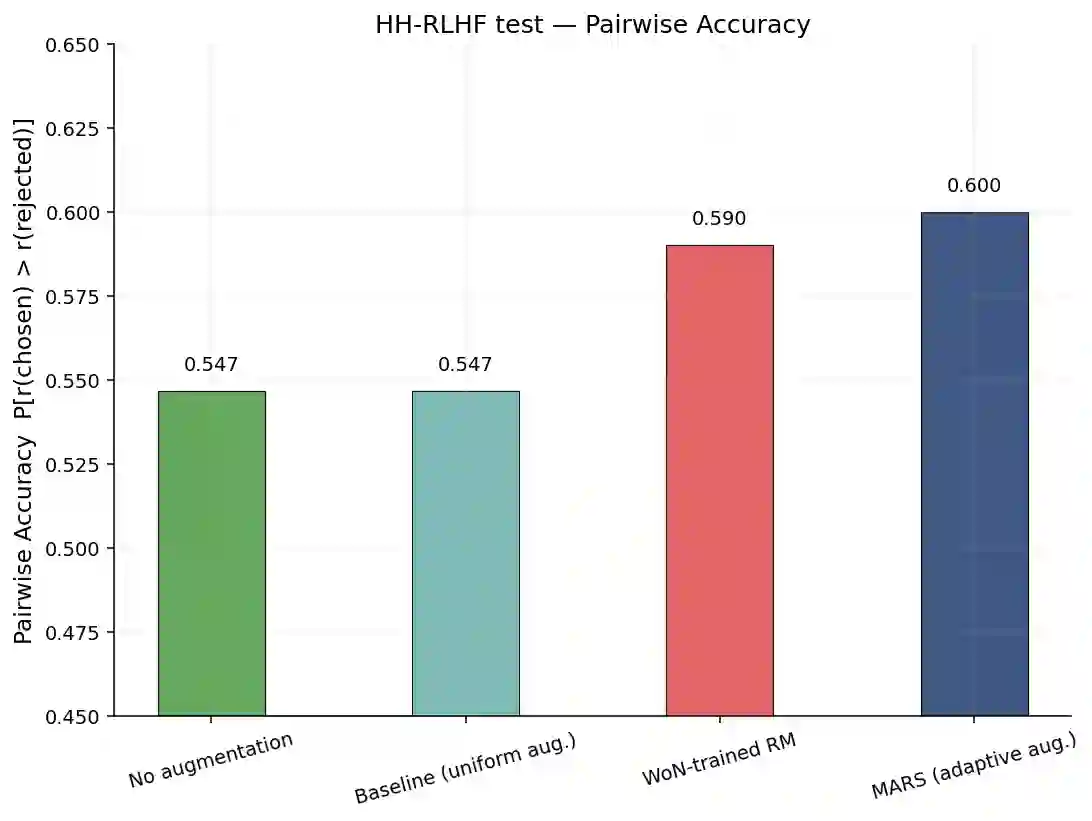

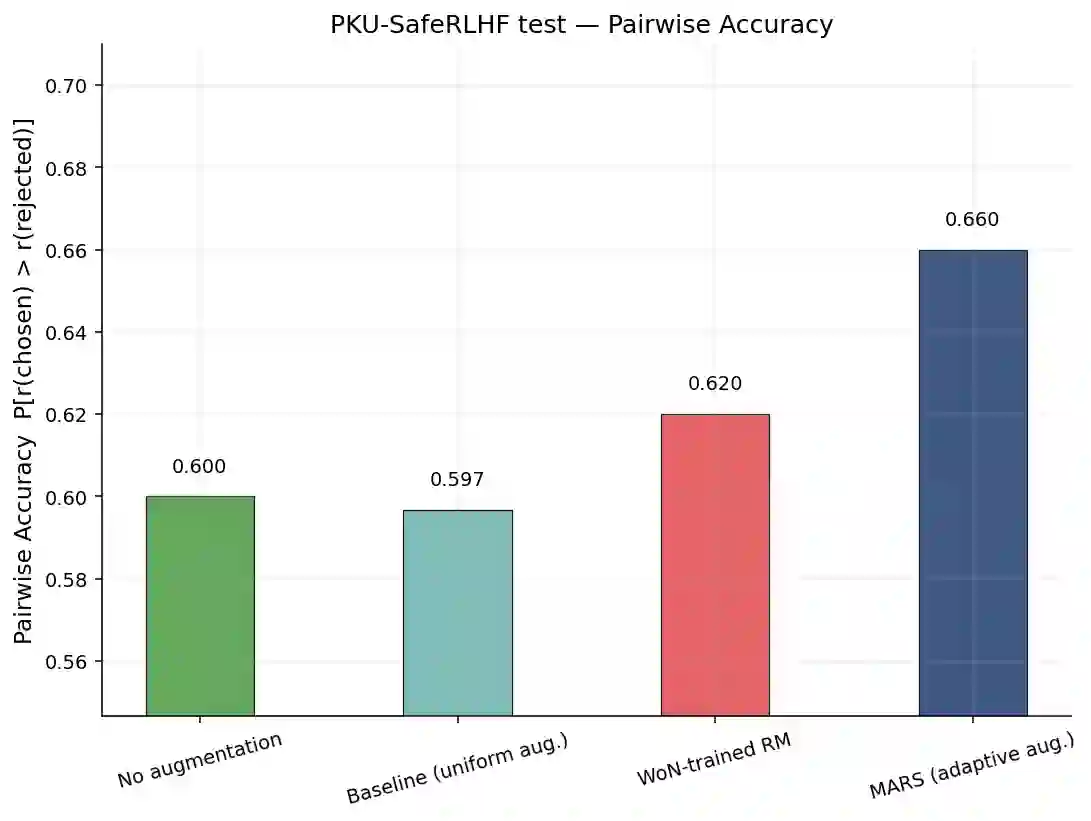

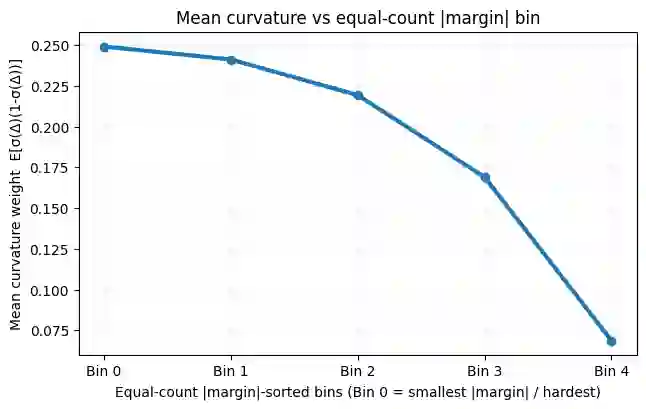

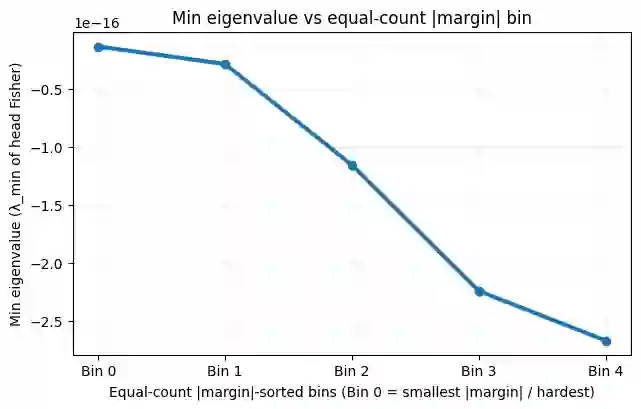

Reward modeling is a core component of modern alignment pipelines, including RLHF and RLAIF, underpinning policy optimization methods such as PPO and TRPO. However, training reliable reward models relies heavily on human-labeled preference data, which is costly and limited, motivating the use of data augmentation. Existing augmentation approaches typically operate at the representation or semantic level and remain agnostic to the reward model's estimation difficulty. In this paper, we propose MARS, an adaptive, margin-aware augmentation and sampling strategy that explicitly targets the reward model's ambiguous cases and failure modes. MARS concentrates augmentation on low-margin (ambiguous) preference pairs, where the reward model is most uncertain, and iteratively refines the training distribution via hard-sample augmentation. We provide theoretical guarantees showing that this strategy increases the average curvature of the loss function, thereby enhancing information and improving conditioning, along with empirical results demonstrating consistent gains over uniform augmentation for robust reward modeling.
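The abstract does not spell out the sampling rule, so the following is only a minimal sketch of margin-aware sampling under stated assumptions: a Bradley-Terry style reward model whose margin for a pair is the reward of the chosen response minus the reward of the rejected one, and a hypothetical softmax weighting over the negative absolute margin. The names `margin_aware_weights`, `sample_pairs_to_augment`, and the `temperature` parameter are illustrative and not taken from the paper. The helper `bt_curvature` shows why low-margin pairs matter for curvature: the second derivative of the per-pair loss $-\log \sigma(m)$ with respect to the margin $m$ is $\sigma(m)(1-\sigma(m))$, which peaks at $m = 0$.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def bt_curvature(margin):
    """Second derivative of -log(sigmoid(m)) with respect to the margin m."""
    s = sigmoid(margin)
    return s * (1.0 - s)


def margin_aware_weights(margins, temperature=1.0):
    """Assumed weighting (not from the paper): softmax over -|margin| / temperature.

    Pairs with a margin near zero (ambiguous pairs) receive the largest weight.
    """
    scores = [-abs(m) / temperature for m in margins]
    top = max(scores)  # subtract the max before exponentiating for stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sample_pairs_to_augment(margins, k, temperature=1.0, seed=0):
    """Draw k pair indices (with replacement) in proportion to the weights."""
    rng = random.Random(seed)
    weights = margin_aware_weights(margins, temperature)
    return rng.choices(range(len(margins)), weights=weights, k=k)


# Illustrative margins r(chosen) - r(rejected) from a current reward model.
margins = [0.05, -0.1, 2.5, -3.0, 0.8]
weights = margin_aware_weights(margins, temperature=0.5)
picked = sample_pairs_to_augment(margins, k=8, temperature=0.5)
```

In an iterative scheme of the kind the abstract describes, the margins would be recomputed after each round of reward-model training, so the sampling distribution tracks whichever pairs the current model finds ambiguous. A smaller `temperature` concentrates augmentation more sharply on the lowest-margin pairs, while a large one approaches uniform augmentation.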
