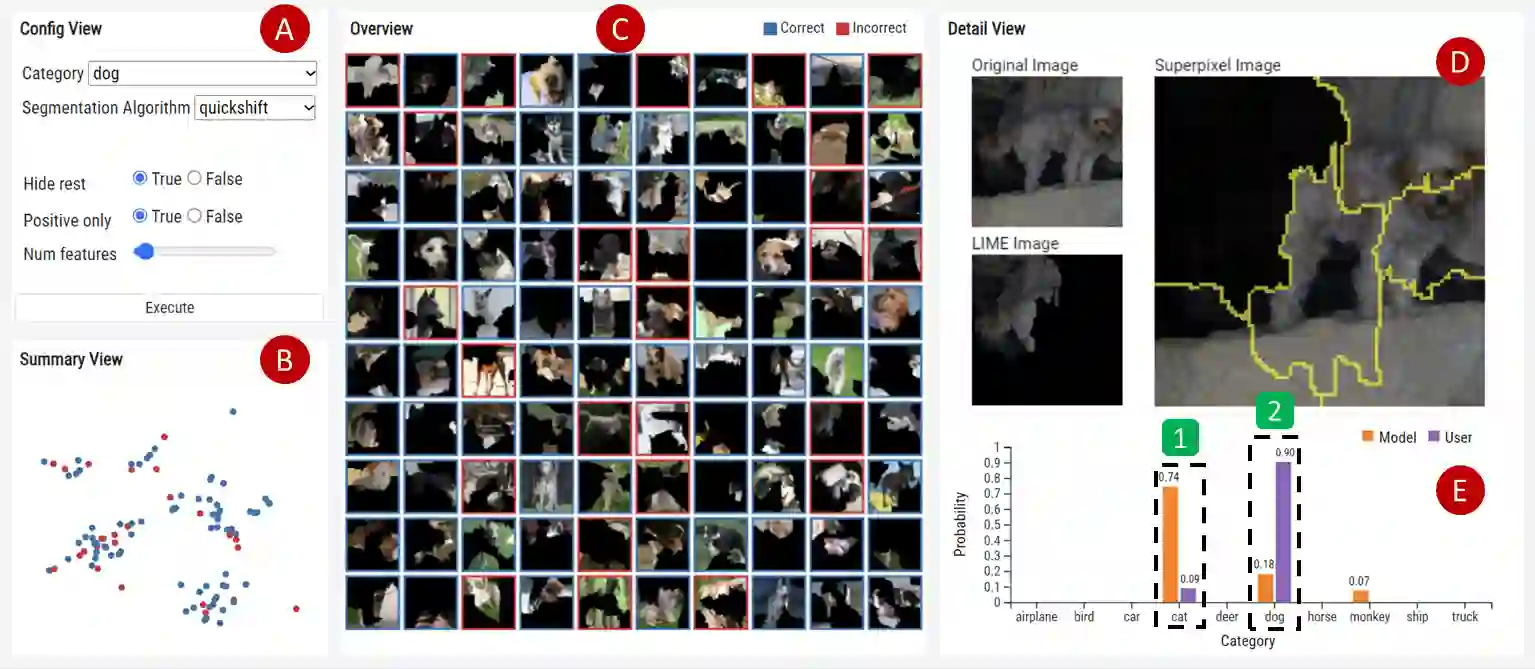

Explainable Artificial Intelligence (XAI) has become increasingly important for interpreting model predictions. Among the leading XAI techniques, Local Interpretable Model-agnostic Explanations (LIME) is the most frequently used because it helps people understand complex models. However, LIME analyzes only a single image at a time. It also lacks interaction mechanisms for inspecting its results and for directly manipulating the factors that affect them. To address these issues, we introduce LIMEVis, an interactive visualization tool that improves the LIME analysis workflow by enabling users to explore multiple LIME results simultaneously and modify them directly. With LIMEVis, we were able to conveniently identify common features across images that a model appears to rely on most when classifying a category. Additionally, by interactively modifying the LIME results, we were able to determine which segments of an image influence the model's classification.
