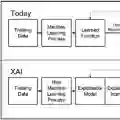

The evaluation of eXplainable Artificial Intelligence (XAI) methods is a rapidly growing field characterized by a wide variety of approaches. This diversity reflects the complexity of XAI evaluation: unlike traditional AI assessment, it lacks a universally correct ground truth for explanations, which makes objective evaluation challenging. One promising way to address this issue is the use of what we term Synthetic Artificial Intelligence Ground truth (SAIG) methods, which generate artificial ground truths so that XAI techniques can be evaluated directly. This paper presents the first review and analysis of SAIG methods. We introduce a novel taxonomy that classifies these approaches according to seven key features that distinguish different SAIG methods. Our comparative study reveals a concerning lack of consensus on the most effective XAI evaluation techniques, underscoring the need for further research and standardization in this area.
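The abstract describes SAIG methods only at a high level. As a minimal sketch of the general idea, assuming a hand-built linear model whose relevant features are known by construction, the example below scores two explainers against that synthetic ground truth. The model weights, the occlusion explainer, the random baseline explainer, and the precision-at-k metric are all hypothetical illustrations chosen here; they are not methods taken from the paper.

```python
import random
from typing import Callable, List, Sequence, Set

Model = Callable[[Sequence[float]], float]
Explainer = Callable[[Model, Sequence[float]], List[float]]

# Hypothetical glass-box model: only features 0 and 1 influence the output,
# so the set {0, 1} acts as the synthetic ground truth for any explanation.
WEIGHTS = [3.0, -2.0, 0.0, 0.0, 0.0]
GROUND_TRUTH: Set[int] = {i for i, w in enumerate(WEIGHTS) if w != 0.0}


def model(x: Sequence[float]) -> float:
    """Linear model whose feature relevance is known by construction."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x))


def occlusion_attribution(f: Model, x: Sequence[float],
                          baseline: float = 0.0) -> List[float]:
    """Score feature i by |f(x) - f(x with feature i set to baseline)|."""
    base_out = f(x)
    scores = []
    for i in range(len(x)):
        perturbed = list(x)
        perturbed[i] = baseline
        scores.append(abs(base_out - f(perturbed)))
    return scores


_noise = random.Random(1)


def random_attribution(f: Model, x: Sequence[float]) -> List[float]:
    """Baseline explainer that ignores the model and returns random scores."""
    return [_noise.random() for _ in x]


def precision_at_k(scores: Sequence[float], truth: Set[int], k: int) -> float:
    """Fraction of the k highest-scored features that are truly relevant."""
    top_k = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    return sum(1 for i in top_k if i in truth) / k


def mean_precision(explainer: Explainer, n_samples: int = 100,
                   seed: int = 0) -> float:
    """Average precision@|ground truth| of an explainer over random inputs."""
    rng = random.Random(seed)
    k = len(GROUND_TRUTH)
    total = 0.0
    for _ in range(n_samples):
        x = [rng.uniform(-1.0, 1.0) for _ in WEIGHTS]
        total += precision_at_k(explainer(model, x), GROUND_TRUTH, k)
    return total / n_samples


if __name__ == "__main__":
    print("occlusion:", mean_precision(occlusion_attribution))
    print("random:   ", mean_precision(random_attribution))
```

Because the ground truth is fixed when the data-generating model is built, the occlusion explainer can be checked directly (here it recovers features 0 and 1 on every sample), while the random explainer scores far lower. Real SAIG methods differ in how they construct such ground truths, which is what the taxonomy in this paper classifies.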
