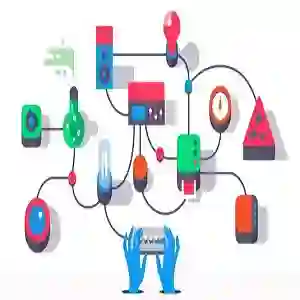

Large Language Models (LLMs) have demonstrated significant reasoning capabilities in robotic systems. However, their deployment in multi-robot systems remains fragmented and struggles to handle complex task dependencies and parallel execution. This study introduces DART-LLM (Dependency-Aware Multi-Robot Task Decomposition and Execution using Large Language Models), a system designed to address these challenges. DART-LLM uses LLMs to parse natural language instructions and decompose them into multiple subtasks with dependencies, establishing complex task sequences that enable efficient coordination and parallel execution in multi-robot systems. The system comprises a QA LLM module, Breakdown Function modules, an Actuation module, and a Vision-Language Model (VLM)-based object detection module, which together carry a natural language instruction through task decomposition to robot actions. Experimental results demonstrate that DART-LLM excels at long-horizon tasks and collaborative tasks with complex dependencies. Even with smaller models such as Llama 3.1 8B, the system achieves good performance, highlighting DART-LLM's robustness to model size. Videos and code are available on the project website: https://wyd0817.github.io/project-dart-llm/
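
To make the idea of dependency-aware decomposition concrete, the following is a minimal sketch of how subtasks with dependencies could be grouped into stages that run in parallel. It is not the DART-LLM implementation: the `Subtask` class, the `parallel_waves` helper, and the robot and task names are all hypothetical and chosen only for illustration. The scheduling uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Subtask:
    """Hypothetical subtask record: a name, an assigned robot, and prerequisites."""
    name: str
    robot: str
    depends_on: list[str] = field(default_factory=list)


def parallel_waves(subtasks):
    """Group subtasks into waves.

    Every subtask in a wave has all of its dependencies completed in earlier
    waves, so the subtasks within one wave may be dispatched in parallel.
    """
    # TopologicalSorter expects a mapping: node -> iterable of predecessors.
    sorter = TopologicalSorter({t.name: t.depends_on for t in subtasks})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave as finished
    return waves


# Illustrative plan (assumed, not taken from the paper): two robots, four subtasks.
plan = [
    Subtask("excavate_soil", "excavator_1"),
    Subtask("clear_path", "dump_truck_1"),
    Subtask("load_soil", "excavator_1", ["excavate_soil", "clear_path"]),
    Subtask("haul_soil", "dump_truck_1", ["load_soil"]),
]

waves = parallel_waves(plan)
for i, wave in enumerate(waves, start=1):
    print(f"wave {i}: {wave}")
```

In this sketch the two independent subtasks land in the first wave and can run concurrently on different robots, while `load_soil` waits for both to finish. A real system would also need to handle execution failures and conflicts over the same robot within a wave, which are out of scope here.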
