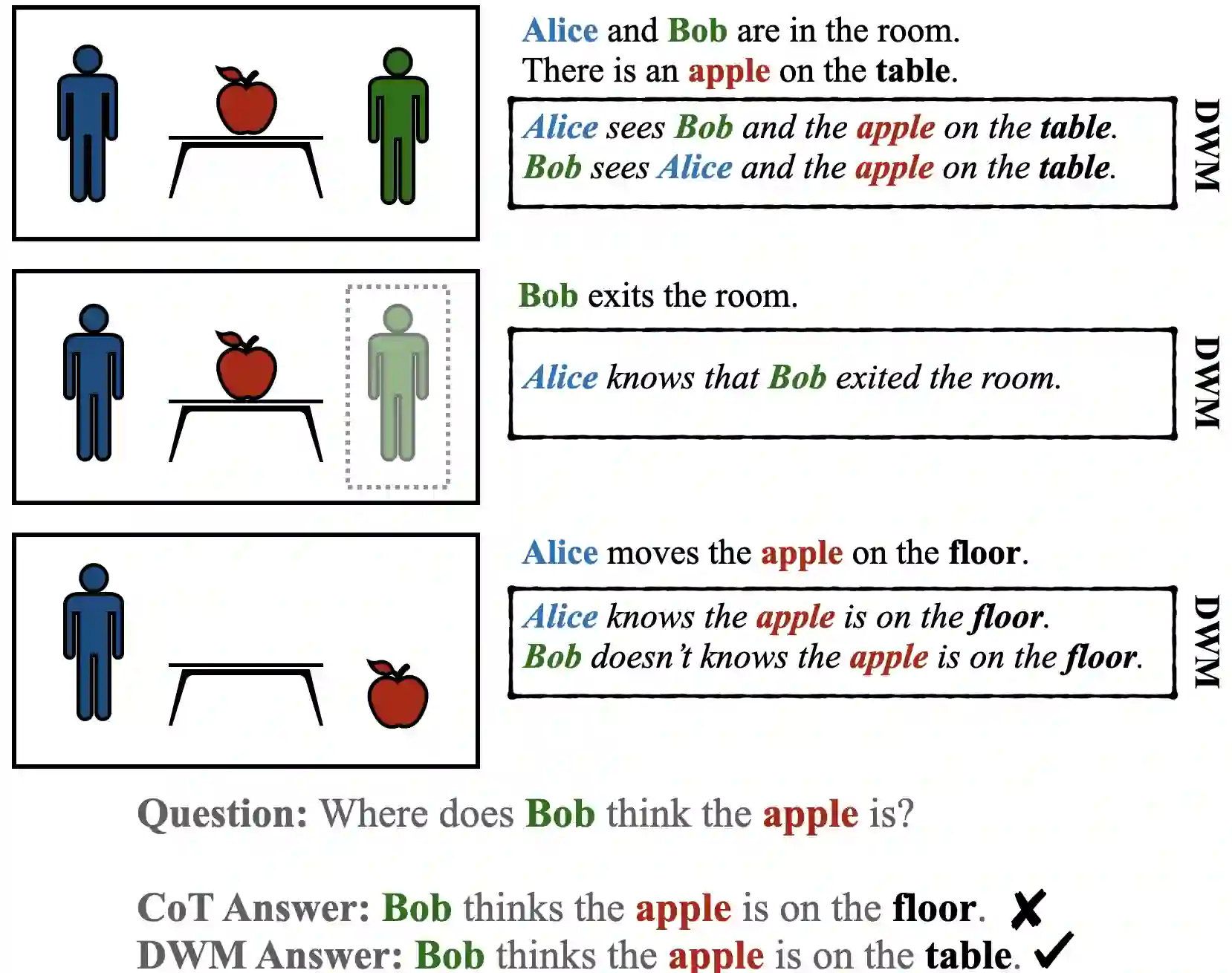

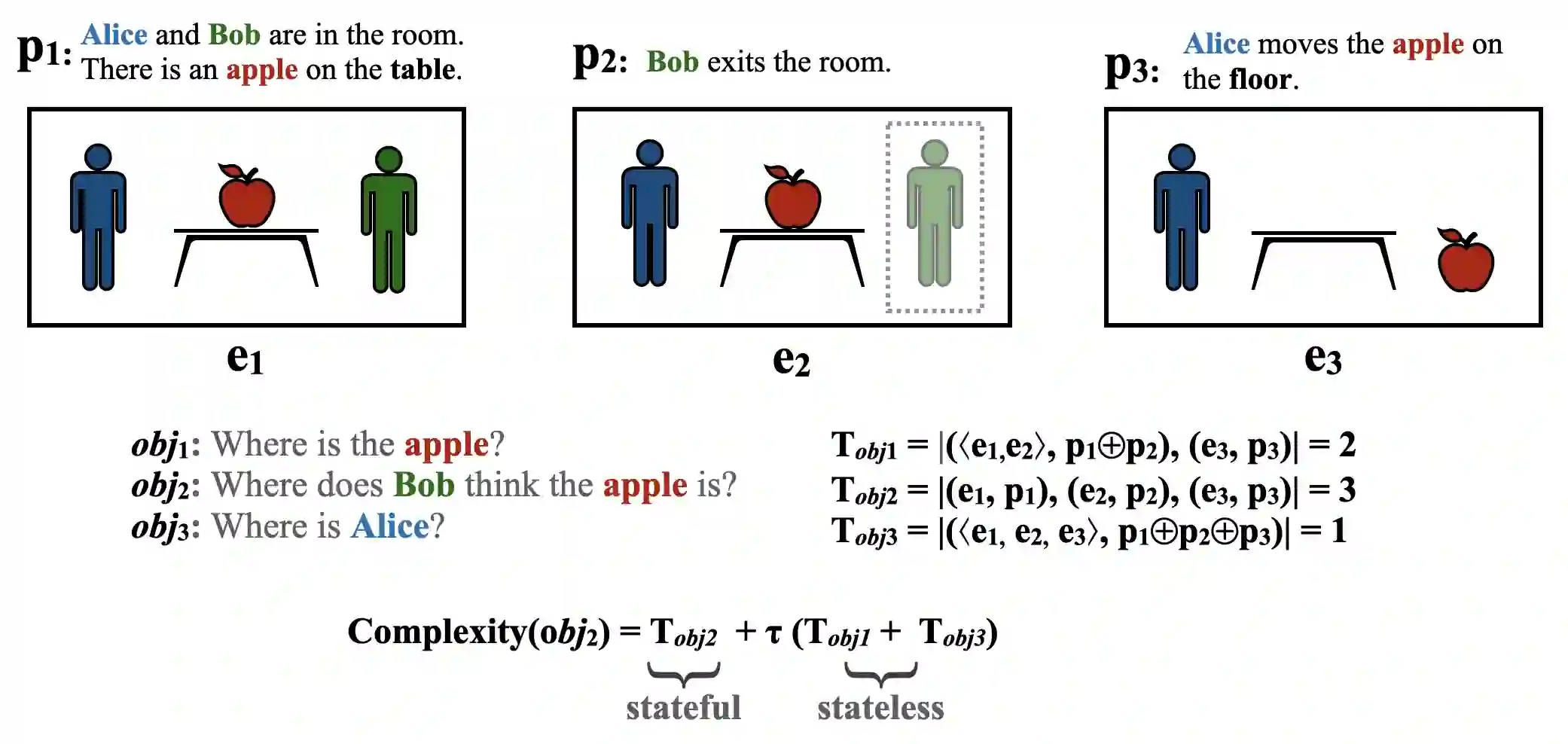

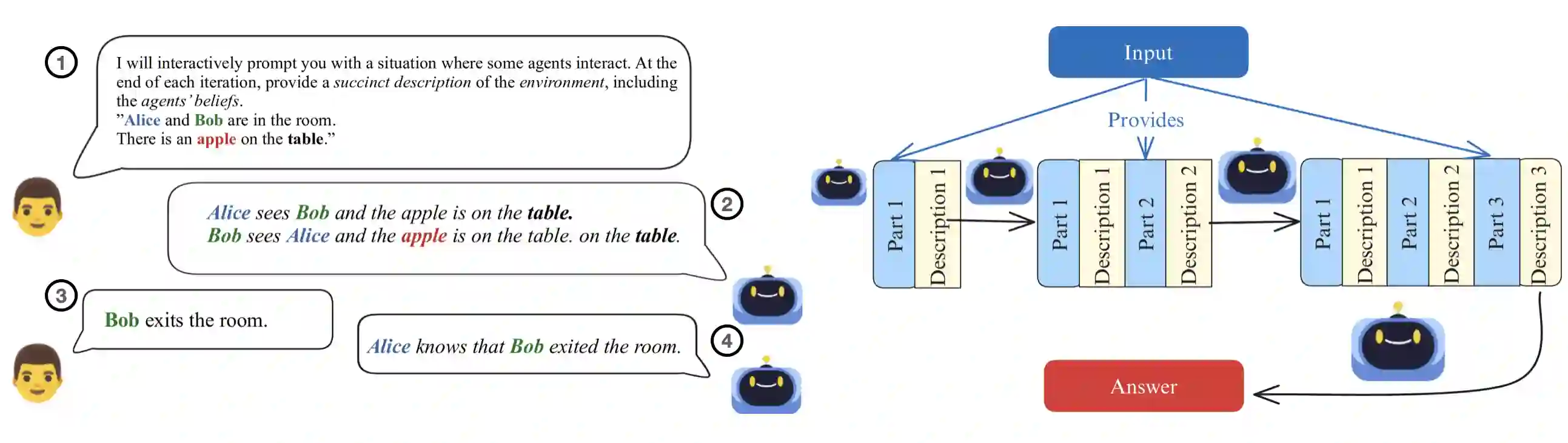

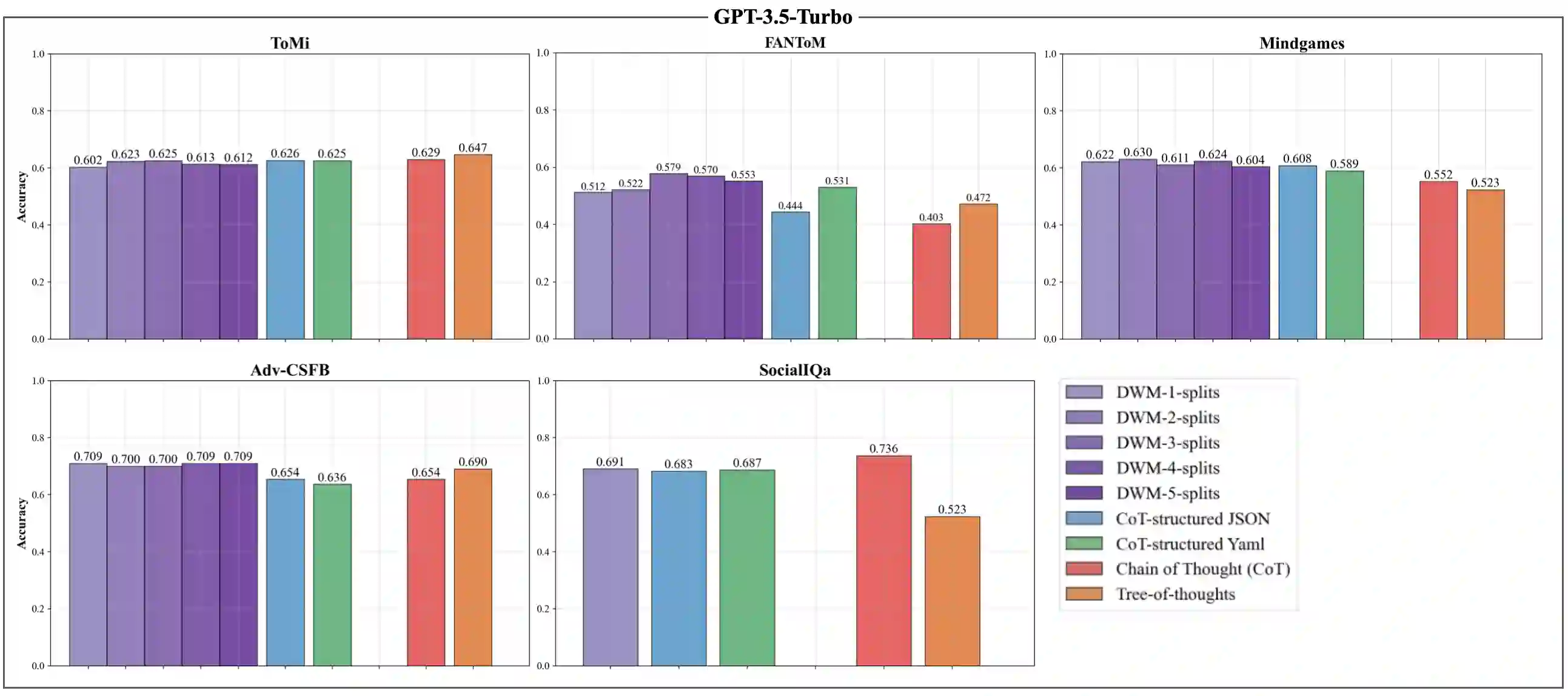

Theory of Mind (ToM) can be used to assess the capabilities of Large Language Models (LLMs) in complex scenarios that require social reasoning. While the research community has proposed many ToM benchmarks, their difficulty varies greatly and their complexity is not well defined. This work proposes a framework to measure the complexity of ToM tasks. We quantify a problem's complexity as the number of states necessary to solve it correctly. Our complexity measure also accounts for spurious states, which are added to a ToM problem to make it appear harder than it is. We use our method to assess the complexity of five widely adopted ToM benchmarks. On top of this framework, we design a prompting technique that augments the information available to a model with a description of how the environment changes as the agents interact. We name this technique Discrete World Models (DWM) and show that it elicits superior performance on ToM tasks.
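
To make the idea behind DWM more concrete, the following is a minimal sketch of how such prompts might be assembled. It is not the authors' implementation: the abstract does not specify the splitting strategy or the prompt wording, so the helper names (`split_into_chunks`, `build_dwm_prompts`), the even split into chunks, and the text of `STATE_REQUEST` are all assumptions made for illustration.

```python
from typing import List

# Hypothetical wording; the abstract does not give the actual prompt text.
STATE_REQUEST = (
    "Describe the current state of the environment and what each agent "
    "believes after these events."
)


def split_into_chunks(sentences: List[str], n_chunks: int) -> List[List[str]]:
    """Split sentences into contiguous chunks of near-equal size.

    Assumption: an even split. Any other segmentation of the story
    would fit the same overall scheme.
    """
    if not sentences:
        raise ValueError("sentences must not be empty")
    if n_chunks < 1:
        raise ValueError("n_chunks must be at least 1")
    n_chunks = min(n_chunks, len(sentences))
    base, extra = divmod(len(sentences), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks receive one additional sentence.
        size = base + (1 if i < extra else 0)
        chunks.append(sentences[start:start + size])
        start += size
    return chunks


def build_dwm_prompts(sentences: List[str], question: str, n_chunks: int) -> List[str]:
    """Build one state-description prompt per chunk, then the final question."""
    prompts = []
    for chunk in split_into_chunks(sentences, n_chunks):
        prompts.append(" ".join(chunk) + "\n" + STATE_REQUEST)
    prompts.append(question)
    return prompts


# Illustrative false-belief story, not taken from any specific benchmark.
story = [
    "Sally puts the marble in the basket.",
    "Sally leaves the room.",
    "Anne moves the marble to the box.",
    "Sally comes back.",
]
prompts = build_dwm_prompts(story, "Where will Sally look for the marble?", 2)
```

In this sketch each prompt would be sent to the model in turn, so that its own state descriptions are part of the context by the time the final question is asked. The number of chunks is left as a free parameter.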
