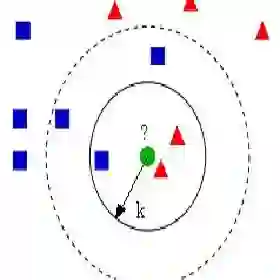

Approximate $k$-nearest neighbor (AKNN) search is a fundamental problem with wide applications. To reduce memory and accelerate search, vector quantization is widely adopted. However, existing quantization methods either rely on codebooks, which slow queries down with costly table lookups, or adopt dimension-wise quantization, which maps each vector dimension to a short quantized code for fast search. The latter suffers from a fixed compression ratio because the quantized code length is inherently tied to the original dimensionality. To overcome these limitations, we propose MRQ, a new approach that integrates projection with quantization. The key insight is that, after projection, high-dimensional vectors tend to concentrate most of their information in the leading dimensions. MRQ exploits this property by quantizing only the information-dense projected subspace, whose size is fully user-tunable, thereby decoupling the quantized code length from the original dimensionality. The remaining tail dimensions are captured by lightweight statistical summaries. In this way, MRQ improves the query efficiency of existing quantization methods while supporting arbitrary compression ratios through the projection step. Extensive experiments show that MRQ substantially outperforms the state-of-the-art method, achieving up to 3x faster search with only one-third of the quantization bits at comparable accuracy.
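
The general idea of projecting, quantizing only the leading dimensions, and summarizing the tail can be illustrated with a toy sketch. This is not MRQ's actual algorithm: it assumes PCA as the projection, uniform per-dimension scalar quantization for the head, and the tail's squared norm as the only statistical summary. The function names (`fit_pca`, `encode`, `estimate_sq_dists`) and the synthetic data are hypothetical.

```python
import numpy as np


def fit_pca(data):
    """Return the data mean and a PCA basis whose columns are sorted by decreasing variance."""
    mean = data.mean(axis=0)
    cov = np.cov(data - mean, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]
    return mean, eigvecs[:, order]


def encode(data, mean, basis, head_dims, bits=4):
    """Project, scalar-quantize the leading `head_dims` dims, and keep only the tail's squared norm."""
    assert 1 <= bits <= 8, "codes are stored as uint8"
    proj = (data - mean) @ basis
    head, tail = proj[:, :head_dims], proj[:, head_dims:]
    lo, hi = head.min(axis=0), head.max(axis=0)
    levels = 2 ** bits - 1
    scale = np.where(hi > lo, (hi - lo) / levels, 1.0)  # avoid division by zero on constant dims
    codes = np.round((head - lo) / scale).astype(np.uint8)
    tail_sq_norm = (tail ** 2).sum(axis=1)
    return codes, lo, scale, tail_sq_norm


def estimate_sq_dists(query, mean, basis, head_dims, codes, lo, scale, tail_sq_norm):
    """Estimate squared L2 distances from one query to every encoded vector."""
    q = (query - mean) @ basis
    q_head, q_tail = q[:head_dims], q[head_dims:]
    # Head: dequantize and compute the distance (exact up to quantization error).
    head_hat = codes * scale + lo
    head_term = ((head_hat - q_head) ** 2).sum(axis=1)
    # Tail: ||q_t - x_t||^2 = ||q_t||^2 + ||x_t||^2 - 2<q_t, x_t>.
    # The cross term is dropped, i.e. assumed to be roughly zero on average.
    tail_term = (q_tail ** 2).sum() + tail_sq_norm
    return head_term + tail_term


# Usage on synthetic data whose per-dimension spread decays, so PCA packs energy up front.
rng = np.random.default_rng(0)
data = rng.standard_normal((1000, 64)) * np.linspace(5.0, 0.1, 64)
mean, basis = fit_pca(data)
codes, lo, scale, tail_sq_norm = encode(data, mean, basis, head_dims=16, bits=4)
query = data[0] + 0.01 * rng.standard_normal(64)
est = estimate_sq_dists(query, mean, basis, 16, codes, lo, scale, tail_sq_norm)
candidates = np.argsort(est)[:10]  # shortlist for optional exact re-ranking
```

Because the code length here depends only on `head_dims` and `bits`, not on the original dimensionality of 64, the compression ratio can be chosen freely. Dropping the cross term $\langle q_t, x_t \rangle$ is the crudest possible tail summary and biases the estimates upward; a practical method would likely need a better-calibrated correction.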
