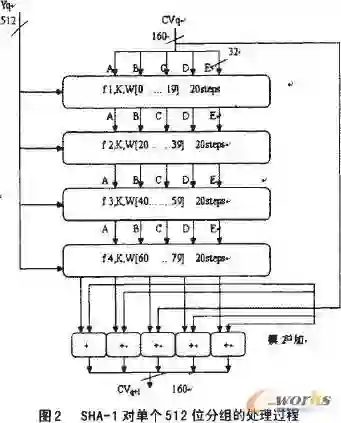

Generative pre-trained transformers (GPTs) are large language machine learning models that are unusually adept at producing novel and coherent natural language. This study examines the ability of GPT models to generate novel and correct versions, and notably very insecure versions, of implementations of the cryptographic hash function SHA-1. The GPT models Llama-2-70b-chat-h, Mistral-7B-Instruct-v0.1, and zephyr-7b-alpha are used. The models are prompted to rewrite each function using a modified version of the localGPT framework and langchain, which provide word-embedding context of the full source code and header files to the model. This resulted in over 150,000 function rewrite GPT output text blocks, approximately 50,000 of which could be parsed as C code and subsequently compiled. The generated code is analyzed for compilability, algorithmic correctness, memory leaks, compiler optimization stability, and character distance to the reference implementation. Remarkably, several generated function variants pose a high implementation security risk because they are correct for some test vectors but incorrect for others. Additionally, many function implementations did not match the reference SHA-1 algorithm but produced hashes that have some of the basic characteristics of hash functions. Many of the function rewrites contained serious flaws such as memory leaks, integer overflows, out-of-bounds accesses, use of uninitialised values, and compiler optimization instability. Compiler optimization settings and SHA-256 checksums of the compiled binaries are used to cluster implementations that are equivalent but may not have identical syntax. Using this clustering, over 100,000 novel and correct versions of the SHA-1 codebase were generated, in which each component C function of the reference implementation differs from the original code.
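Two of the checks described above, test-vector correctness and checksum-based clustering, can be illustrated with a minimal sketch. This is not the study's actual pipeline: Python's `hashlib` stands in for the reference implementation, `flawed_sha1` is a hypothetical variant invented here to mimic a padding bug, and the "binaries" passed to `cluster_by_checksum` are assumed to be raw bytes produced by compiling each variant under several optimization flags.

```python
import hashlib
from collections import defaultdict


def reference_sha1(msg: bytes) -> str:
    """Stand-in for the reference implementation (assumption: hashlib)."""
    return hashlib.sha1(msg).hexdigest()


def flawed_sha1(msg: bytes) -> str:
    """Hypothetical flawed variant: right for short inputs, wrong once the
    message no longer fits in a single padded 64-byte block (56+ bytes)."""
    if len(msg) < 56:
        return hashlib.sha1(msg).hexdigest()
    return hashlib.sha1(msg[:55]).hexdigest()


def classify(candidate, messages) -> str:
    """Compare a candidate against the reference on every test message."""
    passed = sum(candidate(m) == reference_sha1(m) for m in messages)
    if passed == len(messages):
        return "correct"
    return "partially correct" if passed else "incorrect"


def cluster_by_checksum(builds: dict) -> dict:
    """Group variants whose binaries are byte-identical at every flag.

    builds maps variant name -> {optimization flag -> binary bytes}.
    """
    clusters = defaultdict(list)
    for name, per_flag in builds.items():
        key = tuple(
            (flag, hashlib.sha256(blob).hexdigest())
            for flag, blob in sorted(per_flag.items())
        )
        clusters[key].append(name)
    return dict(clusters)


# Message lengths chosen to straddle the 55/56-byte padding boundary.
MESSAGES = [b"", b"abc", b"a" * 55, b"a" * 56, b"a" * 64, b"a" * 1000]
```

With only the first three messages, `flawed_sha1` would be classified as correct, which is the kind of risk the abstract highlights: a test suite that misses a boundary case can accept an insecure variant.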
