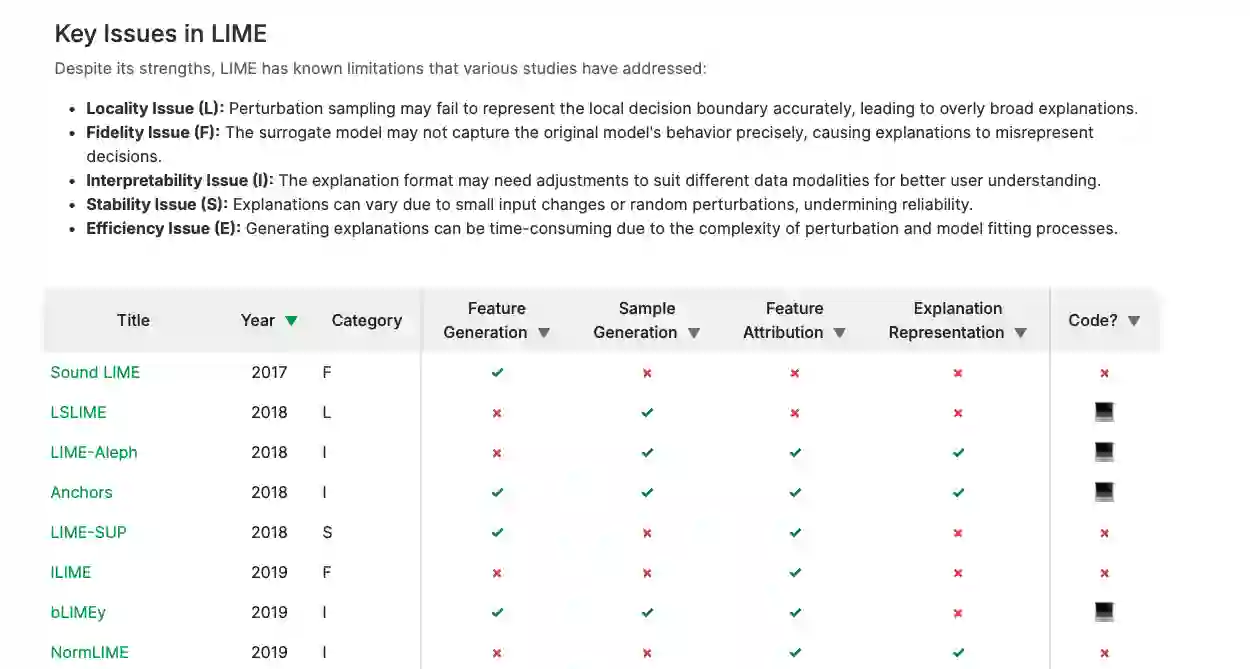

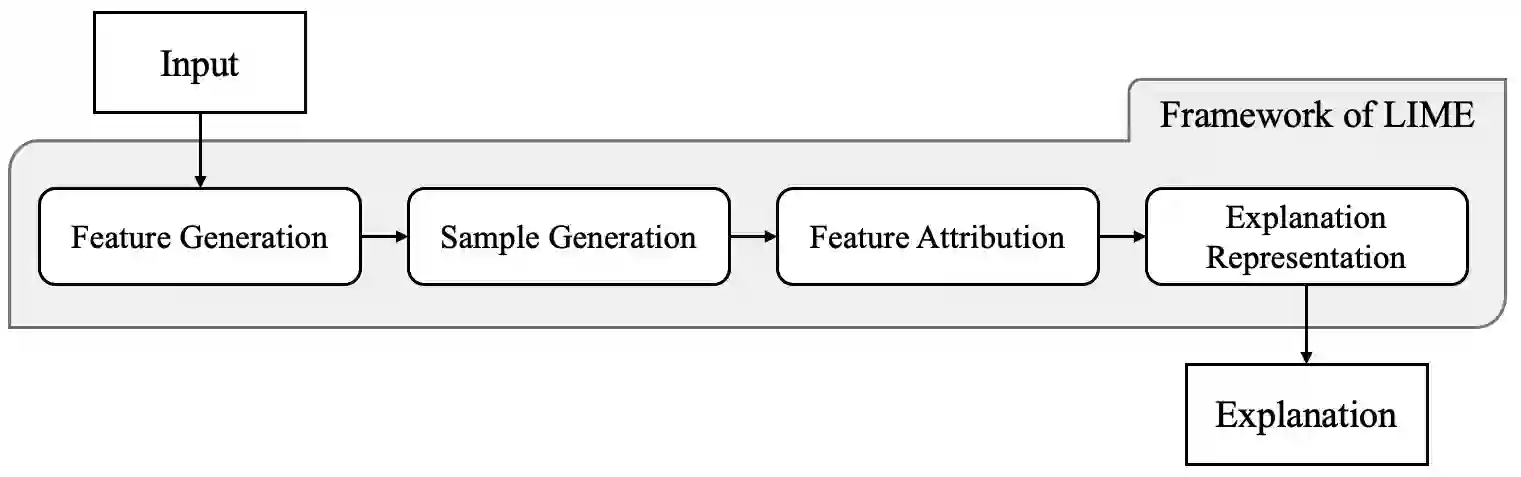

As neural networks become dominant in essential systems, Explainable Artificial Intelligence (XAI) plays a crucial role in fostering trust and detecting potential misbehavior of opaque models. LIME (Local Interpretable Model-agnostic Explanations) is among the most prominent model-agnostic approaches, generating explanations by approximating the behavior of black-box models around specific instances. Despite its popularity, LIME faces challenges related to fidelity, stability, and applicability to domain-specific problems. Numerous adaptations and enhancements have been proposed to address these issues, but the growing number of developments can be overwhelming, complicating efforts to navigate LIME-related research. To the best of our knowledge, this is the first survey to comprehensively explore and collect LIME's foundational concepts and known limitations. We categorize and compare its various enhancements, offering a structured taxonomy based on intermediate steps and key issues. Our analysis provides a holistic overview of advancements in LIME, guiding future research and helping practitioners identify suitable approaches. Additionally, we provide a continuously updated interactive website (https://patrick-knab.github.io/which-lime-to-trust/), offering a concise and accessible overview of the survey.
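The core idea described above, approximating a black-box model around one instance, can be illustrated with a minimal sketch. This is not the reference LIME implementation: it assumes purely numeric tabular input, Gaussian perturbations, an exponential proximity kernel, and an unregularized weighted least-squares surrogate. The function name `lime_sketch` and the parameters `sigma` and `kernel_width` are hypothetical choices made for illustration.

```python
import numpy as np


def lime_sketch(predict_fn, x, num_samples=1000, sigma=0.5,
                kernel_width=0.75, seed=0):
    """Fit a local linear surrogate to predict_fn around instance x.

    Simplified illustration only (assumptions: numeric features,
    Gaussian sampling, no feature selection or regularization).
    Returns (intercept, weights), where weights[i] approximates the
    local effect of feature i on the black-box output.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)

    # 1. Sample perturbed points in the neighborhood of x.
    Z = x + sigma * rng.standard_normal((num_samples, x.size))

    # 2. Query the black-box model on the perturbed points.
    y = np.asarray(predict_fn(Z), dtype=float)

    # 3. Weight each sample by its proximity to x.
    dist = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-(dist ** 2) / (kernel_width ** 2))

    # 4. Solve weighted least squares on features centered at x,
    #    so the intercept approximates the prediction at x itself.
    A = np.hstack([np.ones((num_samples, 1)), Z - x])
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return coef[0], coef[1:]


if __name__ == "__main__":
    # Hypothetical black box: a plain linear function, so the
    # surrogate should recover its coefficients.
    def black_box(Z):
        return 3.0 * Z[:, 0] - 2.0 * Z[:, 1] + 1.0

    intercept, weights = lime_sketch(black_box, [1.0, 2.0])
    print(intercept, weights)
```

The returned weights play the role of the explanation: each one estimates how strongly a feature influences the model's output near the chosen instance. The fidelity and stability issues mentioned above show up in exactly the pieces this sketch fixes by assumption, namely the sampling scheme, the kernel width, and the random seed.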
