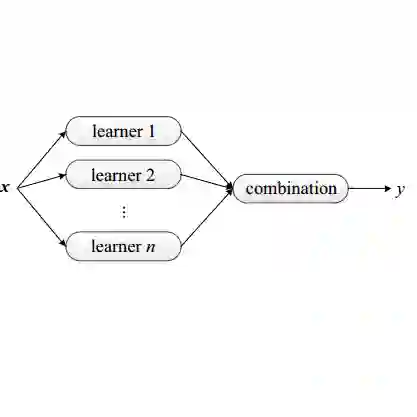

We present a theory of ensemble diversity, explaining the nature of diversity for a wide range of supervised learning scenarios. This challenge has been referred to as the holy grail of ensemble learning, an open research issue for over 30 years. Our framework reveals that diversity is in fact a hidden dimension in the bias-variance decomposition of the ensemble loss. We prove a family of exact bias-variance-diversity decompositions, for a wide range of losses in both regression and classification, e.g., squared, cross-entropy, and Poisson losses. For losses where an additive bias-variance decomposition is not available (e.g., 0/1 loss) we present an alternative approach: quantifying the effects of diversity, which turn out to be dependent on the label distribution. Overall, we argue that diversity is a measure of model fit, in precisely the same sense as bias and variance, but accounting for statistical dependencies between ensemble members. Thus, we should not be maximising diversity, as so many works aim to do; instead, we have a bias/variance/diversity trade-off to manage.
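To make the squared-loss case concrete, the sketch below numerically checks one well-known instance of such a decomposition: for an ensemble that averages its members' predictions, the expected ensemble loss equals the average member bias plus the average member variance minus a diversity term (the expected spread of members around the ensemble mean). This is only an illustration under stated assumptions, not the paper's general result: it assumes a fixed, noise-free target at a single test point, an arithmetic-mean combiner, and synthetic predictions. The function name `bvd_squared_loss` and the data-generating setup are hypothetical.

```python
import numpy as np


def bvd_squared_loss(preds, y):
    """Bias-variance-diversity terms for squared loss at one test point.

    preds: array of shape (T, M) holding the predictions of M ensemble
           members over T independent training runs (hypothetical setup).
    y:     scalar target, assumed fixed and noise-free.
    """
    member_means = preds.mean(axis=0)   # mean prediction of each member, shape (M,)
    ensemble = preds.mean(axis=1)       # averaged ensemble prediction per run, shape (T,)

    avg_bias = np.mean((member_means - y) ** 2)   # average squared bias of members
    avg_variance = np.mean(preds.var(axis=0))     # average member variance (ddof=0)
    # Diversity: mean squared deviation of members from the ensemble prediction.
    diversity = np.mean((preds - ensemble[:, None]) ** 2)
    ensemble_risk = np.mean((ensemble - y) ** 2)  # expected squared loss of the ensemble
    return ensemble_risk, avg_bias, avg_variance, diversity


# Synthetic predictions: a shared noise term makes the members correlated.
rng = np.random.default_rng(0)
T, M, y = 2000, 5, 1.0
shared = rng.normal(size=(T, 1))
preds = y + 0.3 + 0.5 * shared + rng.normal(scale=0.8, size=(T, M))

risk, bias, var, div = bvd_squared_loss(preds, y)
print(f"ensemble risk            : {risk:.6f}")
print(f"bias + variance - divers.: {bias + var - div:.6f}")
```

The two printed values agree up to floating-point error, because the identity holds exactly for the empirical distribution over runs. Increasing the weight on `shared` makes the members more correlated and shrinks the diversity term, but it also changes the variance term, which is the trade-off the abstract refers to.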
