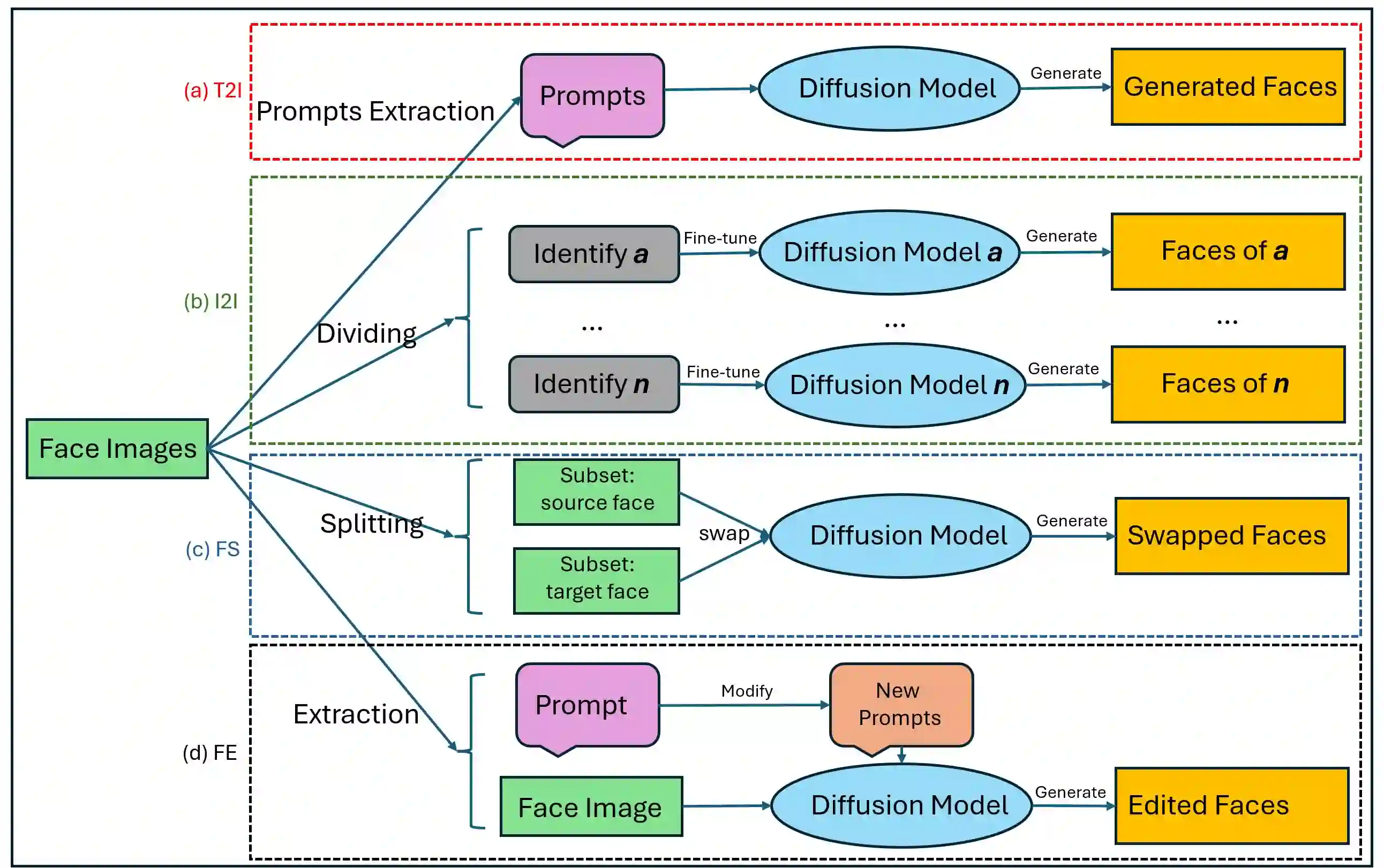

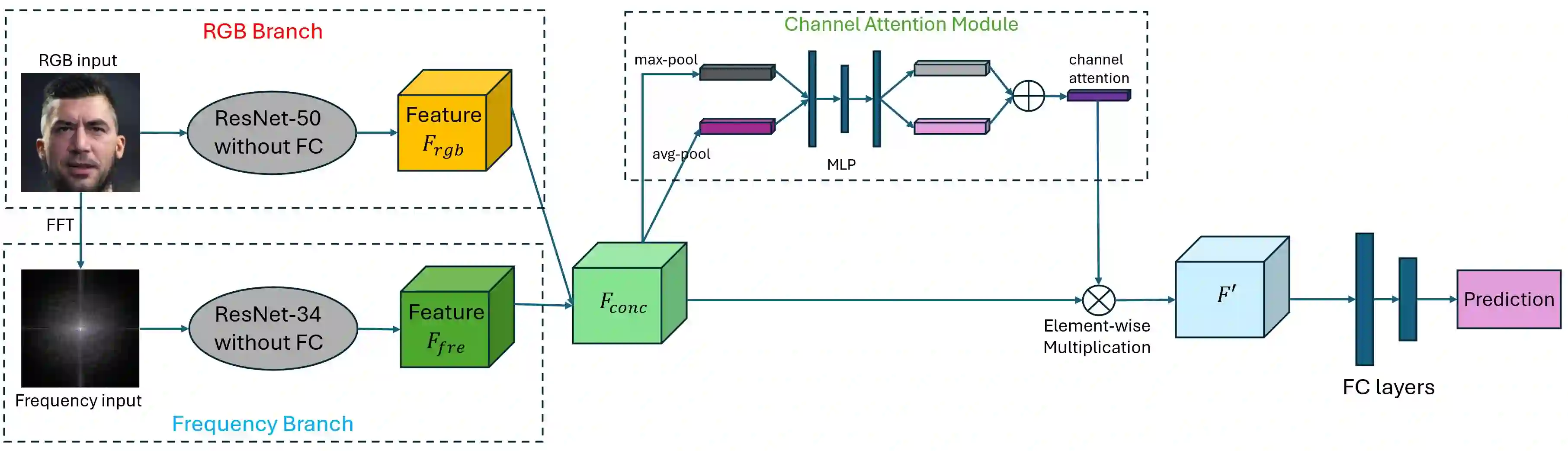

The rapid advancement of generative AI has enabled the creation of highly realistic forged facial images, posing significant threats to AI security, digital media integrity, and public trust. Face forgery techniques, ranging from face swapping and attribute editing to powerful diffusion-based image synthesis, are increasingly used for malicious purposes such as misinformation, identity fraud, and defamation. This growing challenge underscores the urgent need for robust and generalizable face forgery detection as a critical component of AI security infrastructure. In this work, we propose a novel dual-branch convolutional neural network for face forgery detection that leverages complementary cues from the spatial and frequency domains. The RGB branch captures semantic information, while the frequency branch focuses on high-frequency artifacts that are difficult for generative models to suppress. A channel attention module adaptively fuses these heterogeneous features, emphasizing the channels most informative for forgery discrimination. To guide training, we design a unified loss function, FSC Loss, that combines focal loss, supervised contrastive loss, and a frequency center margin loss to enhance class separability and robustness. We evaluate our model on the DiFF benchmark, which includes forged images produced by four representative categories of methods: text-to-image, image-to-image, face swap, and face edit. Our method achieves strong performance across all four categories and exceeds average human accuracy. These results demonstrate the model's effectiveness and its potential contribution to safeguarding AI ecosystems against visual forgery attacks.
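To make the loss design more concrete, the following is a minimal sketch of how a three-term objective like FSC Loss could be assembled. The abstract does not give the exact formulation, so several things here are assumptions: the focal term uses the standard binary focal loss form with the commonly used defaults `alpha=0.25` and `gamma=2.0`, the function names `focal_loss` and `fsc_loss` are hypothetical, the supervised contrastive and frequency center margin terms are taken as precomputed scalars, and the weights `w_supcon` and `w_freq` are illustrative rather than values from the paper.

```python
import math


def focal_loss(p_fake, label, alpha=0.25, gamma=2.0):
    """Standard binary focal loss for a single sample.

    p_fake: predicted probability that the image is forged, in (0, 1).
    label:  1 for forged, 0 for real.
    alpha, gamma: common defaults, assumed here (not taken from the paper).
    """
    # Probability assigned to the true class, and its class weight.
    p_t = p_fake if label == 1 else 1.0 - p_fake
    alpha_t = alpha if label == 1 else 1.0 - alpha
    # Clamp to avoid log(0).
    p_t = min(max(p_t, 1e-7), 1.0 - 1e-7)
    # The (1 - p_t)^gamma factor down-weights well-classified samples.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


def fsc_loss(focal, supcon, freq_center, w_supcon=1.0, w_freq=1.0):
    """Hypothetical weighted sum of the three terms named in the abstract.

    supcon and freq_center are assumed to be computed elsewhere;
    the weights are illustrative placeholders.
    """
    return focal + w_supcon * supcon + w_freq * freq_center


# A confidently correct prediction contributes far less than a hard one.
easy = focal_loss(0.95, 1)
hard = focal_loss(0.30, 1)
print(f"easy sample: {easy:.6f}, hard sample: {hard:.6f}")
```

The weighted-sum structure is only one plausible way to combine the terms; the actual method may weight, schedule, or normalize them differently.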
