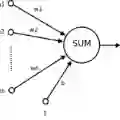

We investigate the robustness of sparse artificial neural networks trained with adaptive topology. We focus on a simple yet effective architecture consisting of three sparse layers with 99% sparsity followed by a dense layer, applied to image classification benchmarks such as MNIST and Fashion MNIST. By updating the topology of the sparse layers after every epoch, we achieve competitive accuracy despite a substantially reduced number of weights. Our primary contribution is a detailed analysis of the robustness of these networks under various perturbations, including random link removal, adversarial attacks, and link weight shuffling. Through extensive experiments, we show that adaptive topology not only improves efficiency but also preserves robustness. This work highlights adaptive sparse networks as a promising direction for developing efficient and reliable deep learning models.
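The abstract does not state which rule is used to update the sparse topology between epochs. The sketch below therefore *assumes* a SET-style (Sparse Evolutionary Training) update: after an epoch, a fraction `zeta` of the links with the smallest absolute weight is pruned, and the same number of links is regrown at random empty positions, so that sparsity stays fixed. It also illustrates two of the perturbations named above, random link removal and link weight shuffling. Adversarial attacks are not covered here. All function names, the `zeta` parameter, the initialization scale, and the dict-of-links representation are hypothetical choices made for readability rather than speed. None of this should be read as the authors' implementation.

```python
import random

# Hypothetical representation: a sparse layer is a dict {(i, j): weight},
# where (i, j) links input unit i to output unit j.

def init_sparse_layer(n_in, n_out, density, rng):
    """Create about density * n_in * n_out random links (density=0.01 -> 99% sparsity)."""
    n_links = max(1, round(density * n_in * n_out))
    all_positions = [(i, j) for i in range(n_in) for j in range(n_out)]
    return {pos: rng.gauss(0.0, 0.1) for pos in rng.sample(all_positions, n_links)}

def forward(layer, x, n_out):
    """Sparse matrix-vector product: y[j] = sum over existing links of x[i] * w[i, j]."""
    y = [0.0] * n_out
    for (i, j), w in layer.items():
        y[j] += x[i] * w
    return y

def evolve_topology(layer, n_in, n_out, zeta, rng):
    """Assumed SET-style update: prune the fraction zeta of weakest links by |w|,
    then regrow the same number at random empty positions (link count is preserved)."""
    new_layer = dict(layer)
    n_prune = int(zeta * len(new_layer))
    # Remove the links with the smallest weight magnitude.
    for pos in sorted(new_layer, key=lambda p: abs(new_layer[p]))[:n_prune]:
        del new_layer[pos]
    # Regrow at currently empty positions (these may include just-pruned ones).
    empty = [(i, j) for i in range(n_in) for j in range(n_out) if (i, j) not in new_layer]
    for pos in rng.sample(empty, n_prune):
        new_layer[pos] = rng.gauss(0.0, 0.1)
    return new_layer

def remove_random_links(layer, fraction, rng):
    """Perturbation: delete a random fraction of the existing links."""
    damaged = dict(layer)
    for pos in rng.sample(list(damaged), int(fraction * len(damaged))):
        del damaged[pos]
    return damaged

def shuffle_weights(layer, rng):
    """Perturbation: keep the topology but permute the weights across existing links."""
    positions = list(layer)
    weights = list(layer.values())
    rng.shuffle(weights)
    return dict(zip(positions, weights))

if __name__ == "__main__":
    rng = random.Random(0)
    layer = init_sparse_layer(100, 50, density=0.01, rng=rng)  # 50 of 5000 links
    layer = evolve_topology(layer, 100, 50, zeta=0.3, rng=rng)  # "between epochs"
    print(len(layer), len(remove_random_links(layer, 0.5, rng)))
```

Because the pruned and regrown counts are equal, each topology update leaves the layer at the same 99% sparsity. Weight shuffling, by contrast, preserves both the topology and the multiset of weight values and only changes which link carries which weight. It therefore isolates how much of the learned function depends on the precise weight assignment rather than on the connectivity pattern.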

翻译:本研究探讨了采用自适应拓扑训练的稀疏人工神经网络的鲁棒性。我们聚焦于一种简单而有效的架构,该架构由三个稀疏度达99%的稀疏层和一个稠密层组成,并应用于MNIST和Fashion MNIST等图像分类任务。通过在每个训练周期之间更新稀疏层的拓扑结构,我们在权重数量显著减少的情况下仍获得了具有竞争力的分类精度。我们的主要贡献是对这些网络鲁棒性的详细分析,探究了其在多种扰动下的性能,包括随机连接移除、对抗性攻击以及连接权重重排。通过大量实验,我们证明自适应拓扑不仅能提升效率,还能保持网络的鲁棒性。这项工作凸显了自适应稀疏网络作为开发高效可靠深度学习模型的一个有前景的研究方向。