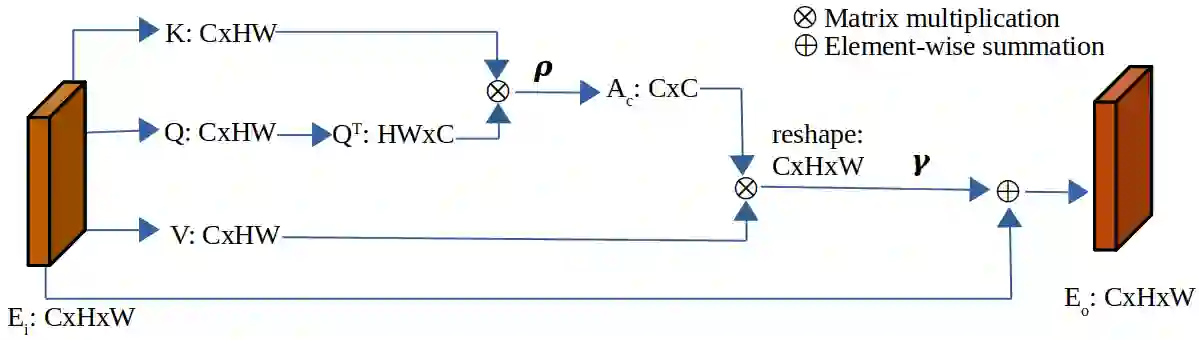

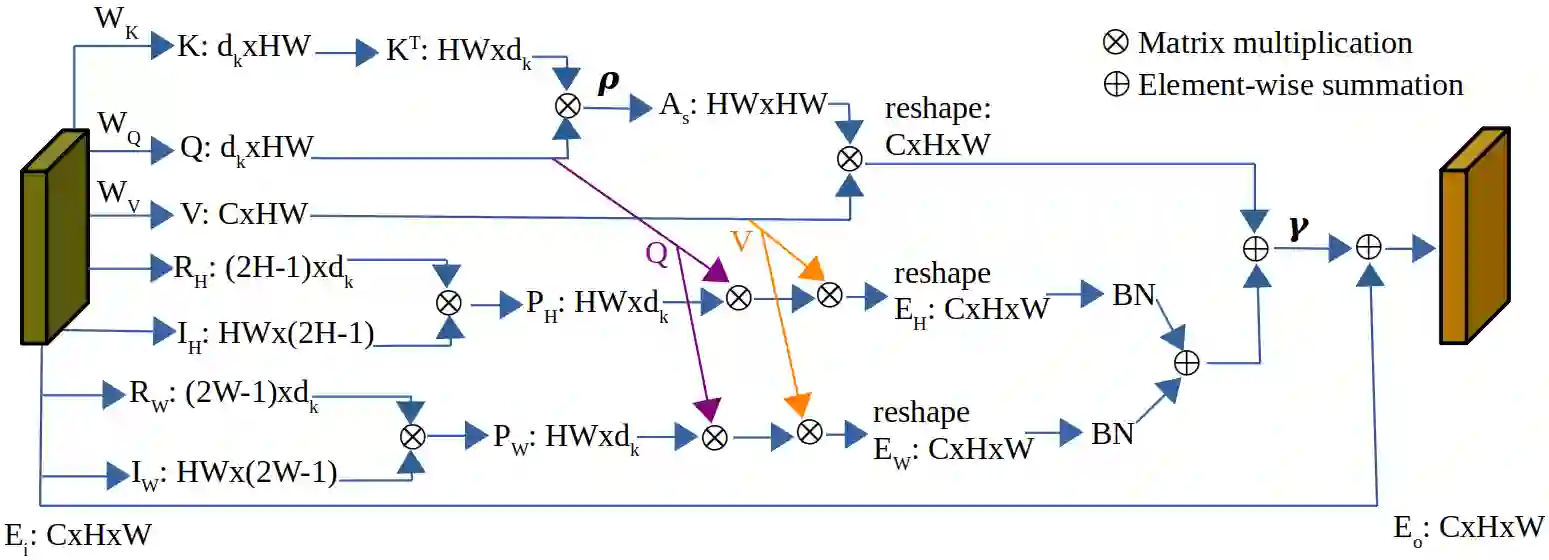

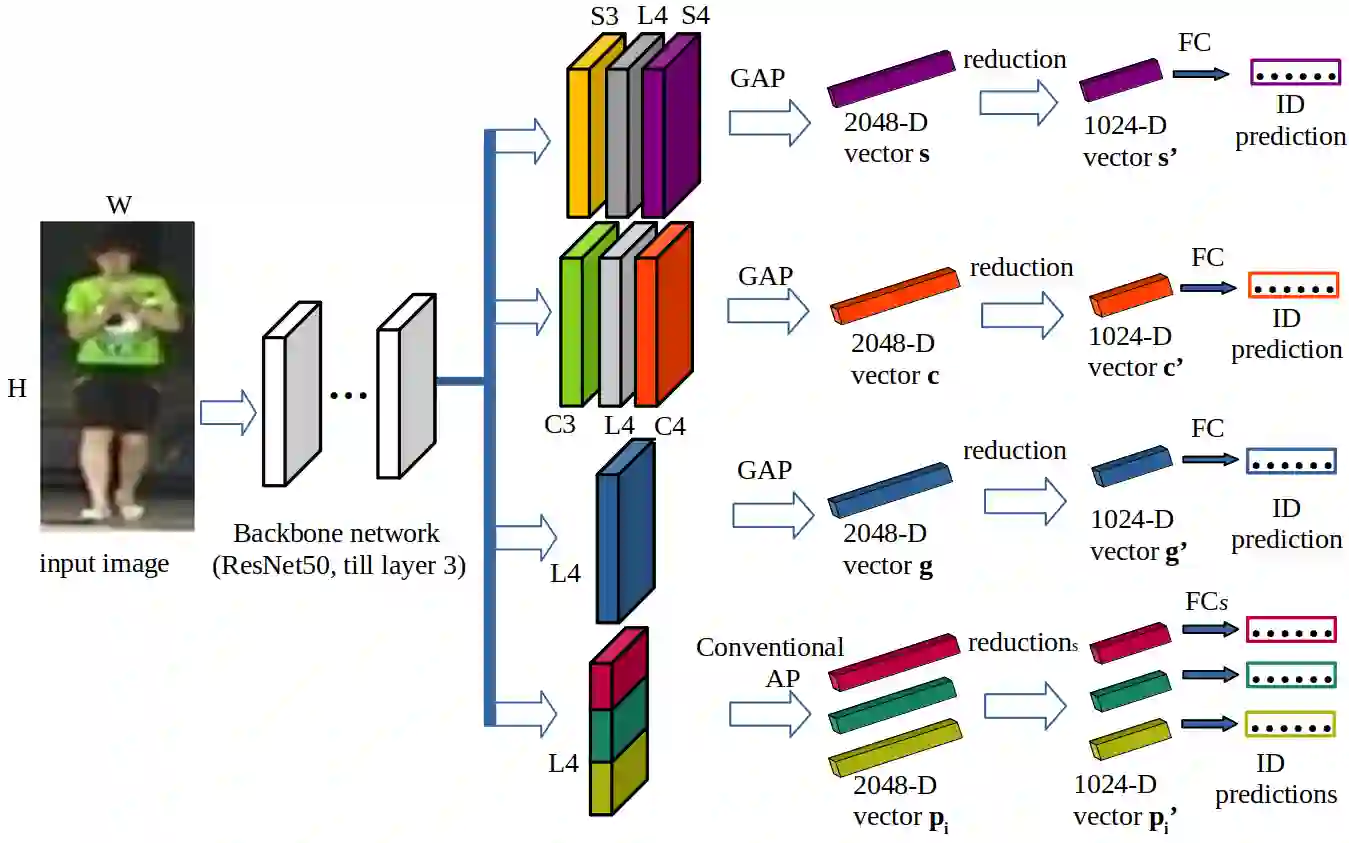

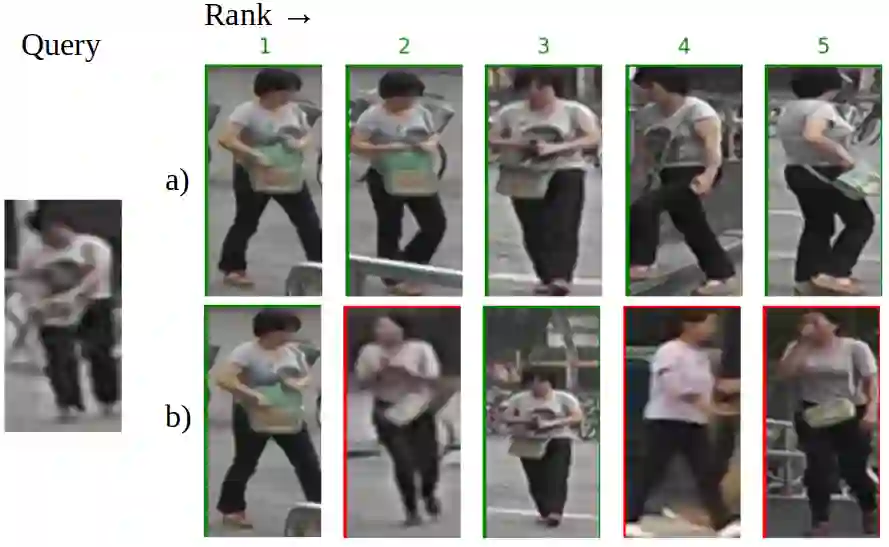

Learning representative, robust and discriminative information from images is essential for effective person re-identification (Re-Id). In this paper, we propose a compound approach for end-to-end discriminative deep feature learning for person Re-Id based on both body and hand images. We carefully design the Local-Aware Global Attention Network (LAGA-Net), a multi-branch deep network architecture consisting of one branch for spatial attention, one branch for channel attention, one branch for global feature representations and one branch for local feature representations. The attention branches focus on the relevant features of the image while suppressing irrelevant background. To overcome a weakness of attention mechanisms, namely their equivariance to pixel shuffling, we integrate relative positional encodings into the spatial attention module to capture the spatial positions of pixels. The global branch is intended to preserve the global context or structural information. For the local branch, which is intended to capture fine-grained information, we perform uniform partitioning to generate horizontal stripes on the convolutional layer. We retrieve the parts by conducting a soft partition, without explicitly partitioning the images or requiring external cues such as pose estimation. A set of ablation studies shows that each component contributes to the increased performance of LAGA-Net. Extensive evaluations on four popular body-based person Re-Id benchmarks and two publicly available hand datasets demonstrate that our proposed method consistently outperforms existing state-of-the-art methods.
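The uniform horizontal partitioning used by the local branch can be illustrated with a minimal sketch. This is not the LAGA-Net implementation: the function name `horizontal_stripe_pool`, the nested-list feature map, the choice of average pooling per stripe, and the toy input are all assumptions made for illustration. A real model would do this with a tensor library.

```python
from typing import List

# Hypothetical toy representation: feature_map[channel][row][column].
FeatureMap = List[List[List[float]]]


def horizontal_stripe_pool(feature_map: FeatureMap, num_stripes: int) -> List[List[float]]:
    """Split a C x H x W feature map into equal-height horizontal stripes
    and average-pool each stripe into one C-dimensional vector.

    Average pooling is an assumption; the abstract only states that
    stripes are generated uniformly on the convolutional layer.
    """
    height = len(feature_map[0])
    if num_stripes <= 0 or height % num_stripes != 0:
        raise ValueError("height must be divisible by num_stripes")
    rows_per_stripe = height // num_stripes

    stripes = []
    for s in range(num_stripes):
        top = s * rows_per_stripe
        bottom = top + rows_per_stripe
        vector = []
        for channel in feature_map:
            # Flatten the rows belonging to this stripe and average them.
            values = [v for row in channel[top:bottom] for v in row]
            vector.append(sum(values) / len(values))
        stripes.append(vector)
    return stripes


# Toy input: 2 channels, 4 rows, 3 columns.
toy = [
    [[float(r)] * 3 for r in range(4)],       # channel 0: each row holds its row index
    [[float(10 * r)] * 3 for r in range(4)],  # channel 1: each row holds 10 * row index
]
parts = horizontal_stripe_pool(toy, num_stripes=2)
print(parts)  # [[0.5, 5.0], [2.5, 25.0]]
```

Each returned vector is one part-level descriptor. Because the split happens on the feature map rather than on the input image, no pose estimator or explicit image cropping is involved, which matches the motivation given for the local branch.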
