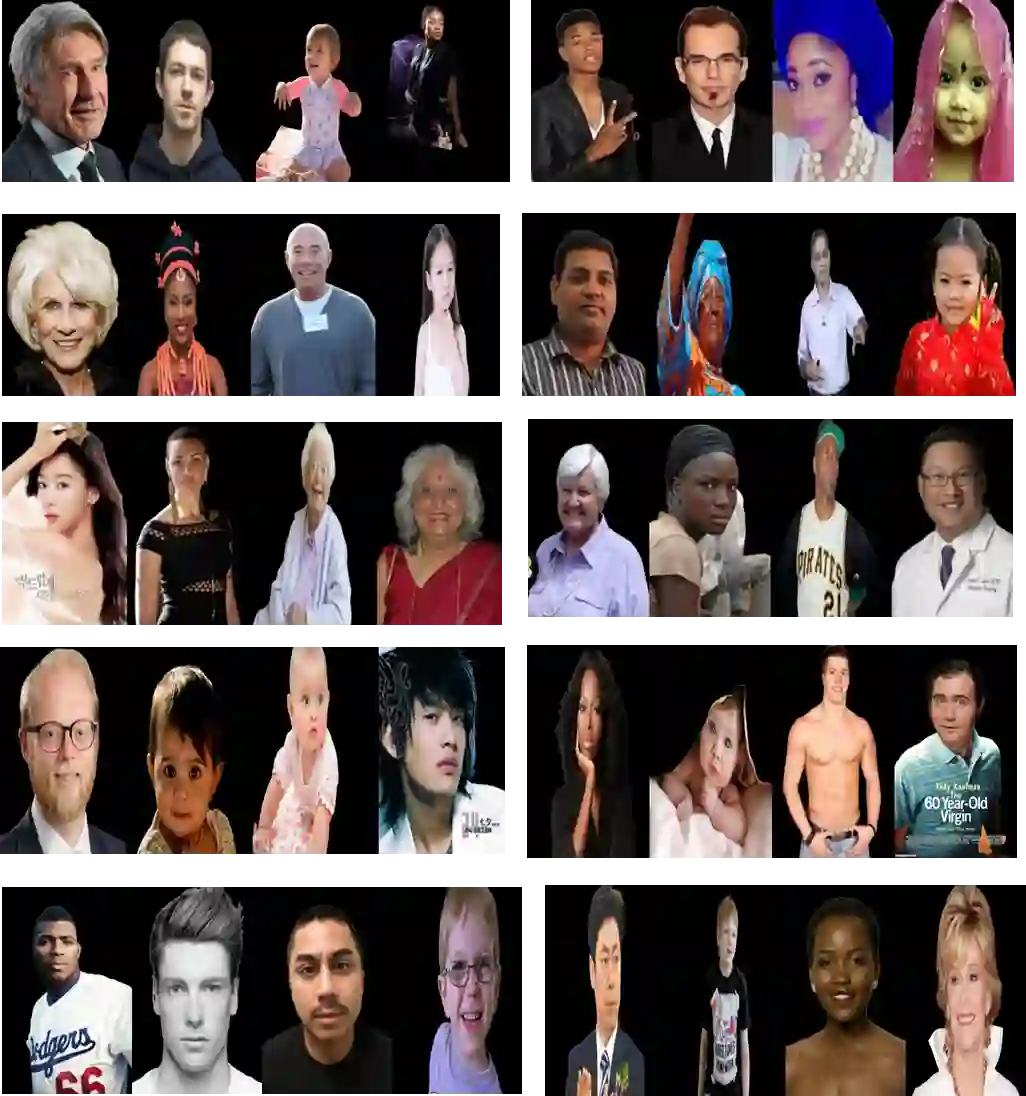

Technologies for recognizing facial attributes like race, gender, age, and emotion have several applications, such as surveillance, advertising content, sentiment analysis, and the study of demographic trends and social behaviors. Analyzing demographic characteristics based on images and analyzing facial expressions have several challenges due to the complexity of humans' facial attributes. Traditional approaches have employed CNNs and various other deep learning techniques, trained on extensive collections of labeled images. While these methods demonstrated effective performance, there remains potential for further enhancements. In this paper, we propose to utilize vision language models (VLMs) such as generative pre-trained transformer (GPT), GEMINI, large language and vision assistant (LLAVA), PaliGemma, and Microsoft Florence2 to recognize facial attributes such as race, gender, age, and emotion from images with human faces. Various datasets like FairFace, AffectNet, and UTKFace have been utilized to evaluate the solutions. The results show that VLMs are competitive if not superior to traditional techniques. Additionally, we propose "FaceScanPaliGemma"--a fine-tuned PaliGemma model--for race, gender, age, and emotion recognition. The results show an accuracy of 81.1%, 95.8%, 80%, and 59.4% for race, gender, age group, and emotion classification, respectively, outperforming pre-trained version of PaliGemma, other VLMs, and SotA methods. Finally, we propose "FaceScanGPT", which is a GPT-4o model to recognize the above attributes when several individuals are present in the image using a prompt engineered for a person with specific facial and/or physical attributes. The results underscore the superior multitasking capability of FaceScanGPT to detect the individual's attributes like hair cut, clothing color, postures, etc., using only a prompt to drive the detection and recognition tasks.

翻译:识别种族、性别、年龄和情绪等面部属性的技术在多个领域具有应用价值,例如监控、广告内容、情感分析以及人口趋势和社会行为研究。基于图像分析人口特征和面部表情面临着诸多挑战,这主要源于人类面部属性的复杂性。传统方法通常采用卷积神经网络(CNN)及其他多种深度学习技术,并在大量标注图像数据集上进行训练。尽管这些方法已展现出良好的性能,但仍存在进一步改进的空间。本文提出利用视觉语言模型(如生成式预训练Transformer(GPT)、GEMINI、大型语言视觉助手(LLAVA)、PaliGemma和Microsoft Florence2)从包含人脸的图像中识别种族、性别、年龄和情绪等面部属性。研究使用了FairFace、AffectNet和UTKFace等多个数据集对解决方案进行评估。结果表明,视觉语言模型的性能与传统技术相当甚至更优。此外,我们提出了“FaceScanPaliGemma”——一个针对种族、性别、年龄和情绪识别任务进行微调的PaliGemma模型。该模型在种族、性别、年龄组和情绪分类任务上分别达到了81.1%、95.8%、80%和59.4%的准确率,其性能优于预训练版本的PaliGemma、其他视觉语言模型以及现有最优方法。最后,我们提出了“FaceScanGPT”,这是一个基于GPT-4o的模型,能够通过针对特定面部及/或身体属性设计的提示词,在图像中存在多个个体时识别上述属性。结果凸显了FaceScanGPT卓越的多任务处理能力,仅通过提示词即可驱动检测与识别任务,准确识别个体的发型、服装颜色、姿态等属性。