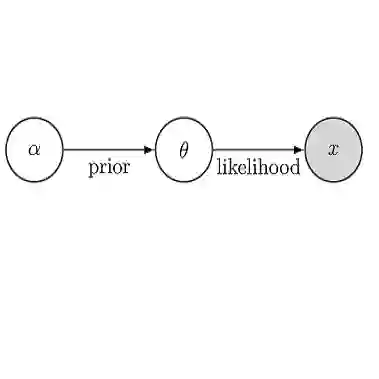

Since the turn of the century, approximate Bayesian inference has steadily evolved as new computational techniques have been incorporated to handle increasingly complex and large-scale predictive problems. The recent success of deep neural networks and foundation models has now given rise to a new paradigm in statistical modeling, in which Bayesian inference can be amortized through large-scale learned predictors. In amortized inference, substantial computation is invested upfront to train a neural network that can subsequently produce approximate posteriors or predictions at negligible marginal cost across a wide range of tasks. At deployment, amortized inference offers substantial computational savings over traditional Bayesian procedures, which generally require repeated likelihood evaluations or Monte Carlo simulation to produce predictions for each new dataset. Despite the growing popularity of amortized inference, its statistical interpretation and its role within Bayesian inference remain poorly understood. This paper presents statistical perspectives on the working principles of several major neural architectures, including feedforward networks, Deep Sets, and Transformers, and examines how these architectures naturally support amortized Bayesian inference. We discuss how these models perform structured approximation and probabilistic reasoning in ways that yield controlled generalization error across a wide range of deployment scenarios, and how these properties can be harnessed for Bayesian computation. Through simulation studies, we evaluate the accuracy, robustness, and uncertainty quantification of amortized inference under varying signal-to-noise ratios and distributional shifts, highlighting both its strengths and its limitations.
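The train-once, reuse-everywhere idea can be illustrated with a minimal sketch. It is not the paper's method: it assumes a toy conjugate model (prior θ ~ N(0, 1), observations x_i | θ ~ N(θ, σ²)) and replaces the neural network with a linear predictor fitted by least squares on a permutation-invariant summary, the sample mean, in the spirit of Deep Sets pooling. All function names are hypothetical. Because the squared-error minimizer is the posterior mean, which is linear in the sample mean for this model, the fitted predictor can be checked against the closed-form answer.

```python
import numpy as np

def simulate(num_tasks, n_obs, sigma, rng):
    """Draw (theta, dataset) pairs from the assumed toy model."""
    theta = rng.normal(0.0, 1.0, size=num_tasks)            # prior: N(0, 1)
    x = theta[:, None] + sigma * rng.normal(size=(num_tasks, n_obs))
    return theta, x

def train_amortized(num_tasks=50_000, n_obs=10, sigma=1.0, seed=0):
    """Upfront cost: fit a predictor of theta from a pooled summary of x."""
    rng = np.random.default_rng(seed)
    theta, x = simulate(num_tasks, n_obs, sigma, rng)
    # Mean pooling makes the predictor invariant to the order of observations.
    features = np.column_stack([np.ones(num_tasks), x.mean(axis=1)])
    # Least squares targets E[theta | x], i.e. the posterior mean.
    coef, *_ = np.linalg.lstsq(features, theta, rcond=None)
    return coef

def amortized_posterior_mean(coef, x_new):
    """Deployment: one cheap evaluation per new dataset, no refitting."""
    return coef[0] + coef[1] * np.mean(x_new)

def exact_posterior_mean(x_new, sigma=1.0):
    """Closed-form posterior mean under the same conjugate model."""
    n = len(x_new)
    return n * np.mean(x_new) / (n + sigma**2)

coef = train_amortized()
x_new = np.random.default_rng(1).normal(0.7, 1.0, size=10)
print(amortized_posterior_mean(coef, x_new), exact_posterior_mean(x_new))
```

In this sketch the fitted predictor is only valid for the sample size and noise level it was trained on; applying it to datasets generated under a different σ is a simple way to see the kind of distributional-shift sensitivity the abstract refers to.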
