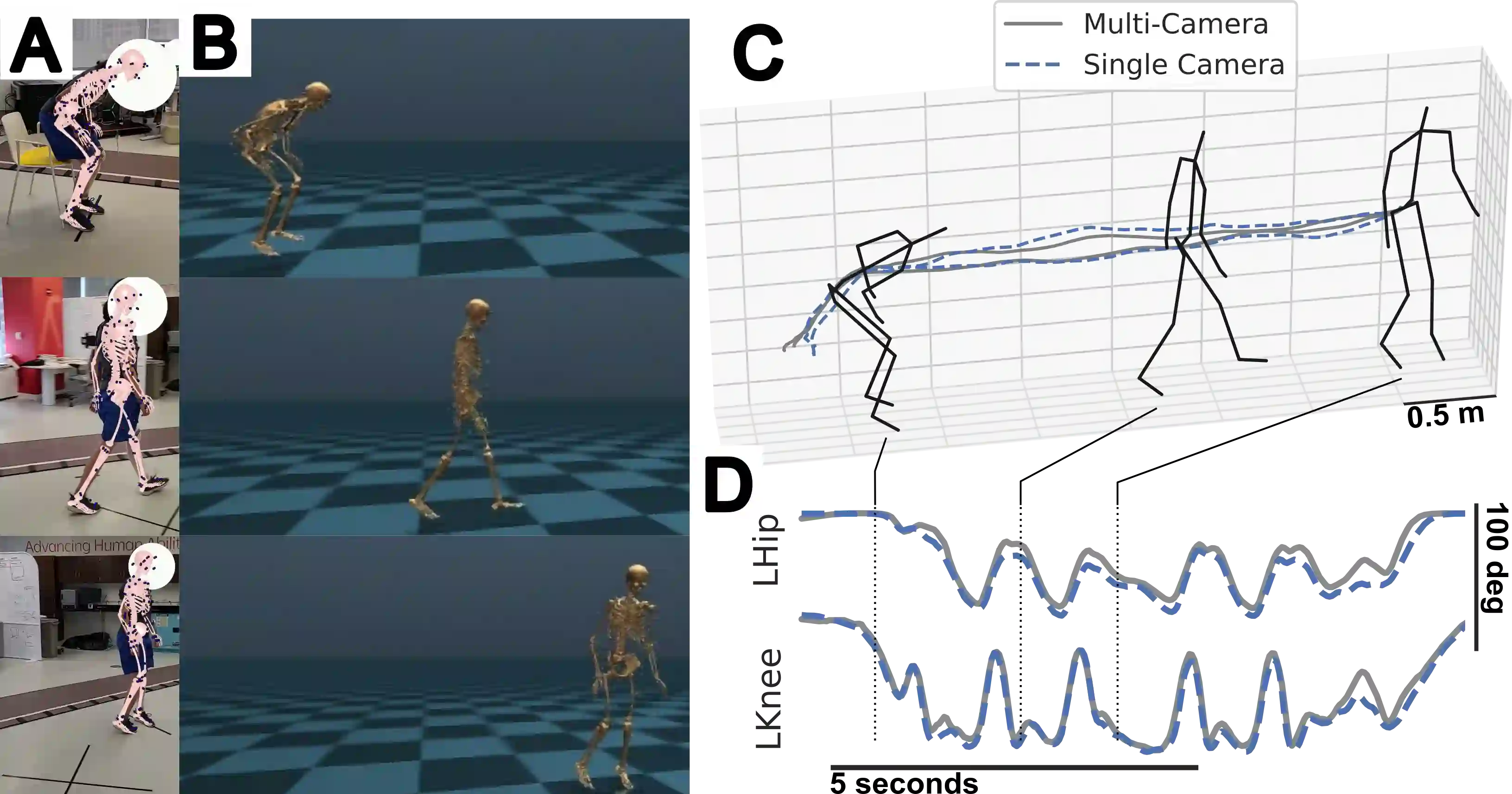

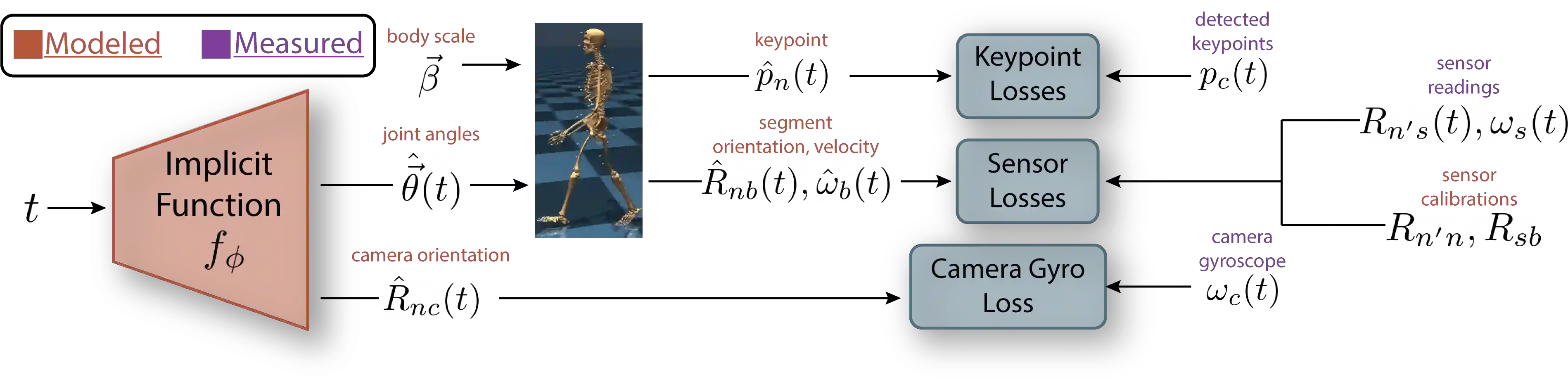

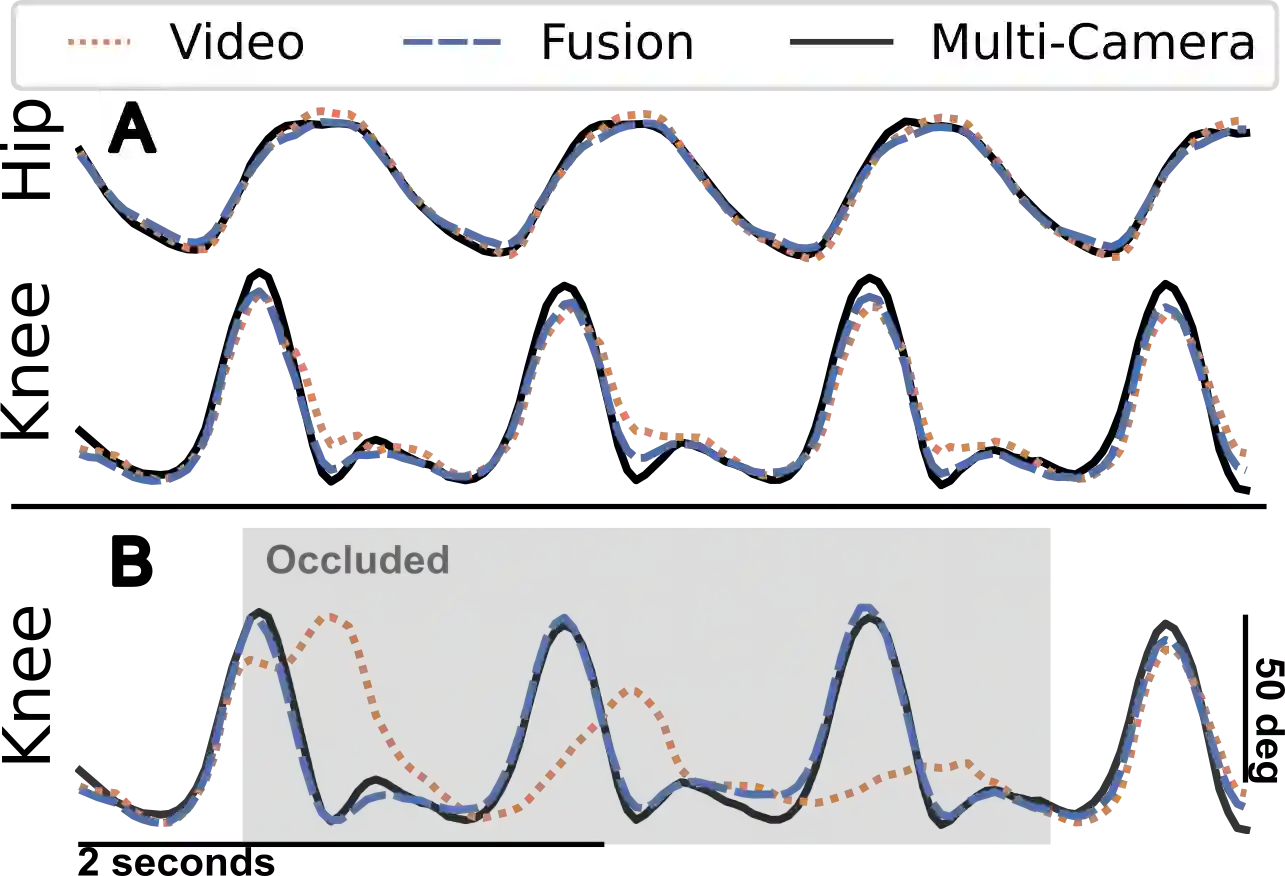

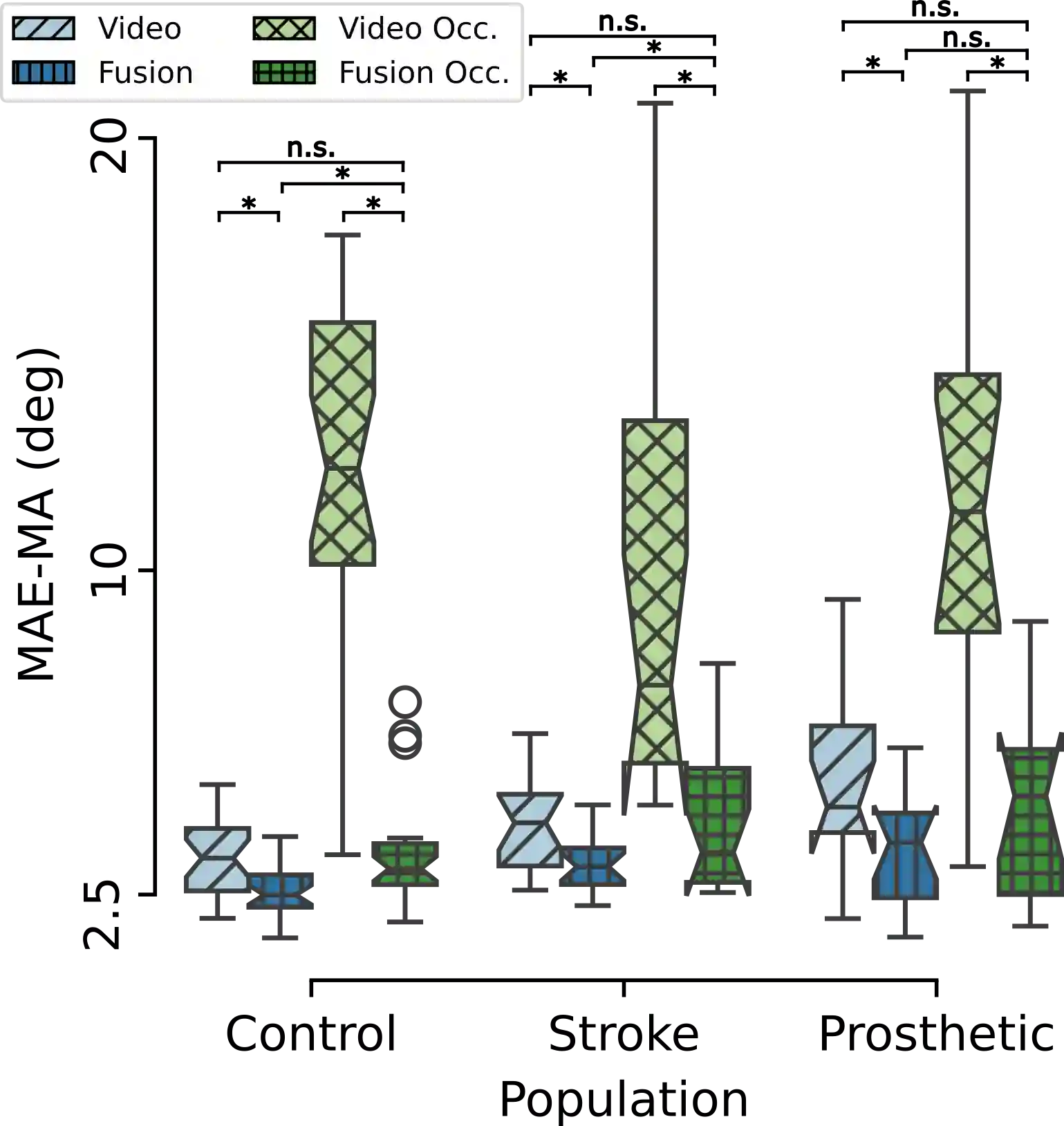

Video and wearable sensor data provide complementary information about human movement. Video offers a holistic view of the entire body moving through the world, while wearable sensors provide high-resolution measurements of specific body segments. A robust method that fuses these modalities to obtain biomechanically accurate kinematics would have substantial utility for clinical assessment and monitoring. Although multiple video-sensor fusion methods exist, most assume that a time-intensive, and often brittle, sensor-to-body calibration has already been performed. In this work, we present a method that combines handheld smartphone video with uncalibrated wearable sensor data at their full temporal resolution. Our monocular, video-only biomechanical reconstruction already performs well, with only several degrees of knee-angle error during walking relative to markerless motion capture. Reconstructing from fused video and wearable sensor data further reduces this error. We validate this in a mixed cohort of people without gait impairments, lower-limb prosthesis users, and individuals with a history of stroke. We also show that the sensor data enable tracking through periods of visual occlusion.
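The abstract reports knee error "in degrees" against markerless motion capture but does not state the exact metric. The sketch below assumes two knee-flexion trajectories that are already time-aligned and sampled at the same instants. It computes two common summaries, mean absolute error and root-mean-square error, and is not necessarily the metric used in this work. The function name `joint_angle_errors` and the sample values are hypothetical and chosen only for illustration.

```python
import math
from typing import Sequence


def joint_angle_errors(
    estimate_deg: Sequence[float], reference_deg: Sequence[float]
) -> tuple[float, float]:
    """Hypothetical helper: compare two time-aligned joint-angle trajectories.

    Assumes both sequences are sampled at the same time points (in degrees).
    Returns (mean absolute error, root-mean-square error) in degrees.
    """
    if len(estimate_deg) != len(reference_deg) or not estimate_deg:
        raise ValueError("trajectories must be non-empty and the same length")

    # Per-sample signed error between the estimate and the reference.
    diffs = [e - r for e, r in zip(estimate_deg, reference_deg)]

    mae = sum(abs(d) for d in diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mae, rmse


# Illustrative (made-up) knee flexion angles over part of a gait cycle.
reference = [5.0, 20.0, 45.0, 60.0, 30.0, 10.0]
estimate = [7.0, 18.0, 48.0, 57.0, 31.0, 12.0]
mae, rmse = joint_angle_errors(estimate, reference)
print(f"MAE = {mae:.2f} deg, RMSE = {rmse:.2f} deg")
```

RMSE weights occasional large deviations more heavily than MAE, so reporting both can help distinguish a consistently small offset from brief tracking failures, such as those that might occur during visual occlusion.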
