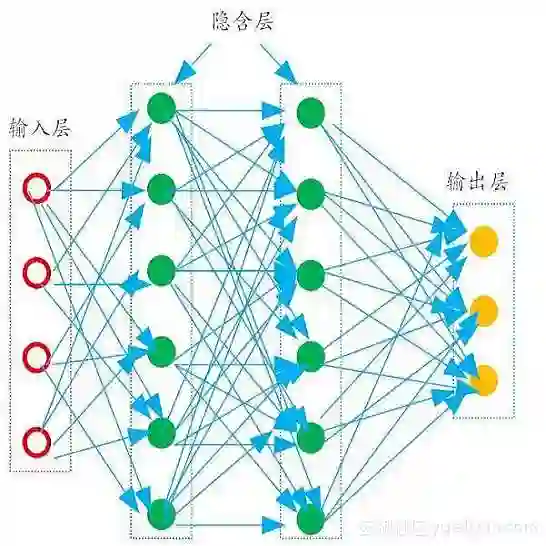

The remarkable capabilities of modern large language models are rooted in the vast repositories of knowledge encoded in their parameters, which enable them to perceive the world and engage in reasoning. How these models store knowledge internally has long been a subject of intense interest among researchers. To date, most studies have concentrated on isolated components within these models, such as multilayer perceptrons (MLPs) and attention heads. In this paper, we delve into the computation graph of the language model to uncover the knowledge circuits that are instrumental in articulating specific knowledge. Our experiments with GPT2 and TinyLLAMA show how certain information heads, relation heads, and MLPs collaboratively encode knowledge within the model. Moreover, we evaluate the impact of current knowledge editing techniques on these knowledge circuits, providing deeper insight into how these editing methods function and where they are constrained. Finally, we use knowledge circuits to analyze and interpret language model behaviors such as hallucination and in-context learning. We believe knowledge circuits hold potential for advancing our understanding of Transformers and for guiding the improved design of knowledge editing. Code and data are available at https://github.com/zjunlp/KnowledgeCircuits.
