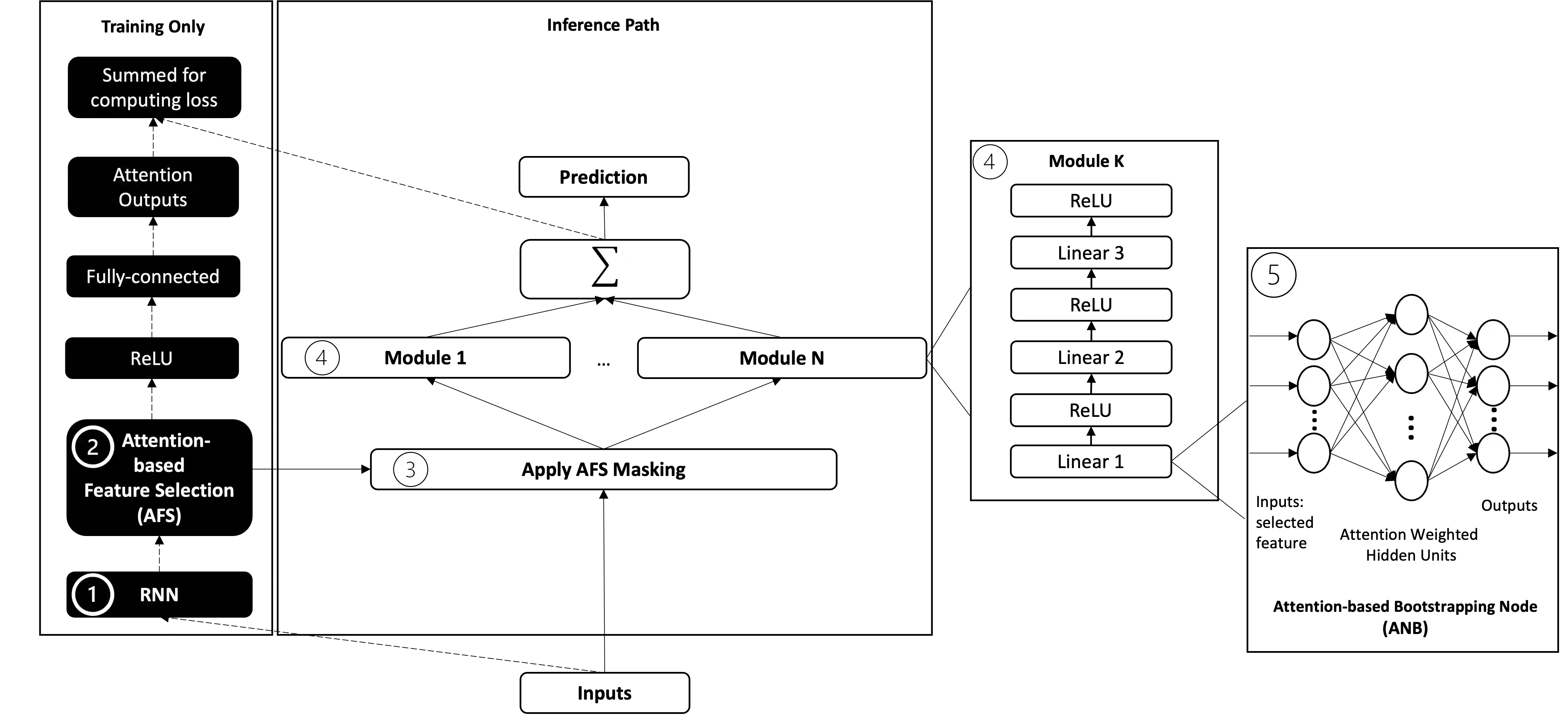

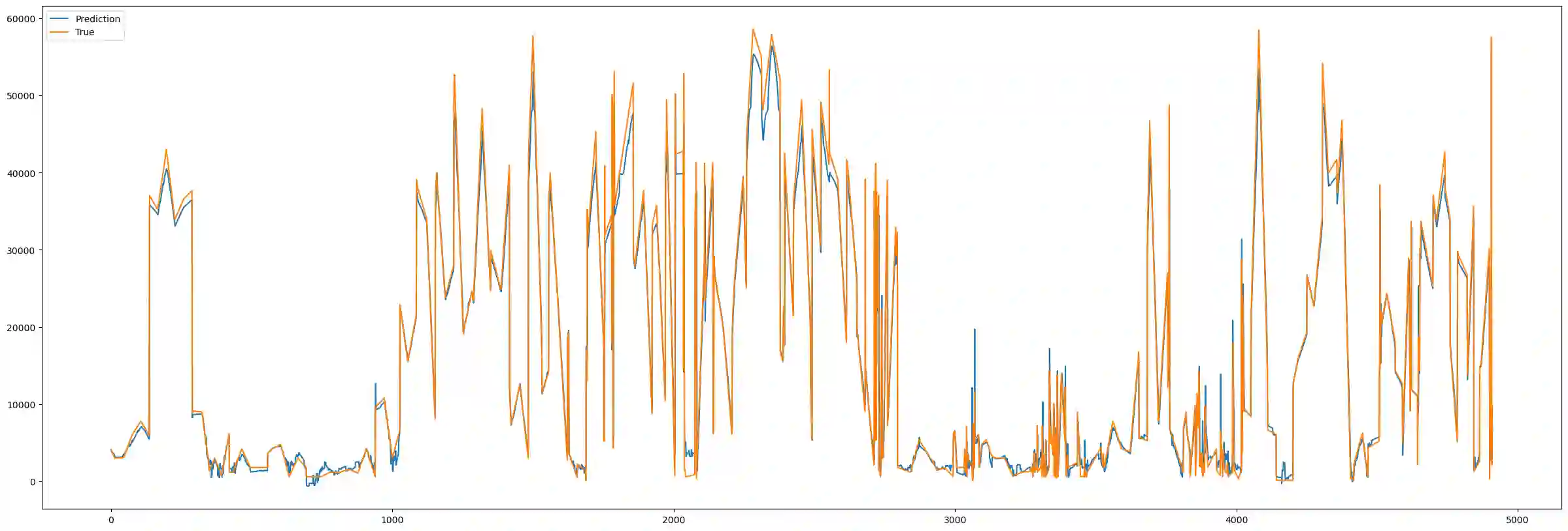

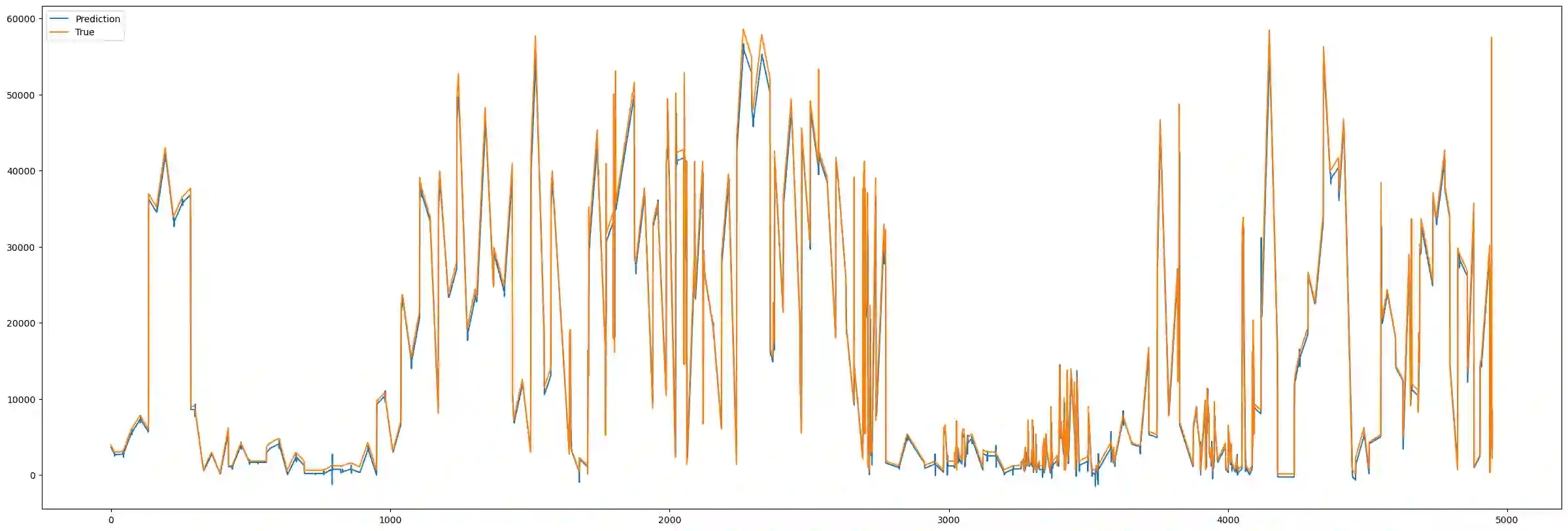

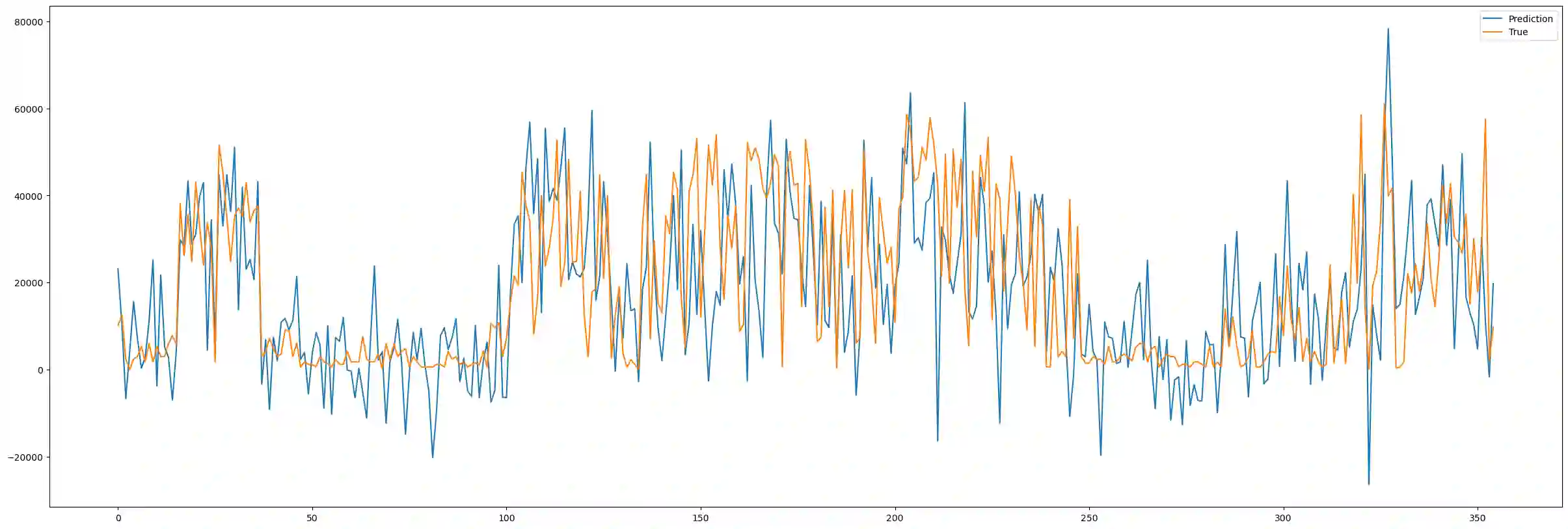

Multivariate time series arise in many applications, from healthcare and meteorology to the life sciences. Although deep learning models have shown excellent predictive performance on time series, they have been criticised as non-interpretable "black boxes". This paper proposes a novel modular neural network model for multivariate time series prediction that is interpretable by construction. A recurrent neural network learns the temporal dependencies in the data, while an attention-based feature selection component selects the most relevant features and suppresses redundant ones during the learning of those dependencies. A modular deep network is then trained on the selected features independently to show users how features influence outcomes, making the model interpretable. Experimental results show that this approach can outperform the state-of-the-art interpretable Neural Additive Models (NAM) and variations thereof on both regression and classification tasks for time series, while achieving predictive performance comparable to that of the top non-interpretable methods for time series, LSTM and XGBoost.
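To make the three components named above more concrete, the following is a minimal NumPy sketch of a forward pass, written under stated assumptions rather than taken from the paper. The class name `SketchModel`, the plain tanh recurrence, the softmax attention over features, and the additive combination of per-feature modules are all illustrative choices; the abstract does not specify the layer types, sizes, or how the components are wired together.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()


class SketchModel:
    """Hypothetical illustration only; NOT the architecture from the paper.

    Assumed wiring: a tanh RNN summarises the series, an attention head
    turns that summary into one weight per feature, and each feature has
    its own small network that sees only that feature's values.
    """

    def __init__(self, n_features, n_steps, hidden=8, module_hidden=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 0.1
        # Recurrent encoder parameters (assumed plain tanh RNN).
        self.W_xh = rng.normal(0, scale, (hidden, n_features))
        self.W_hh = rng.normal(0, scale, (hidden, hidden))
        self.b_h = np.zeros(hidden)
        # Attention head: maps the final hidden state to feature scores.
        self.W_a = rng.normal(0, scale, (n_features, hidden))
        # One tiny two-layer module per feature (weights stacked on axis 0).
        self.W1 = rng.normal(0, scale, (n_features, module_hidden, n_steps))
        self.b1 = np.zeros((n_features, module_hidden))
        self.w2 = rng.normal(0, scale, (n_features, module_hidden))
        self.bias = 0.0

    def forward(self, x):
        """x has shape (n_steps, n_features); returns (y, alpha, contributions)."""
        # 1. Recurrent pass over time to capture temporal dependencies.
        h = np.zeros(self.W_hh.shape[0])
        for x_t in x:
            h = np.tanh(self.W_xh @ x_t + self.W_hh @ h + self.b_h)
        # 2. Attention weights over features (non-negative, sum to 1).
        alpha = softmax(self.W_a @ h)
        # 3. Module f is applied to column f of x only.
        hidden = np.tanh(np.einsum("fhs,sf->fh", self.W1, x) + self.b1)
        raw = (hidden * self.w2).sum(axis=1)
        # 4. Additive output: each term is one feature's contribution.
        contributions = alpha * raw
        return contributions.sum() + self.bias, alpha, contributions


model = SketchModel(n_features=3, n_steps=5)
x = np.random.default_rng(1).normal(size=(5, 3))
y, alpha, contributions = model.forward(x)
```

In this sketch the prediction `y` is the sum of `contributions` plus a bias, so inspecting `alpha` and `contributions` shows how much each feature was selected and how it moved the output. Training, and the paper's actual selection and module designs, are left out.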
