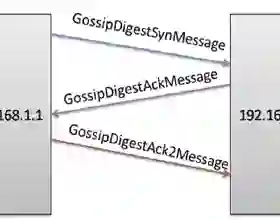

Decentralized Gradient Descent (D-GD) allows a set of users to perform collaborative learning without sharing their data by iteratively averaging local model updates with their neighbors in a network graph. The absence of direct communication between non-neighbor nodes might lead to the belief that users cannot infer precise information about the data of others. In this work, we demonstrate the opposite by proposing the first attack against D-GD that enables a user (or set of users) to reconstruct the private data of other users outside their immediate neighborhood. Our approach is based on a reconstruction attack against the gossip averaging protocol, which we then extend to handle the additional challenges raised by D-GD. We validate the effectiveness of our attack on real graphs and datasets, showing that the number of users compromised by a single attacker or a handful of attackers is often surprisingly large. We empirically investigate some of the factors that affect the performance of the attack, namely the graph topology, the number of attackers, and their position in the graph.
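To make the gossip averaging protocol mentioned above concrete, here is a minimal sketch in plain Python. It illustrates only the averaging step, not the reconstruction attack. The Metropolis weighting rule, the 4-node path graph, the scalar private values, and all function names are assumptions chosen for illustration; the abstract does not specify them.

```python
def metropolis_weights(neighbors):
    """Build a symmetric, doubly stochastic gossip matrix from adjacency lists.

    Assumption: Metropolis weights, a common choice for gossip averaging;
    the abstract does not say which weights are used.
    """
    n = len(neighbors)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in neighbors[i]:
            W[i][j] = 1.0 / (1 + max(len(neighbors[i]), len(neighbors[j])))
        # Self-weight makes each row sum to 1 (diagonal is still 0 here).
        W[i][i] = 1.0 - sum(W[i])
    return W


def gossip_step(W, values):
    """One synchronous round: each node takes a weighted average of itself and its neighbors."""
    n = len(values)
    return [sum(W[i][j] * values[j] for j in range(n)) for i in range(n)]


def gossip_average(neighbors, values, rounds):
    """Run several gossip rounds and return the full history of node values."""
    W = metropolis_weights(neighbors)
    history = [list(values)]
    for _ in range(rounds):
        values = gossip_step(W, values)
        history.append(values)
    return history


# Hypothetical example: path graph 0 - 1 - 2 - 3, one private scalar per node.
neighbors = [[1], [0, 2], [1, 3], [2]]
private_values = [4.0, 0.0, 8.0, 0.0]

history = gossip_average(neighbors, private_values, rounds=200)
final = history[-1]
print(final)  # every entry approaches the global mean of the private values
```

In this sketch, node 0 only ever exchanges values with node 1, yet the sequence it observes depends on the private values of nodes 2 and 3 through the repeated averaging. This indirect dependence is the kind of signal the abstract says an attacker can exploit.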
