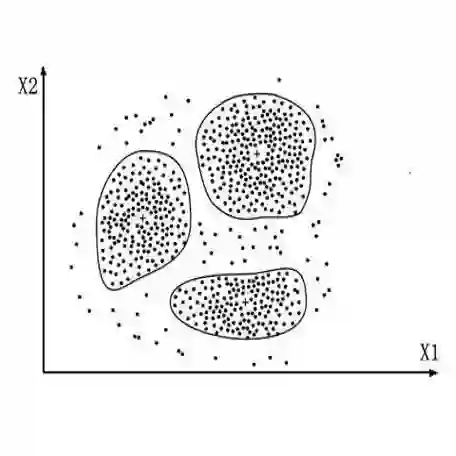

Contrastive learning has gained significant attention in short text clustering, yet it has an inherent drawback: it mistakenly treats samples from the same category as negatives and separates them in the feature space (false negative separation), which hinders the learning of high-quality representations. To generate more discriminative representations for efficient clustering, we propose a novel short text clustering method, Discriminative Representation Learning via **A**ttention-**E**nhanced **C**ontrastive **L**earning for Short Text Clustering (**AECL**). **AECL** consists of two modules: a pseudo-label generation module and a contrastive learning module. Both modules build a sample-level attention mechanism to capture similarity relationships between samples and aggregate cross-sample features into consistent representations. The pseudo-label generation module then uses the more discriminative consistent representations to produce reliable supervision information that assists clustering, while the contrastive learning module exploits the similarity relationships and consistent representations to optimize the construction of positive samples and perform similarity-guided contrastive learning, effectively addressing the false negative separation issue. Experimental results demonstrate that the proposed **AECL** outperforms state-of-the-art methods. If the paper is accepted, we will open-source the code.
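
To make the idea of sample-level attention more concrete, the following is a minimal sketch, not the authors' implementation. It assumes that attention is computed across the samples of a batch (rather than across tokens), that queries, keys, and values are the raw features without learned projections, and that positives are chosen by thresholding the attention weights. The function names `sample_level_attention` and `similarity_guided_positives`, the `temperature` and `threshold` parameters, and the toy batch are all hypothetical and introduced only for illustration.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def sample_level_attention(features, temperature=1.0):
    """Attend across the samples of a batch.

    features: list of n vectors, each a list of d floats.
    Returns (attn, consistent): the n x n attention weights and the
    n x d aggregated representations.
    Assumption: queries, keys and values are the raw features; a trained
    model would normally apply learned projections first.
    """
    d = len(features[0])
    scale = math.sqrt(d) * temperature
    attn = []
    for q in features:
        # Scaled dot-product similarity between this sample and every sample.
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in features]
        attn.append(softmax(scores))
    # Each output row is a weighted average of all sample features.
    consistent = [
        [sum(w * v[j] for w, v in zip(weights, features)) for j in range(d)]
        for weights in attn
    ]
    return attn, consistent


def similarity_guided_positives(attn, threshold):
    """Mark pairs (i, j), i != j, whose attention weight exceeds threshold.

    Assumption: thresholding is a stand-in for whatever positive-sample
    construction rule the method actually uses.
    """
    n = len(attn)
    return [[i != j and attn[i][j] > threshold for j in range(n)]
            for i in range(n)]


# Toy batch: samples 0 and 1 are similar, as are samples 2 and 3.
batch = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
attn, consistent = sample_level_attention(batch)
positives = similarity_guided_positives(attn, threshold=0.25)
print(positives[0])  # [False, True, False, False]
```

In this toy batch, sample 1 receives a high attention weight from sample 0 and is therefore treated as a positive rather than a negative, which is the intuition behind avoiding false negative separation. A contrastive loss could then pull such pairs together instead of pushing them apart.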
