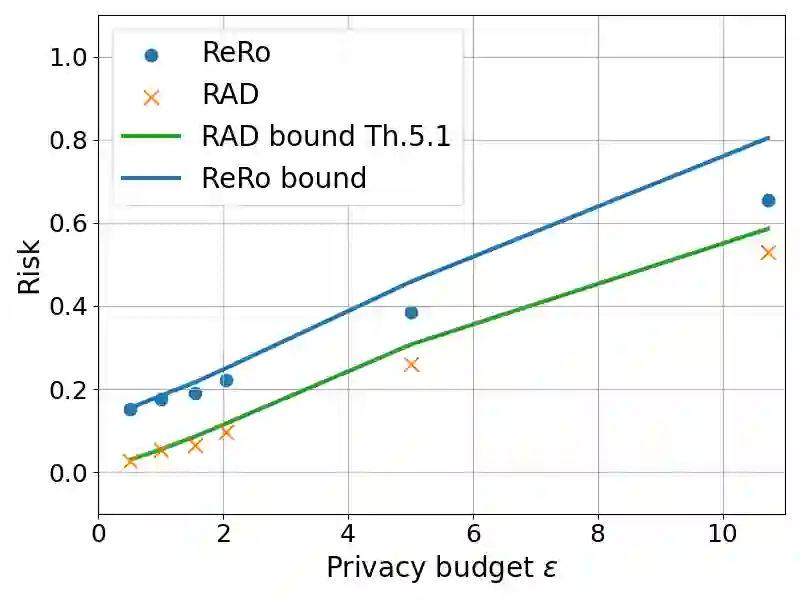

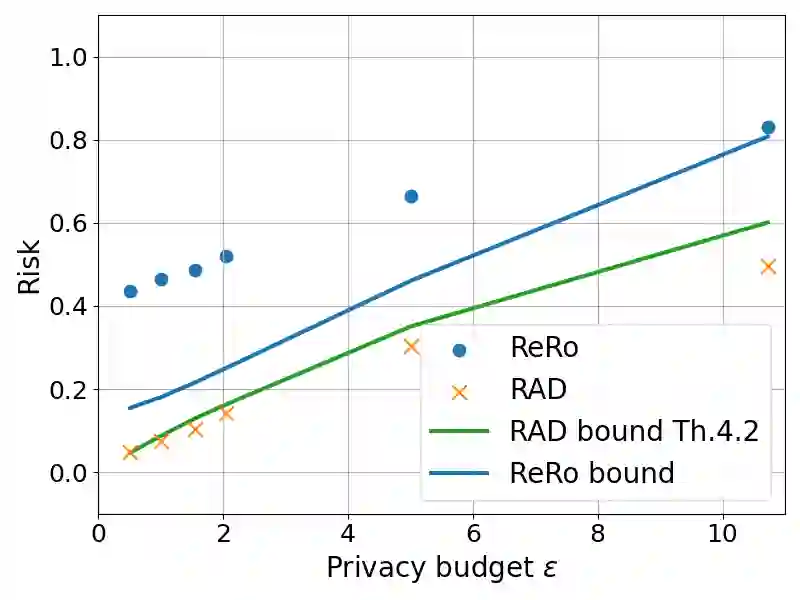

Differential Privacy (DP) is widely adopted in data management systems to enable data sharing with formal disclosure guarantees. A central systems challenge is understanding how DP noise translates into effective protection against inference attacks, since this directly determines achievable utility. Most existing analyses either focus solely on membership inference, which captures only a single threat, or rely on reconstruction robustness (ReRo). However, we show that under realistic assumptions ReRo can yield misleading risk estimates and violate its claimed bounds, limiting its usefulness for principled DP calibration and auditing. This paper introduces reconstruction advantage, a unified risk metric that consistently captures risk across membership inference, attribute inference, and data reconstruction. We derive tight bounds that relate DP noise to adversarial advantage and characterize optimal adversarial strategies for arbitrary DP mechanisms and attacker knowledge. These results enable risk-driven noise calibration and provide a foundation for systematic DP auditing. We show that reconstruction advantage improves the accuracy and scope of DP auditing and enables more effective utility-privacy trade-offs in DP-enabled data management systems.
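To make the idea of risk-driven noise calibration concrete, the following is a minimal Python sketch. It does not implement the reconstruction advantage metric or the bounds derived in this paper, which the abstract does not state. Instead it assumes the standard hypothesis-testing bound on membership-inference advantage (true positive rate minus false positive rate) under (ε, δ)-DP, namely (e^ε − 1 + 2δ)/(e^ε + 1), and inverts it for pure DP to pick ε from a target advantage. The function names and the choice of the Laplace mechanism are illustrative assumptions.

```python
import math


def mi_advantage_bound(epsilon: float, delta: float = 0.0) -> float:
    """Standard upper bound on membership-inference advantage (TPR - FPR)
    for any attack against an (epsilon, delta)-DP mechanism.

    Assumption: this is the classical hypothesis-testing bound, used here
    as a stand-in; it is not the paper's reconstruction advantage bound.
    """
    if epsilon < 0.0 or not 0.0 <= delta <= 1.0:
        raise ValueError("require epsilon >= 0 and 0 <= delta <= 1")
    bound = (math.exp(epsilon) - 1.0 + 2.0 * delta) / (math.exp(epsilon) + 1.0)
    return min(1.0, bound)


def epsilon_for_advantage(target: float) -> float:
    """Largest pure-DP epsilon (delta = 0) whose advantage bound is <= target.

    Obtained by solving (e^eps - 1) / (e^eps + 1) = target for eps.
    """
    if not 0.0 < target < 1.0:
        raise ValueError("target advantage must lie strictly between 0 and 1")
    return math.log((1.0 + target) / (1.0 - target))


def laplace_scale(sensitivity: float, epsilon: float) -> float:
    """Scale b of Laplace noise giving epsilon-DP for a query with the
    given L1 sensitivity (the textbook Laplace mechanism)."""
    if sensitivity <= 0.0 or epsilon <= 0.0:
        raise ValueError("sensitivity and epsilon must be positive")
    return sensitivity / epsilon


if __name__ == "__main__":
    # Hypothetical policy: cap the attacker's advantage at 10%.
    eps = epsilon_for_advantage(0.10)
    print(f"epsilon = {eps:.4f}")
    print(f"Laplace scale for a sensitivity-1 query = {laplace_scale(1.0, eps):.4f}")
```

The workflow runs from a risk target to a privacy parameter to a noise scale, rather than starting from an arbitrary ε. A tighter or broader risk bound, such as the one this paper proposes, would replace `mi_advantage_bound` and its inverse while leaving the rest of the calibration unchanged.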
