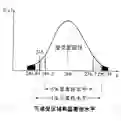

Absolute anonymization, conceived as an irreversible transformation that prevents re-identification and sensitive value disclosure, has proven to be a broken promise. Consequently, modern data protection must shift toward a privacy-utility trade-off grounded in risk mitigation. Differential Privacy (DP) offers a rigorous mathematical framework for balancing quantified disclosure risk with analytical usefulness. Nevertheless, widespread adoption remains limited, largely because effective translation of complex technical concepts, such as privacy-loss parameters, into forms meaningful to non-technical stakeholders has yet to be achieved. This difficulty arises from the inherent use of randomization: both legitimate analysts and potential adversaries must draw conclusions from uncertain observations rather than deterministic values. In this work, we propose a new interpretation of the privacy-utility trade-off based on hypothesis testing. This perspective explicitly accounts for the uncertainty introduced by randomized mechanisms in both membership inference scenarios and general data analysis. In particular, we introduce the concept of relative disclosure risk to quantify the maximum reduction in uncertainty an adversary can obtain from protected outputs, and we show that this measure is directly related to standard privacy-loss parameters. At the same time, we analyze how DP affects analytical validity by studying its impact on hypothesis tests commonly used to assess the statistical significance of empirical results. Finally, we provide practical guidance, accessible to non-experts, for navigating the privacy-utility trade-off, aiding in the selection of suitable protection mechanisms and the values for the privacy-loss parameters.
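As an illustration of the hypothesis-testing view, the sketch below computes two standard consequences of the DP guarantee. It does not reproduce the relative disclosure risk measure proposed in this work, whose definition is not given in the abstract. The function names `max_posterior` and `min_type2_error` are hypothetical and chosen only for this example. The first function assumes pure ε-DP and bounds an adversary's posterior belief that a target record is in the data. The second assumes (ε, δ)-DP and bounds the false-negative rate of any membership test with a given false-positive rate.

```python
import math


def max_posterior(prior: float, epsilon: float) -> float:
    """Upper bound on an adversary's posterior membership belief under pure epsilon-DP.

    Under epsilon-DP the likelihood ratio of any output is at most exp(epsilon),
    so posterior odds are at most exp(epsilon) times the prior odds.
    """
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must lie in [0, 1]")
    if epsilon < 0.0:
        raise ValueError("epsilon must be non-negative")
    ratio = math.exp(epsilon)
    return ratio * prior / (1.0 + (ratio - 1.0) * prior)


def min_type2_error(alpha: float, epsilon: float, delta: float = 0.0) -> float:
    """Lower bound on the false-negative rate of any membership test.

    For an (epsilon, delta)-DP mechanism, a test with false-positive rate alpha
    and false-negative rate beta must satisfy both
        alpha + exp(epsilon) * beta >= 1 - delta
        exp(epsilon) * alpha + beta >= 1 - delta
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if epsilon < 0.0 or not 0.0 <= delta <= 1.0:
        raise ValueError("need epsilon >= 0 and delta in [0, 1]")
    bound_a = math.exp(-epsilon) * (1.0 - delta - alpha)
    bound_b = 1.0 - delta - math.exp(epsilon) * alpha
    return max(0.0, bound_a, bound_b)


if __name__ == "__main__":
    # Illustrative values only; they are not taken from the paper.
    for eps in (0.1, 1.0, 3.0):
        print(eps, max_posterior(0.5, eps), min_type2_error(0.05, eps))
```

Read this way, a small ε keeps the adversary's posterior close to the prior and forces any membership test to miss most of the time, while a large ε lets both bounds become nearly vacuous.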
