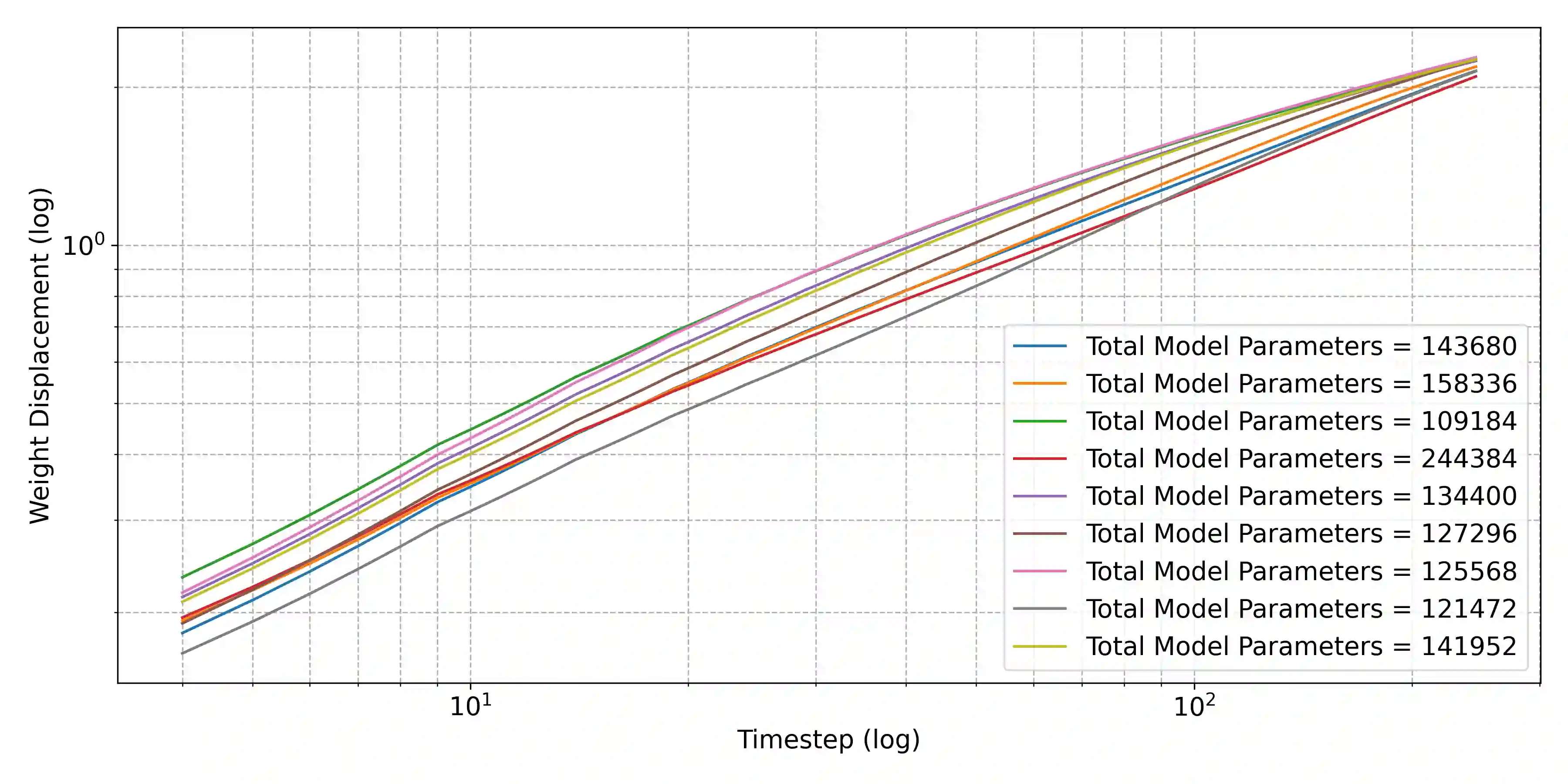

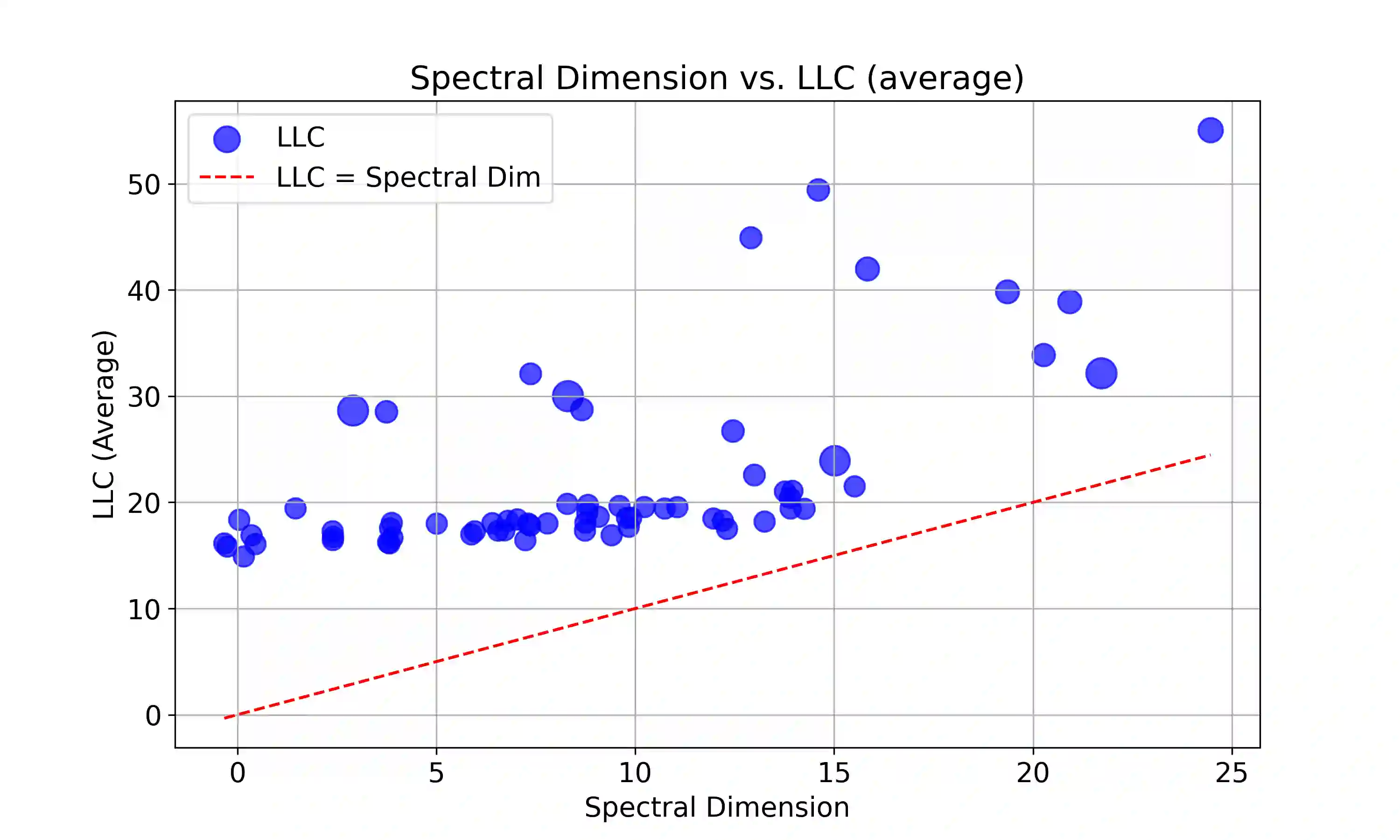

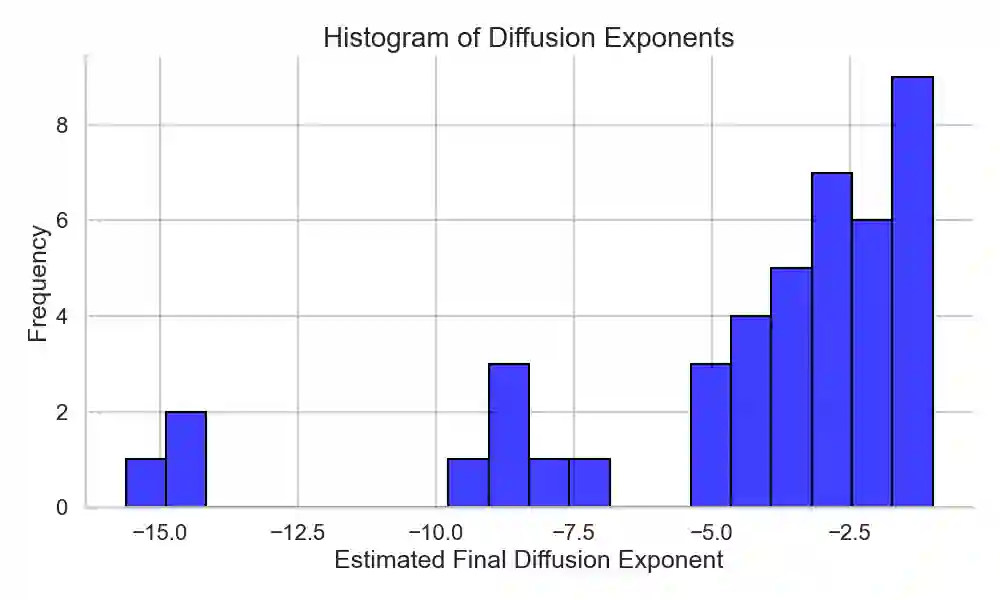

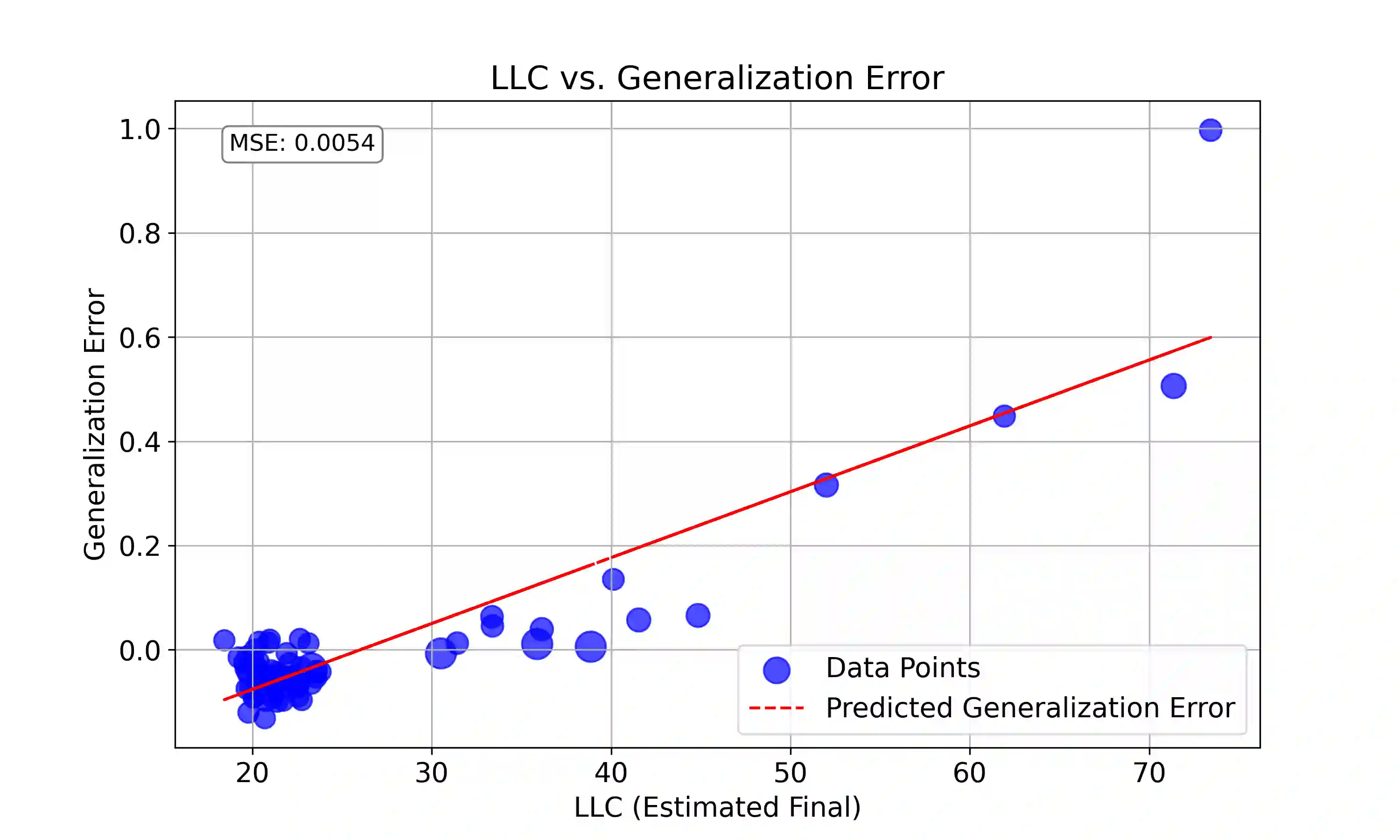

We relate the behavior of stochastic gradient descent (SGD) to Bayesian statistics by showing that SGD is effectively diffusion on a fractal landscape, and that the fractal dimension of this landscape can be accounted for in a purely Bayesian way. As a consequence, SGD can be regarded as a modified Bayesian sampler that accounts for the accessibility constraints induced by the fractal structure of the loss landscape. We verify these results experimentally by examining how the weights diffuse during training. The results offer insight into the factors that determine the learning process and suggest an answer to the question of how SGD and purely Bayesian sampling are related.
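The weight-diffusion measurement can be illustrated with a minimal sketch. It assumes that weights are recorded as flat vectors at each training step and that diffusion is characterized by the standard anomalous-diffusion scaling MSD(t) ∝ t^α. In that picture α = 1 is ordinary diffusion, while α < 1 (subdiffusion, with α = 2/d_w for a walk of dimension d_w) is the usual signature of motion on a fractal. The function names and the Gaussian toy walk below are hypothetical illustrations, not the authors' procedure; recorded SGD snapshots would replace `gaussian_walk`.

```python
import math
import random
from typing import List, Sequence

# Hypothetical representation: one run is a list of snapshots w(0), w(1), ...,
# each snapshot being the flattened weight vector at that step.
Trajectory = Sequence[Sequence[float]]


def squared_displacements(traj: Trajectory) -> List[float]:
    """|w(t) - w(0)|^2 for every recorded step t of one trajectory."""
    w0 = traj[0]
    return [sum((a - b) ** 2 for a, b in zip(w, w0)) for w in traj]


def ensemble_msd(trajs: Sequence[Trajectory]) -> List[float]:
    """Mean squared displacement averaged over independent runs of equal length."""
    per_run = [squared_displacements(t) for t in trajs]
    n_steps = len(per_run[0])
    return [sum(run[t] for run in per_run) / len(per_run) for t in range(n_steps)]


def diffusion_exponent(msd: Sequence[float]) -> float:
    """Least-squares slope alpha of log MSD(t) against log t, using t >= 1.

    Assumes MSD(t) ~ t**alpha: alpha = 1 is ordinary diffusion,
    alpha < 1 is subdiffusion, alpha = 2 is ballistic motion.
    """
    pts = [(math.log(t), math.log(m)) for t, m in enumerate(msd) if t >= 1 and m > 0]
    mx = sum(x for x, _ in pts) / len(pts)
    my = sum(y for _, y in pts) / len(pts)
    cov = sum((x - mx) * (y - my) for x, y in pts)
    var = sum((x - mx) ** 2 for x, _ in pts)
    return cov / var


def gaussian_walk(dim: int, steps: int, rng: random.Random) -> List[List[float]]:
    """Toy stand-in for recorded weights: an unconstrained Gaussian random walk."""
    w = [0.0] * dim
    traj = [list(w)]
    for _ in range(steps):
        w = [x + rng.gauss(0.0, 0.01) for x in w]
        traj.append(list(w))
    return traj


if __name__ == "__main__":
    rng = random.Random(0)
    walks = [gaussian_walk(dim=10, steps=100, rng=rng) for _ in range(200)]
    alpha = diffusion_exponent(ensemble_msd(walks))
    print(f"estimated alpha = {alpha:.3f}")  # near 1 for a free random walk
```

Averaging over many runs before fitting keeps the log-log slope stable. A single run's displacement curve is much noisier. Under the hedged interpretation above, an exponent measurably below 1 on real training trajectories would be the kind of evidence consistent with diffusion on a fractal landscape, whereas the free Gaussian walk serves only as an α ≈ 1 baseline.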
