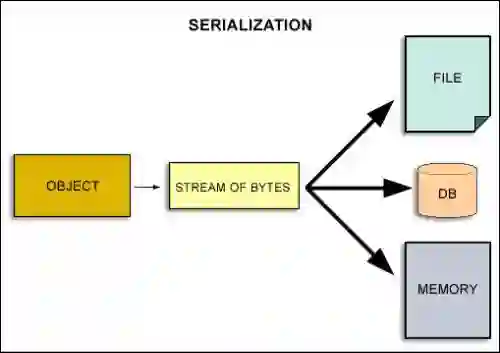

The rapid growth of large Transformer-based models, specifically Large Language Models (LLMs), now scaling to trillions of parameters, has necessitated training across thousands of GPUs using complex hybrid parallelism strategies (e.g., data, tensor, and pipeline parallelism). Checkpointing this massive, distributed state is critical for a wide range of use cases, such as resilience, suspend-resume, investigating undesirable training trajectories, and explaining model evolution. However, existing checkpointing solutions typically treat model state as opaque binary blobs, ignoring the "3D heterogeneity" of the underlying data structures, which vary by memory location (GPU vs. host), by the number of "logical" objects sharded and split across multiple files, and by data type (tensors vs. Python objects) together with its serialization requirements. This results in significant runtime overheads due to blocking device-to-host transfers, data-oblivious serialization, and storage I/O contention. In this paper, we introduce DataStates-LLM, a novel checkpointing architecture that leverages State Providers to decouple state abstraction from data movement. DataStates-LLM exploits the immutability of model parameters during the forward and backward passes to perform "lazy", non-blocking asynchronous snapshots. By introducing State Providers, we efficiently coalesce fragmented, heterogeneous shards and overlap the serialization of metadata with bulk tensor I/O. We evaluate DataStates-LLM on models up to 70B parameters on 256 A100-40GB GPUs. Our results demonstrate that DataStates-LLM achieves up to 4$\times$ higher checkpointing throughput and reduces end-to-end training time by up to 2.2$\times$ compared to state-of-the-art solutions, effectively mitigating the serialization and heterogeneity bottlenecks in extreme-scale LLM training.
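The "lazy" snapshot idea described in the abstract can be illustrated with a toy sketch. This is not the DataStates-LLM implementation or its API: `LazySnapshotter` and `train` are hypothetical names, plain Python lists stand in for GPU tensors, and `copy.deepcopy` on a background thread stands in for the device-to-host transfer and file flush. The only point it makes is the ordering: the copy runs while the parameters are read-only (forward and backward), and the training loop blocks only just before the next in-place update.

```python
import copy
import threading


class LazySnapshotter:
    """Hypothetical helper: copies state in the background while it is immutable."""

    def __init__(self):
        self._thread = None
        self.snapshots = []  # list of (step, copied_state)

    def start(self, step, state):
        # Launch the copy without blocking the caller (the training loop).
        def _copy():
            self.snapshots.append((step, copy.deepcopy(state)))

        self._thread = threading.Thread(target=_copy)
        self._thread.start()

    def wait(self):
        # Block only if a previously started copy has not finished yet.
        if self._thread is not None:
            self._thread.join()
            self._thread = None


def train(steps, checkpoint_every, lr=0.5):
    params = {"w": [0.0, 0.0, 0.0]}  # stand-in for model parameters
    snap = LazySnapshotter()
    for step in range(1, steps + 1):
        # Forward + backward: parameters are only read here, so a pending
        # snapshot of them can safely proceed in parallel.
        grads = [1.0 for _ in params["w"]]

        # The update mutates parameters in place, so any in-flight snapshot
        # must complete first. This is the only blocking point.
        snap.wait()
        for i, g in enumerate(grads):
            params["w"][i] -= lr * g

        if step % checkpoint_every == 0:
            snap.start(step, params)  # returns immediately
    snap.wait()  # drain the last snapshot before returning
    return snap.snapshots
```

Calling `train(steps=4, checkpoint_every=2)` in this sketch yields two snapshots, taken after steps 2 and 4, each reflecting the parameters exactly as they were when the snapshot was requested.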
