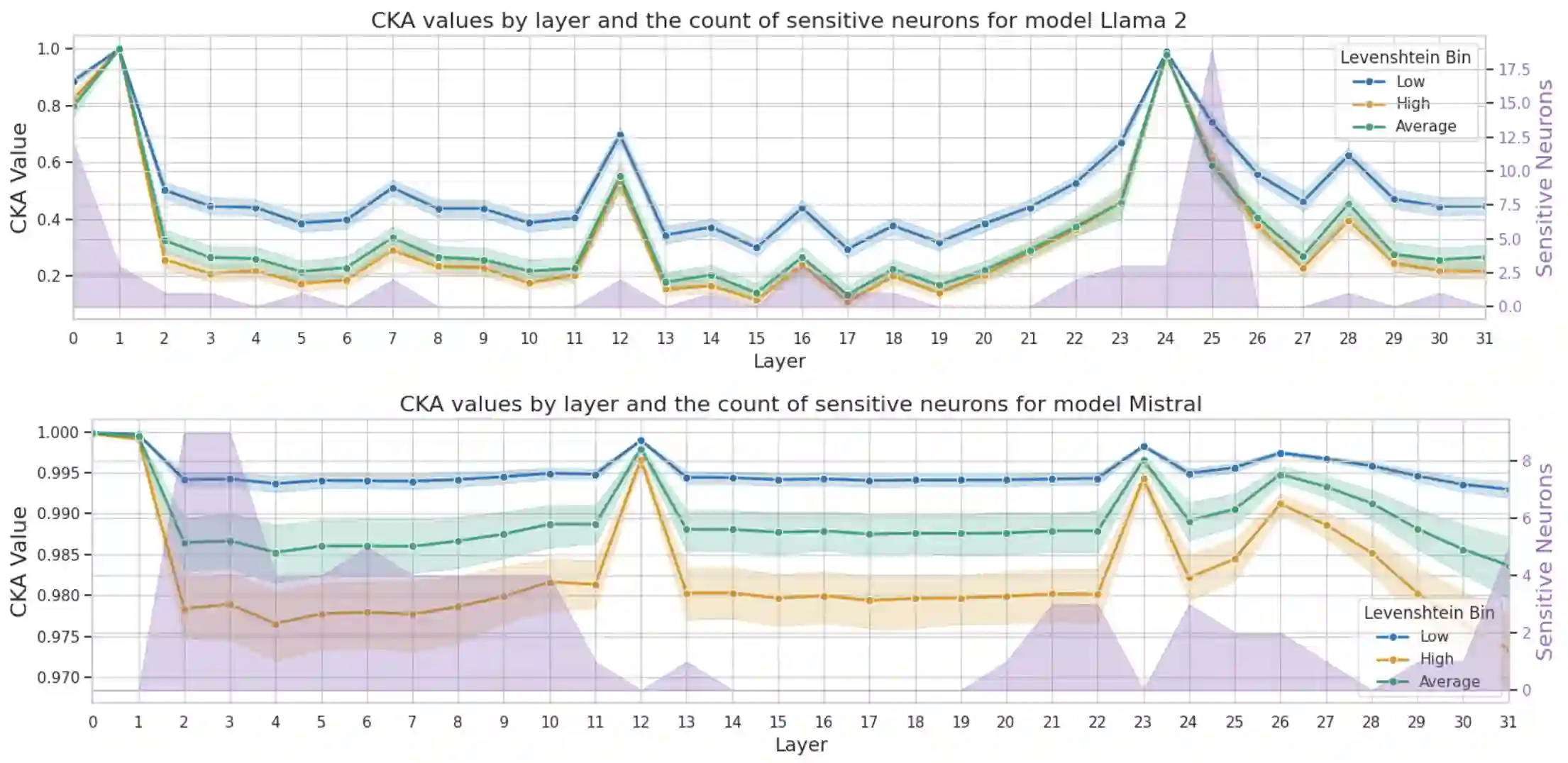

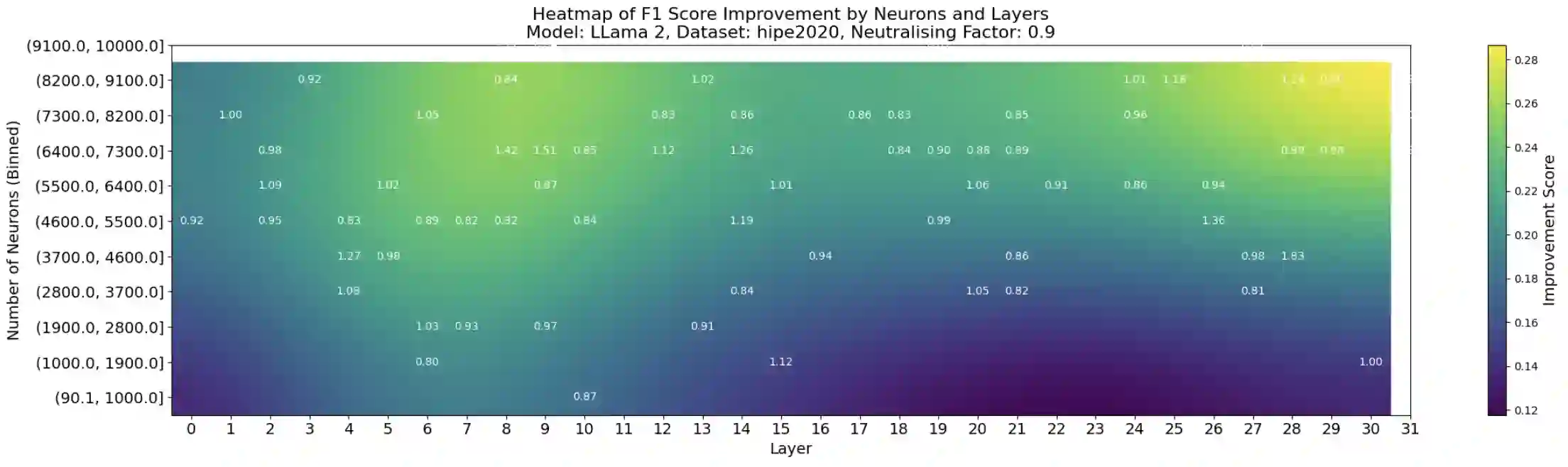

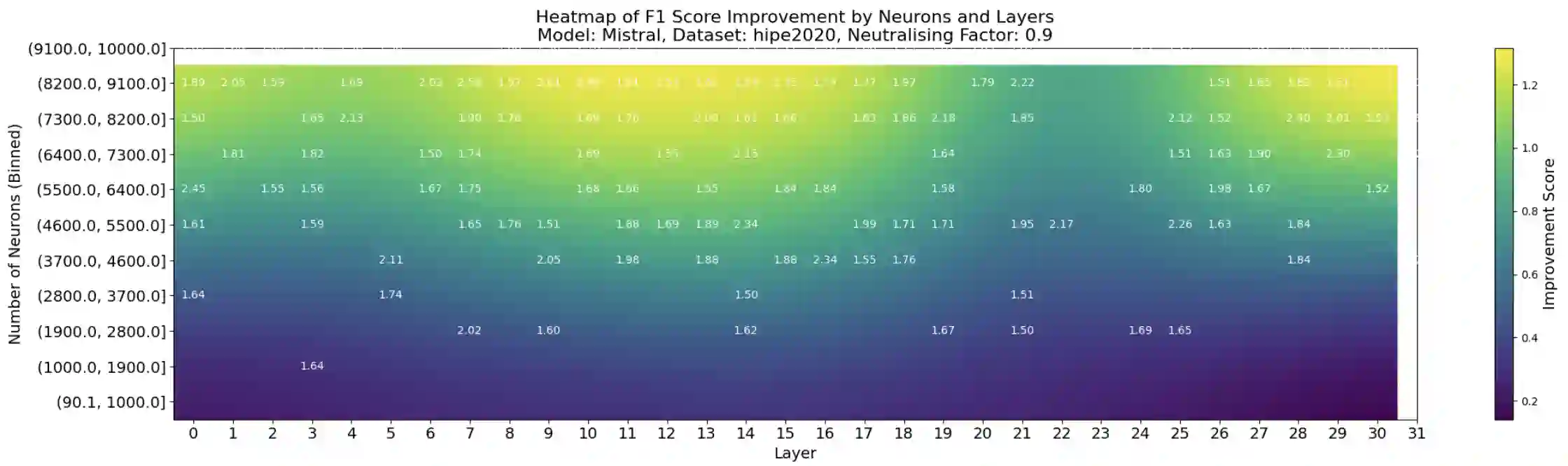

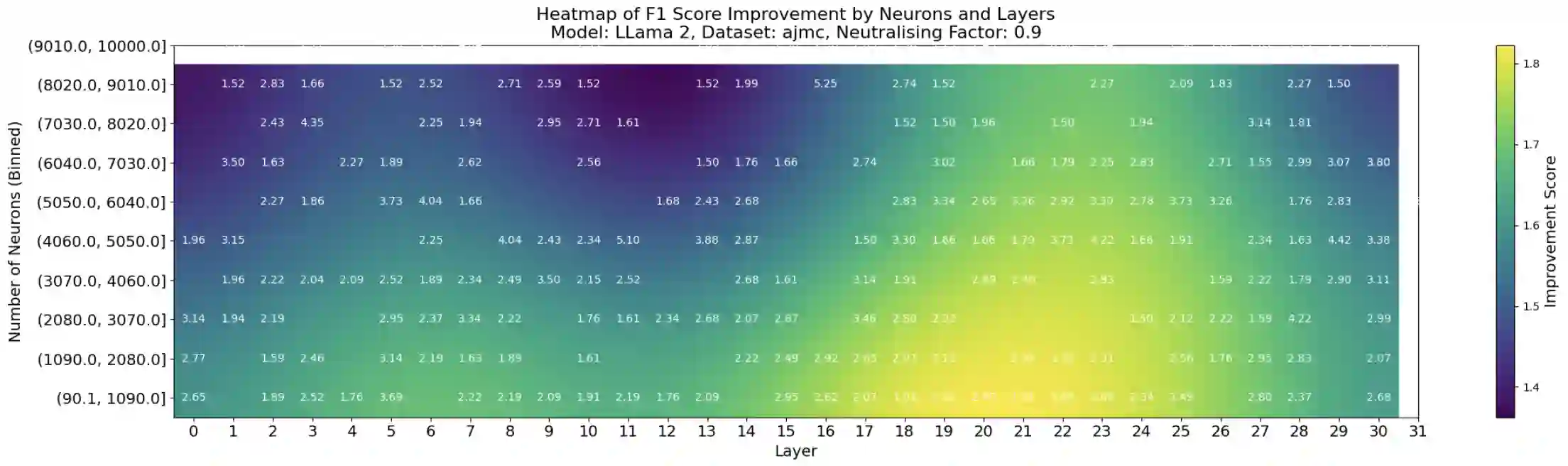

This paper investigates whether the Transformer architecture contains OCR-sensitive neurons and how they affect named entity recognition (NER) performance on historical documents. By analysing neuron activation patterns in response to clean and noisy text inputs, we identify OCR-sensitive neurons and then neutralise them. Experiments with two open-access large language models (Llama2 and Mistral) demonstrate the existence of OCR-sensitive regions and show improved NER performance on historical newspapers and classical commentaries, highlighting the potential of targeted neuron modulation for handling noisy text.
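The identify-then-neutralise idea can be illustrated with a toy sketch. The abstract does not state the selection criterion or how neutralisation is done, so everything below is an assumption made for illustration: neurons are ranked by the absolute gap between their mean activation on clean and on noisy inputs, and the top-ranked ones are zeroed. The function names (`find_sensitive_neurons`, `neutralise`) and the synthetic activations are hypothetical and do not come from the paper.

```python
import random
from statistics import fmean


def mean_activation(rows):
    """Column-wise mean over a list of equal-length activation vectors."""
    return [fmean(col) for col in zip(*rows)]


def find_sensitive_neurons(clean_acts, noisy_acts, top_k):
    """Return the indices of the top_k neurons whose mean activation differs
    most between clean and noisy inputs (assumed criterion, not the paper's)."""
    clean_mean = mean_activation(clean_acts)
    noisy_mean = mean_activation(noisy_acts)
    gaps = [abs(c - n) for c, n in zip(clean_mean, noisy_mean)]
    ranked = sorted(range(len(gaps)), key=lambda i: gaps[i], reverse=True)
    return sorted(ranked[:top_k])


def neutralise(acts, neuron_ids):
    """Zero the selected neurons in every activation vector (assumed
    neutralisation strategy); the input is left unmodified."""
    blocked = set(neuron_ids)
    return [[0.0 if i in blocked else v for i, v in enumerate(row)]
            for row in acts]


# Synthetic stand-in for recorded activations: 200 samples, 16 neurons.
random.seed(0)
n_samples, n_neurons = 200, 16
clean = [[random.gauss(0, 1) for _ in range(n_neurons)]
         for _ in range(n_samples)]
# Noisy inputs perturb every neuron slightly and shift neurons 3 and 11
# strongly, mimicking a pair of OCR-sensitive units.
noisy = [[v + random.gauss(0, 0.05) + (2.0 if i in (3, 11) else 0.0)
          for i, v in enumerate(row)]
         for row in clean]

sensitive = find_sensitive_neurons(clean, noisy, top_k=2)
patched = neutralise(noisy, sensitive)
```

In a real model, the activations would be recorded from chosen layers while the model processes paired clean and OCR-noised text, and the zeroing would be applied during the forward pass rather than to stored vectors.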
