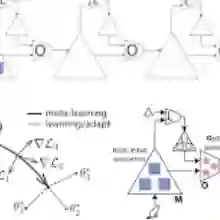

Estimating conditional average treatment effects (CATE) from observational data involves modeling decisions that differ from those in supervised learning, particularly concerning how to regularize model complexity. Previous approaches can be grouped into two primary "meta-learner" paradigms that impose distinct inductive biases. Indirect meta-learners first fit and regularize separate potential outcome (PO) models and then estimate the CATE as their difference, whereas direct meta-learners construct and directly regularize estimators of the CATE function itself. Neither approach consistently outperforms the other: indirect learners perform well when the PO functions are simple, while direct learners perform better when the CATE is simpler than the individual PO functions. In this paper, we introduce the Hybrid Learner (H-learner), a novel regularization strategy that interpolates between direct and indirect regularization depending on the dataset at hand. The H-learner achieves this by learning intermediate functions whose difference closely approximates the CATE, without requiring that each function accurately approximate its corresponding PO. We demonstrate that intentionally allowing suboptimal fits to the POs improves the bias-variance tradeoff in estimating the CATE. Experiments on semi-synthetic and real-world benchmark datasets show that the H-learner consistently operates at the Pareto frontier, effectively combining the strengths of both direct and indirect meta-learners.
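To make the indirect/direct distinction concrete, the following is a minimal sketch and not the H-learner itself. It assumes ridge regression as the regularized model, a binary treatment, and a known propensity score `e` (as in a randomized experiment); with observational data the propensity would have to be estimated. The direct learner here regresses an inverse-propensity-weighted pseudo-outcome on the covariates, which is one common choice among several. All function names (`ridge_fit`, `indirect_cate`, `direct_cate`, `demo`) are hypothetical and introduced only for illustration.

```python
import numpy as np


def ridge_fit(X, y, alpha):
    """Closed-form ridge regression with an unpenalized intercept."""
    Xb = np.column_stack([np.ones(len(X)), X])
    penalty = alpha * np.eye(Xb.shape[1])
    penalty[0, 0] = 0.0  # do not shrink the intercept
    return np.linalg.solve(Xb.T @ Xb + penalty, Xb.T @ y)


def ridge_predict(beta, X):
    return np.column_stack([np.ones(len(X)), X]) @ beta


def indirect_cate(X, w, y, X_new, alpha=1.0):
    """Indirect learner: regularize each PO model, then take the difference."""
    beta1 = ridge_fit(X[w == 1], y[w == 1], alpha)  # treated-arm outcome model
    beta0 = ridge_fit(X[w == 0], y[w == 0], alpha)  # control-arm outcome model
    return ridge_predict(beta1, X_new) - ridge_predict(beta0, X_new)


def direct_cate(X, w, y, e, X_new, alpha=1.0):
    """Direct learner: regularize a single model of the CATE itself.

    Assumes the propensity e is known. The pseudo-outcome below has
    conditional mean equal to the CATE, so regressing it on X targets
    the CATE directly.
    """
    pseudo = y * (w - e) / (e * (1.0 - e))
    beta = ridge_fit(X, pseudo, alpha)
    return ridge_predict(beta, X_new)


def demo(seed=0, n=4000):
    """Simulated data (an assumption for illustration, not a benchmark)."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, 5))
    e = 0.5                                   # known propensity
    w = rng.binomial(1, e, size=n)            # treatment indicator
    mu0 = X @ np.array([1.0, -1.0, 0.5, 0.0, 0.0])  # control PO
    tau = 1.0 + 0.5 * X[:, 0]                 # true CATE
    y = mu0 + w * tau + rng.normal(size=n)

    def rmse(est):
        return float(np.sqrt(np.mean((est - tau) ** 2)))

    return rmse(indirect_cate(X, w, y, X)), rmse(direct_cate(X, w, y, e, X))


if __name__ == "__main__":
    rmse_indirect, rmse_direct = demo()
    print(f"indirect RMSE: {rmse_indirect:.3f}, direct RMSE: {rmse_direct:.3f}")
```

In the indirect learner the penalty `alpha` acts on the two PO models, while in the direct learner it acts on the CATE model; this is the difference in inductive bias that the text describes. Which estimator has lower error on a given dataset depends on the relative complexity of the POs and the CATE, and on how noisy the pseudo-outcome is.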
