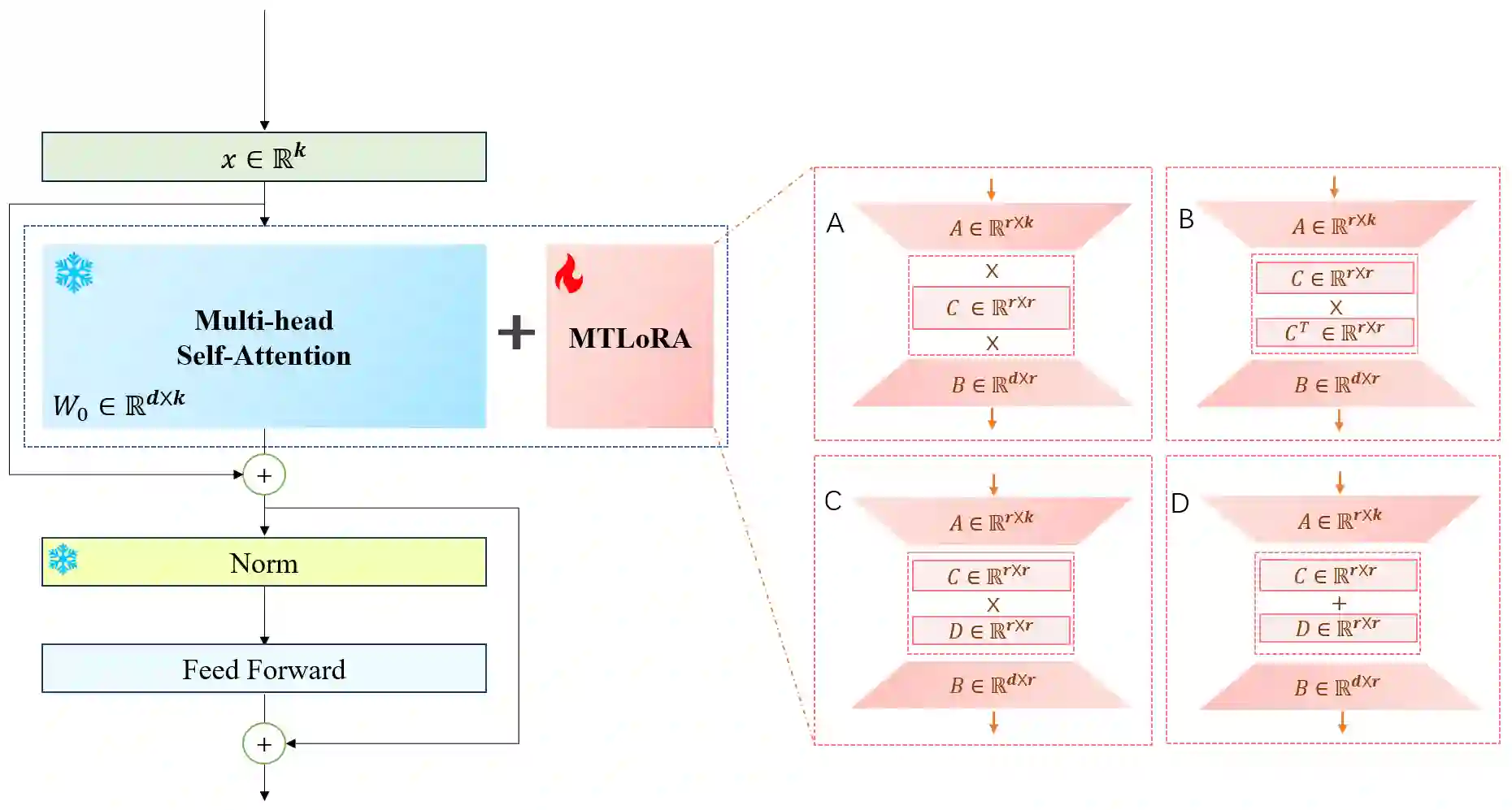

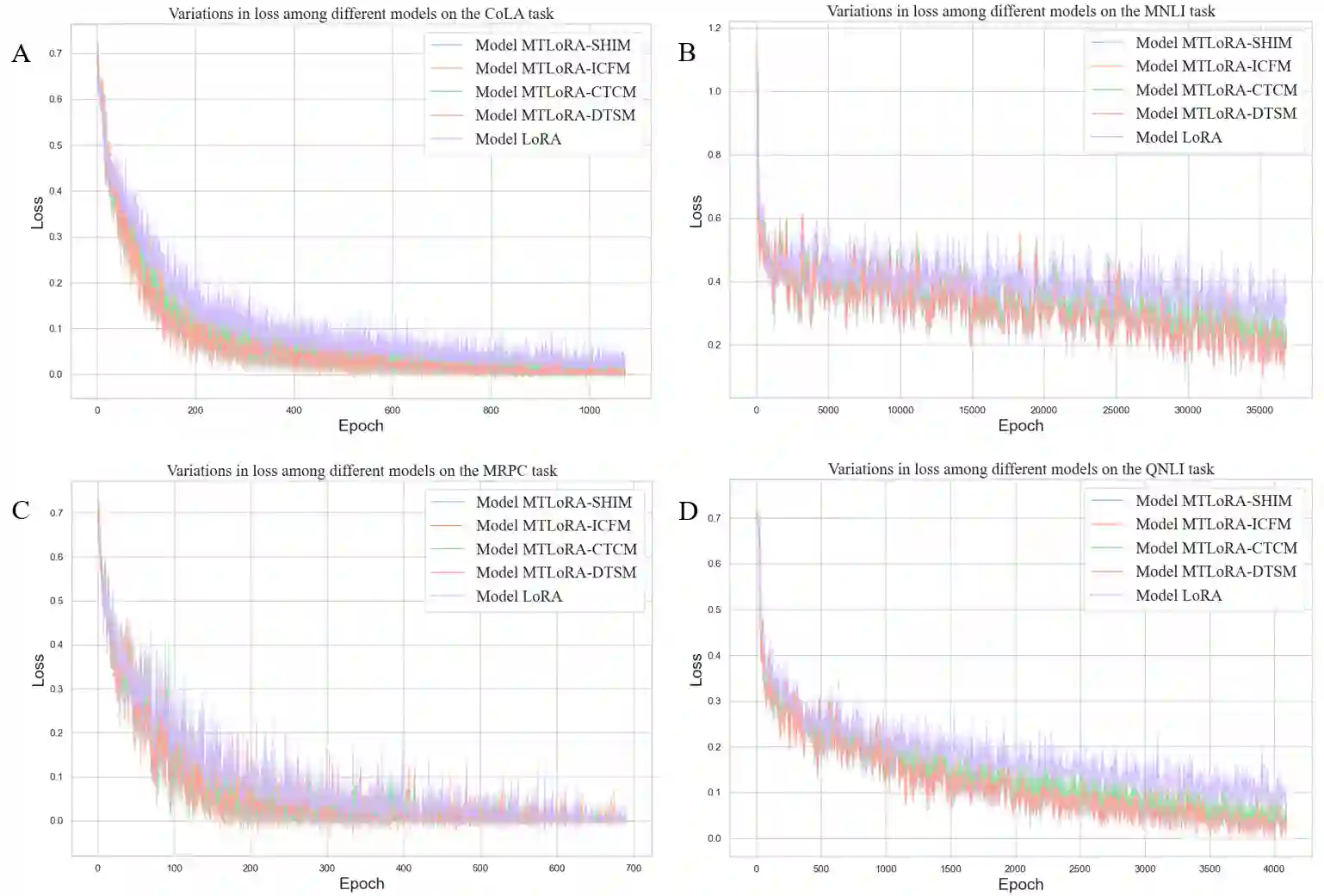

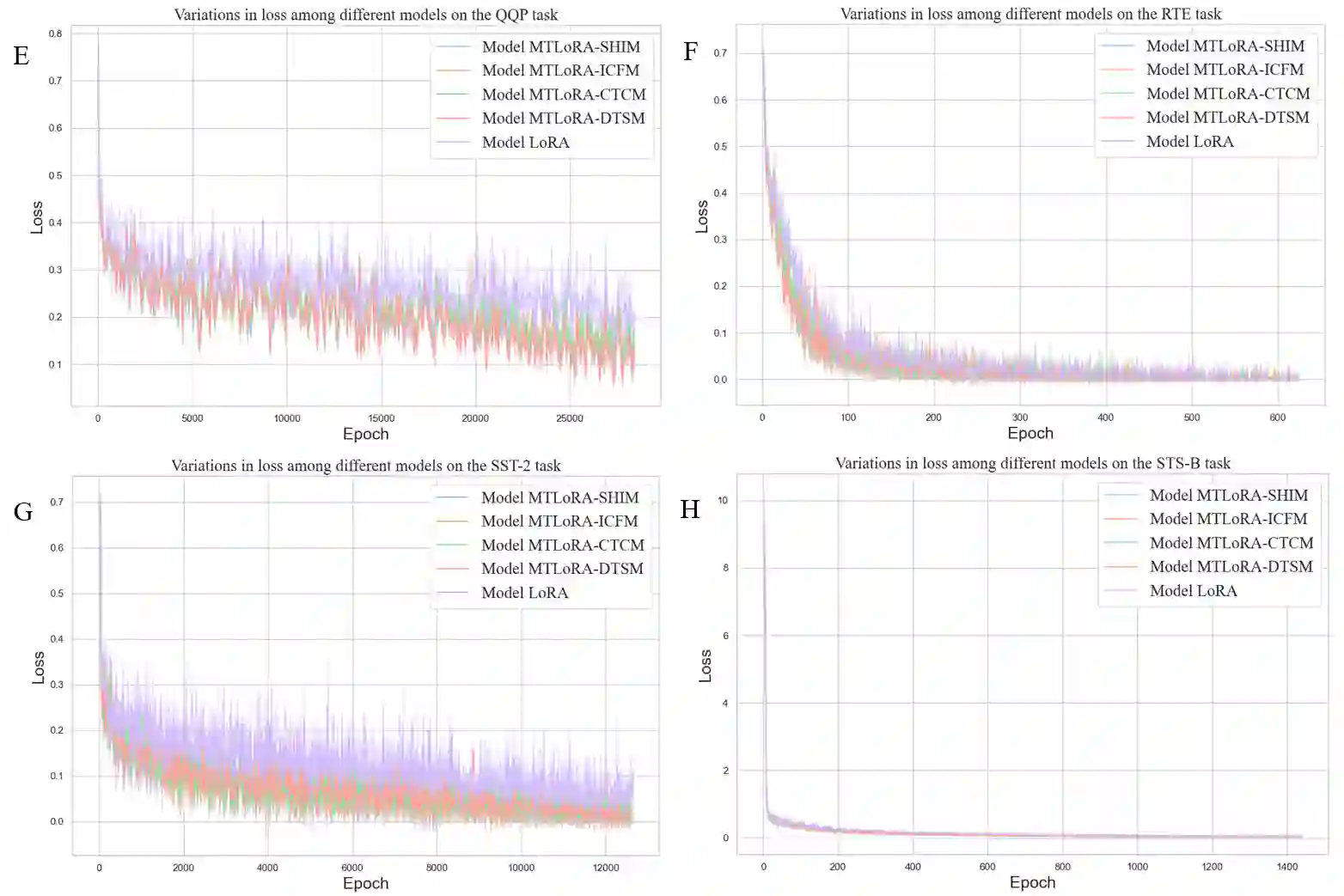

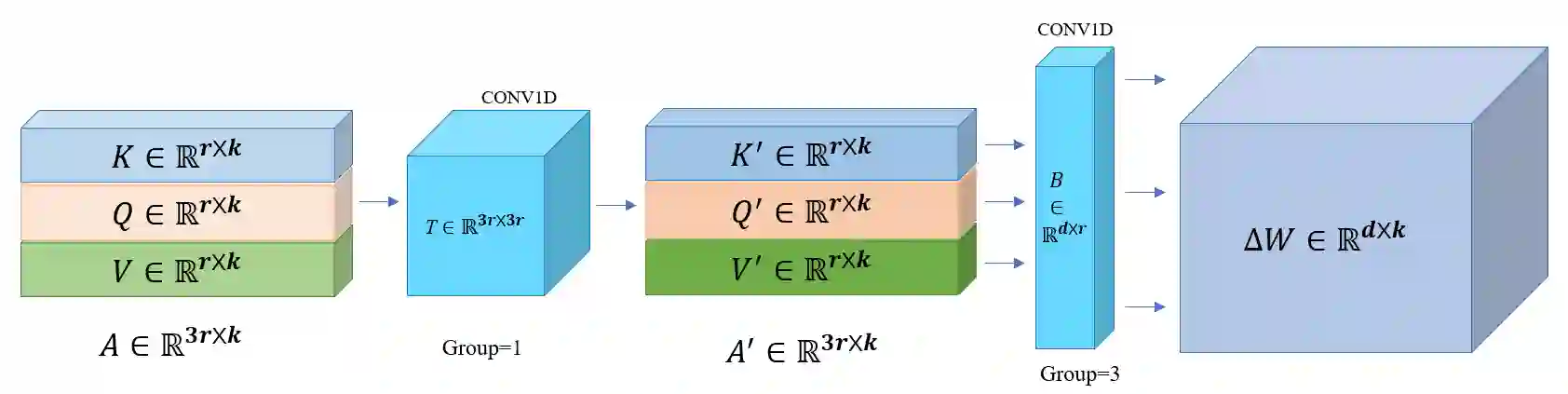

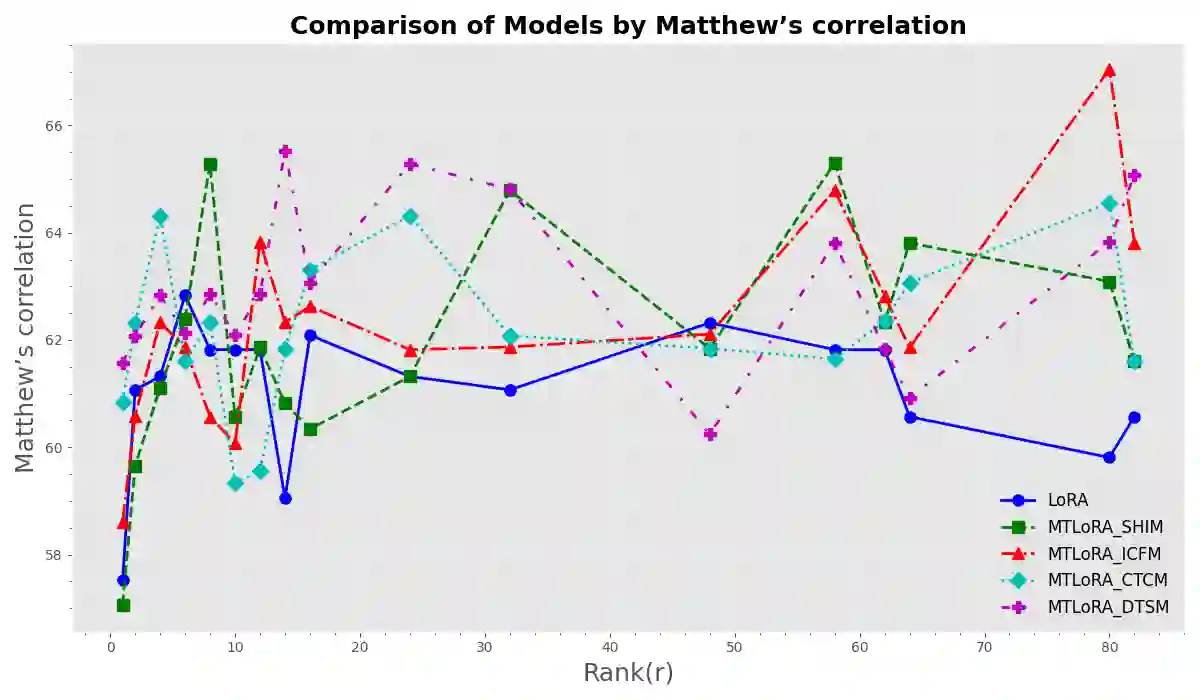

Fine-tuning Large Pretrained Language Models (LPLMs) has been shown to significantly improve performance on a variety of downstream tasks and to effectively control the models' output behavior. Recent studies have proposed numerous methods that fine-tune only a small number of parameters of open-source LPLMs, reducing the demand for computational and storage resources. Among these, reparameterization fine-tuning methods, represented by LoRA (Low-Rank Adaptation), have gained popularity. We find that although these methods perform well in many respects, there is still considerable room for improvement in adaptability to complex tasks, performance, stability, and algorithmic complexity. Inspired by the idea that the functions of the brain are shaped by its geometric structure, this paper integrates that idea into LoRA and proposes a new matrix-transformation-based reparameterization method for efficient fine-tuning, named Matrix-Transformation based Low-Rank Adaptation (MTLoRA). MTLoRA dynamically alters the spatial geometric structure of the task-specific parameter matrix by applying a transformation matrix T that performs linear transformations such as rotation, scaling, and translation. This generates new matrix feature patterns (eigenvectors), mimicking the fundamental influence that complex geometric feature patterns in the brain have on its functions, and thereby enhances the model's performance on downstream tasks. On Natural Language Understanding (NLU) tasks, evaluated with the GLUE benchmark, MTLoRA achieves an overall performance increase of about 1.0% across eight tasks; on Natural Language Generation (NLG) tasks, it improves performance by an average of 0.95% and 0.56% on DART and WebNLG, respectively.
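To make the core idea concrete, the following is a minimal NumPy sketch rather than the paper's implementation. It assumes that the transformation matrix T is a square r × r matrix inserted between the two LoRA factors, so the update becomes ΔW = B·T·A instead of ΔW = B·A. The abstract does not state where T is placed, so this placement, the function names, and the toy dimensions are all illustrative assumptions. Note also that a translation cannot be expressed as multiplication by T alone, so the sketch covers only rotation and scaling.

```python
import numpy as np


def lora_delta(B, A):
    """Standard LoRA update: delta_W = B @ A, with B (d_out x r) and A (r x d_in)."""
    return B @ A


def mtlora_delta(B, T, A):
    """Assumed MTLoRA-style update: delta_W = B @ T @ A, with T of shape (r x r).

    The placement of T between B and A is an assumption made for illustration.
    """
    return B @ T @ A


def rotation_scaling(theta, scale):
    """2x2 matrix that rotates by theta radians and scales uniformly by `scale`."""
    c, s = np.cos(theta), np.sin(theta)
    return scale * np.array([[c, -s], [s, c]])


# Toy dimensions (hypothetical): rank r = 2 so that T is a plain 2-D rotation.
rng = np.random.default_rng(0)
d_out, d_in, r = 4, 3, 2
B = rng.normal(size=(d_out, r))
A = rng.normal(size=(r, d_in))

T_identity = np.eye(r)                    # T = I recovers plain LoRA
T_rot = rotation_scaling(np.pi / 4, 2.0)  # rotate by 45 degrees, scale by 2

delta_plain = lora_delta(B, A)
delta_identity = mtlora_delta(B, T_identity, A)
delta_rot = mtlora_delta(B, T_rot, A)

# Extra trainable parameters introduced by T under this assumption: r * r.
extra_params = T_rot.size
```

Under this assumption, setting T to the identity reproduces the plain LoRA update, and any other T changes the update while keeping its rank at most r. The only additional trainable parameters are the r × r entries of T, which is small compared with the factors B and A whenever r is much smaller than the layer dimensions.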
