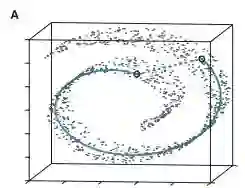

Modern generative modeling methods have demonstrated strong performance in learning complex data distributions from clean samples. In many scientific and imaging applications, however, clean samples are unavailable, and only noisy or linearly corrupted measurements can be observed. Moreover, extracting latent structure present in the data, such as manifold geometry, is important for downstream scientific analysis. In this work, we introduce Riemannian AmbientFlow, a framework for simultaneously learning a probabilistic generative model and the underlying nonlinear data manifold directly from corrupted observations. Building on the variational inference framework of AmbientFlow, our approach incorporates data-driven Riemannian geometry induced by normalizing flows, enabling the extraction of manifold structure through pullback metrics and Riemannian Autoencoders. We establish theoretical guarantees showing that, under appropriate geometric regularization and measurement conditions, the learned model recovers the underlying data distribution up to a controllable error and yields a smooth, bi-Lipschitz manifold parametrization. We further show that the resulting smooth decoder can serve as a principled generative prior for inverse problems, with recovery guarantees. We empirically validate our approach on low-dimensional synthetic manifolds and on MNIST.
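
To make the two ingredients named above concrete, the following is a minimal sketch, not the paper's implementation. It assumes one common construction of a pullback metric, in which the Euclidean metric is pulled back through a diffeomorphism `phi`, giving `G(x) = J(x)^T J(x)` with `J` the Jacobian of `phi`. Here `phi` is a hypothetical hand-written map standing in for a trained normalizing flow, and `corrupt` is an assumed linear-plus-Gaussian measurement model `y = A x + n`; both names, the matrix `A`, and the noise level are illustrative choices.

```python
import random

def phi(x):
    """Toy diffeomorphism of R^2 (hypothetical stand-in for a learned flow).

    It straightens the parabola x2 = x1**2 onto the horizontal axis.
    """
    x1, x2 = x
    return (x1, x2 - x1 ** 2)

def jacobian(f, x, eps=1e-6):
    """Central finite-difference Jacobian of f at x, returned as a list of rows."""
    n = len(x)
    cols = []
    for j in range(n):
        xp, xm = list(x), list(x)
        xp[j] += eps
        xm[j] -= eps
        fp, fm = f(xp), f(xm)
        # Column j holds the partial derivatives of every output w.r.t. x_j.
        cols.append([(a - b) / (2 * eps) for a, b in zip(fp, fm)])
    m = len(cols[0])
    return [[cols[j][i] for j in range(n)] for i in range(m)]

def pullback_metric(f, x):
    """Euclidean metric pulled back through f: G(x) = J(x)^T J(x)."""
    J = jacobian(f, x)
    n = len(x)
    return [[sum(J[k][i] * J[k][j] for k in range(len(J))) for j in range(n)]
            for i in range(n)]

def corrupt(x, A, sigma, rng):
    """Assumed measurement model: y = A x + n, with n ~ N(0, sigma^2 I)."""
    return [sum(a * xi for a, xi in zip(row, x)) + rng.gauss(0.0, sigma)
            for row in A]

x = (1.0, 0.5)
G = pullback_metric(phi, x)  # analytically [[1 + 4*x1**2, -2*x1], [-2*x1, 1]]
y = corrupt(x, A=[[1.0, 0.0]], sigma=0.1, rng=random.Random(0))  # observes x1 only
```

In this toy setting the metric `G` depends on the point `x`, so lengths measured with it follow the curved coordinates defined by `phi` rather than straight Euclidean lines. In the setting the abstract describes, only observations like `y` would be available for training, never `x` itself.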