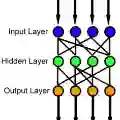

We show that feedforward neural networks with ReLU activation generalize on low-complexity data, suitably defined. Given i.i.d. data generated from a simple programming language, the minimum description length (MDL) feedforward neural network that interpolates the data generalizes with high probability. We define this simple programming language, along with a notion of description length for such networks. We provide several examples on basic computational tasks, such as checking the primality of a natural number. For primality testing, our theorem shows the following. Suppose that we draw an i.i.d. sample of $\Theta(N^{\delta}\ln N)$ numbers uniformly at random from $1$ to $N$, where $\delta\in (0,1)$. For each number $x_i$, let $y_i = 1$ if $x_i$ is prime and $y_i = 0$ otherwise. Then, with high probability, the MDL network fitted to this data correctly decides whether a newly drawn number between $1$ and $N$ is prime, with test error $O(N^{-\delta})$. Note that the network is not designed to detect primes; minimum description length learning discovers a network that does so.
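To make the primality setup concrete, the following is a minimal sketch of how the labeled training sample described above could be generated. It covers only the data side, not the MDL network or its fitting procedure. The function names, the trial-division primality check, the fixed seed, and the constant `c` hidden inside $\Theta(\cdot)$ (set to 1 here) are illustrative assumptions, not details taken from the paper.

```python
import math
import random


def is_prime(n: int) -> bool:
    """Ground-truth label via trial division (illustrative choice, not from the paper)."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3 are prime
    if n % 2 == 0:
        return False
    # Only odd divisors up to floor(sqrt(n)) need to be checked.
    for d in range(3, math.isqrt(n) + 1, 2):
        if n % d == 0:
            return False
    return True


def sample_size(N: int, delta: float, c: float = 1.0) -> int:
    """Sample size c * N^delta * ln(N); c is an assumed stand-in for the Theta constant."""
    return math.ceil(c * N ** delta * math.log(N))


def draw_labeled_sample(N: int, delta: float, c: float = 1.0, seed: int = 0):
    """Draw x_i i.i.d. uniformly from {1, ..., N} and label y_i = 1 iff x_i is prime."""
    rng = random.Random(seed)  # seeded only so the sketch is reproducible
    m = sample_size(N, delta, c)
    xs = [rng.randint(1, N) for _ in range(m)]  # randint is inclusive on both ends
    return [(x, int(is_prime(x))) for x in xs]


if __name__ == "__main__":
    data = draw_labeled_sample(N=10**6, delta=0.5)
    print(len(data), "labeled examples; first five:", data[:5])
```

Under these assumptions, $N = 10^6$ and $\delta = 1/2$ give a sample of roughly $1.4 \times 10^4$ numbers, about 1.4% of the range, while the stated guarantee bounds the test error by $O(N^{-1/2}) = O(10^{-3})$.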
