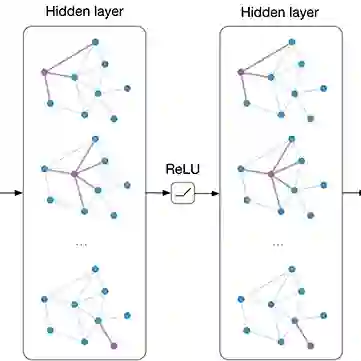

Vision-and-Language Navigation (VLN) is a challenging task in which an agent must navigate to a location described in natural language, relying on visual observations. Relations between objects can enhance the agent's navigation ability, and these relations are usually learned either from internal objects or from external datasets. Traditional studies model the relations between internal objects with graph convolutional networks (GCNs). However, GCNs tend to be shallow, which limits their modeling ability. To address this issue, we use a cross-attention mechanism to learn the connections between objects over a trajectory, taking temporal continuity into account; we term this Temporal Object Relations (TOR). External datasets, in turn, differ from the navigation environment, which leads to inaccurate relation modeling. To avoid this problem, we construct object connections from the observations at all viewpoints in the navigation environment, which ensures complete spatial coverage and eliminates the domain gap; we call this Spatial Object Relations (SOR). Additionally, we observe that agents may repeatedly visit the same location during navigation, which significantly hinders their performance. To resolve this, we introduce the Turning Back Penalty (TBP) loss function, which penalizes the agent's repetitive visiting behavior and substantially reduces the navigation distance. Experimental results on the REVERIE, SOON, and R2R datasets demonstrate the effectiveness of the proposed method.
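To make the TOR idea concrete, here is a minimal sketch of cross-attention over a trajectory, assuming that the objects seen at the current step act as queries and every object observed so far along the trajectory acts as keys and values. The function names are hypothetical, learned query/key/value projections are omitted for brevity, and this is an illustration of the general mechanism rather than the authors' implementation.

```python
import math
from typing import List

# Hypothetical helper names; an illustrative sketch, not the authors' code.
Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries: List[Vector], memory: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention in which the memory vectors serve as both
    keys and values (no learned projections, a simplification)."""
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)
    outputs = []
    for q in queries:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in memory]
        weights = softmax(scores)
        outputs.append([sum(w * v[j] for w, v in zip(weights, memory)) for j in range(d)])
    return outputs


def temporal_object_relations(trajectory: List[List[Vector]]) -> List[Vector]:
    """trajectory[t] holds the object feature vectors observed at step t.
    Objects at the latest step attend to every object seen so far along the
    trajectory, and the attended context is added back as a residual."""
    current = trajectory[-1]
    memory = [obj for step in trajectory for obj in step]
    context = cross_attention(current, memory)
    return [[a + c for a, c in zip(q, ctx)] for q, ctx in zip(current, context)]


# Two steps: one object at step 0, two objects at step 1 (the current step).
traj = [[[1.0, 0.0]], [[0.0, 1.0], [0.5, 0.5]]]
print(len(temporal_object_relations(traj)))  # 2 enriched current-step objects
```

Unlike a fixed-depth GCN over a single observation, every current object here can draw on any earlier observation in one attention step; a trained model would add learned projections and stack several such layers.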
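For SOR, one simple way to build object connections from every viewpoint of the navigation environment is to count label co-occurrences and normalize them into conditional probabilities. The sketch below assumes that each viewpoint yields a set of object labels and that relation strength is P(b observed | a observed); the function name and this particular statistic are hypothetical choices, and the paper may use a different relation measure.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, Iterable, Set, Tuple

# Hypothetical helper; an illustrative co-occurrence sketch, not the authors' code.


def spatial_object_relations(
    viewpoints: Iterable[Set[str]],
) -> Dict[Tuple[str, str], float]:
    """Estimate R[(a, b)] = P(b observed | a observed) from the object labels
    seen at every viewpoint of one environment."""
    single: Counter = Counter()
    pair: Counter = Counter()
    for labels in viewpoints:
        unique = set(labels)
        single.update(unique)
        # Count each unordered pair once per viewpoint, stored in both directions.
        for a, b in combinations(sorted(unique), 2):
            pair[(a, b)] += 1
            pair[(b, a)] += 1
    return {(a, b): n / single[a] for (a, b), n in pair.items()}


# Hypothetical object labels visible from three viewpoints of one house.
house = [{"sofa", "tv"}, {"sofa", "lamp"}, {"bed", "lamp"}]
relations = spatial_object_relations(house)
print(relations[("sofa", "tv")])  # 0.5: a tv is visible at 1 of the 2 sofa viewpoints
print(relations[("tv", "sofa")])  # 1.0: every tv viewpoint also shows a sofa
```

Because the statistics come from the navigation environment itself rather than an external dataset, there is no distribution gap between where relations are learned and where they are used; enumerating all viewpoints gives the complete spatial coverage the method relies on.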
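The abstract does not give the TBP formula, so the following is only a hypothetical penalty of the same kind: at each step it takes the probability mass the policy places on already-visited viewpoints and adds -log(1 - mass) to the loss, averaged over steps. The function name, its inputs, and this functional form are all assumptions made for illustration.

```python
import math
from typing import Dict, List, Set

# Hypothetical revisit penalty; the real TBP loss may be formulated differently.


def turning_back_penalty(
    step_probs: List[Dict[str, float]],
    visited_per_step: List[Set[str]],
    eps: float = 1e-8,
) -> float:
    """step_probs[t] maps each candidate viewpoint id to the policy's probability
    of moving there at step t; visited_per_step[t] holds the viewpoints visited
    before that decision. The step loss is -log(1 - mass on visited viewpoints):
    0 when no mass goes to visited nodes, growing as the revisit mass increases."""
    losses = []
    for probs, visited in zip(step_probs, visited_per_step):
        revisit_mass = sum(p for node, p in probs.items() if node in visited)
        # Clamp so floating-point mass slightly above 1 cannot reach log(0).
        losses.append(-math.log(max(1.0 - revisit_mass, eps)))
    return sum(losses) / len(losses) if losses else 0.0


# Step 0: no candidate was visited; step 1: half the mass goes back to "start".
probs = [{"a": 0.7, "b": 0.3}, {"start": 0.5, "c": 0.5}]
visited = [{"start"}, {"start", "a"}]
print(turning_back_penalty(probs, visited))  # log(2) / 2, about 0.3466
```

A log-barrier form like this punishes near-certain revisits much more than mild ones. Added to the usual navigation loss, it discourages the repeated visits described above; in practice some backtracking can be legitimate, so the weight of such a term would need tuning.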
