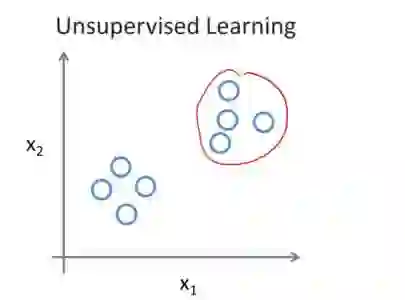

Learning actions that are relevant to decision-making and can be executed effectively is a key problem in autonomous robotics. Current state-of-the-art action representations in robotics do not learn the robot's actions from the effects those actions produce. Deep learning methods, although successful in solving manipulation tasks, share this limitation and are also costly in terms of memory or training data. In this paper, we propose an unsupervised algorithm that discretizes a continuous motion space and generates "action prototypes", each producing a different effect in the environment. After an exploration phase, the algorithm automatically builds a representation of the effects and groups motions into action prototypes, so that motions more likely to produce an effect are more heavily represented than those that lead to negligible changes. We evaluate our method on a simulated stair-climbing reinforcement learning task, and the preliminary results show that our effect-driven discretization outperforms uniformly and randomly sampled discretizations in convergence speed and maximum reward.
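To make the idea of effect-driven discretization concrete, the following is a minimal sketch, not the algorithm from the paper. It assumes a one-dimensional motion space, a hypothetical `toy_effect` environment with a dead zone in which motions change nothing, and plain k-means as a stand-in for whatever effect representation the method actually learns. All function names and parameters here are illustrative assumptions.

```python
import math
import random


def toy_effect(motion):
    """Hypothetical environment (an assumption, not from the paper):
    motions inside a dead zone produce no change at all."""
    if abs(motion) <= 0.5:
        return 0.0
    return motion - math.copysign(0.5, motion)


def kmeans_1d(values, k, iterations=50):
    """Plain 1-D k-means with evenly spaced initial centers (requires k >= 2).
    Returns one cluster label per value."""
    lo, hi = min(values), max(values)
    centers = [lo + (hi - lo) * i / (k - 1) for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iterations):
        # Assign each value to its nearest center.
        labels = [min(range(k), key=lambda j: abs(v - centers[j])) for v in values]
        # Move each non-empty cluster's center to the mean of its members.
        for j in range(k):
            members = [v for v, lab in zip(values, labels) if lab == j]
            if members:
                centers[j] = sum(members) / len(members)
    return labels


def build_prototypes(num_samples=2000, num_effect_clusters=5, seed=0):
    rng = random.Random(seed)
    # Exploration phase: sample motions uniformly and record their effects.
    motions = [rng.uniform(-1.0, 1.0) for _ in range(num_samples)]
    effects = [toy_effect(m) for m in motions]
    # Group samples by similarity of effect, not by similarity of motion.
    labels = kmeans_1d(effects, num_effect_clusters)
    # One prototype per effect cluster: the mean motion of its members.
    prototypes = []
    for j in range(num_effect_clusters):
        members = [m for m, lab in zip(motions, labels) if lab == j]
        if members:
            prototypes.append(sum(members) / len(members))
    return sorted(prototypes)


prototypes = build_prototypes()
print([round(p, 2) for p in prototypes])
```

Because the clustering happens in effect space, all dead-zone motions collapse into a single prototype, while the remaining prototypes spread over the motions that actually change the environment. A uniform grid over the same motion range would instead spend roughly half of its actions inside the dead zone.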
