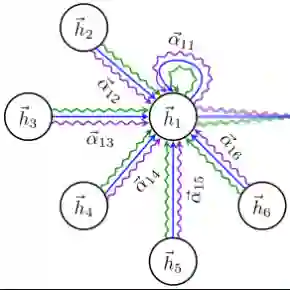

Time series analysis is critical for emerging intelligent network control and management functions. However, existing statistical and shallow machine learning models have shown limited prediction capability on multivariate time series. The intricate topological interdependencies and complex temporal patterns in network data demand new modeling approaches. In this paper, based on a systematic study of multivariate time series models, we present two deep learning models that aim to learn temporal patterns and network topological correlations at the same time: a customized network-temporal graph attention network (GAT) model and a fine-tuned multi-modal large language model (LLM) with a clustering overture. Both models are compared against an LSTM model that already outperforms the statistical methods. Through extensive training and performance studies on a real-world network dataset, the LLM-based model demonstrates superior overall prediction and generalization performance, while the GAT model shows its strength in reducing prediction variance across time series and prediction horizons. More detailed analysis also reveals important insights into correlation variability and prediction distribution discrepancies across time series and prediction horizons.
