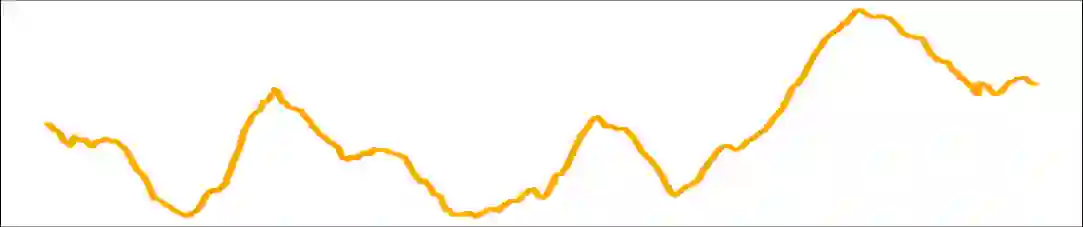

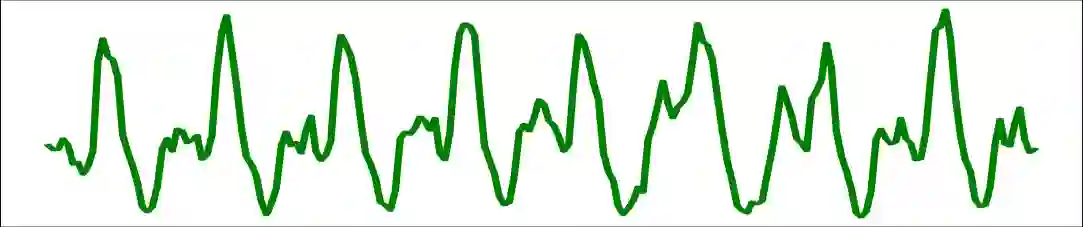

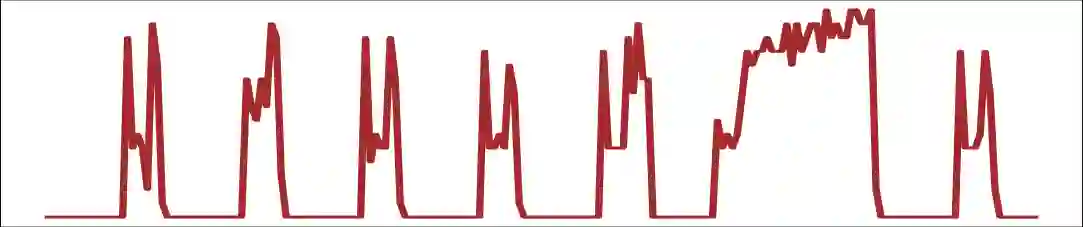

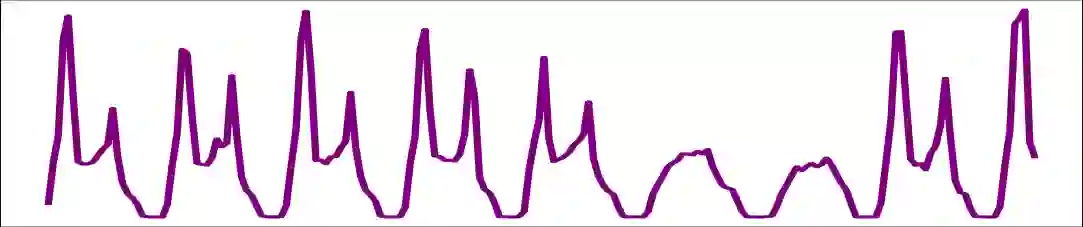

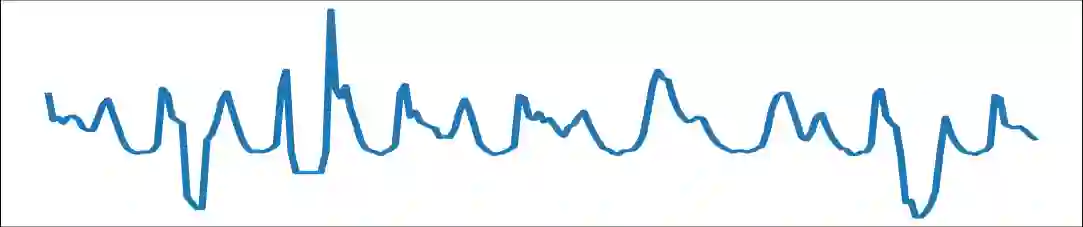

In the past few years, time series foundation models have achieved strong prediction accuracy. However, real-world time series often exhibit highly diverse temporal patterns across time spans and domains, which makes it difficult for a single model architecture to fit every scenario. In addition, time series data may contain multiple variables with complex correlations among them. Recent mainstream work models time series in a channel-independent manner in both the pretraining and finetuning stages, overlooking valuable inter-series dependencies. To this end, we propose Time Tracker for better predictions on multivariate time series data. First, we use a sparse mixture of experts (MoE) within Transformers to model diverse time series patterns, which eases the learning burden on a single model and improves its generalization. Second, we propose Any-variate Attention, which lets one unified model structure handle both univariate and multivariate time series, supporting channel-independent modeling during pretraining and channel-mixed modeling during finetuning. Third, we design a graph learning module that constructs relations among sequences from frequency-domain features, providing more precise guidance for capturing inter-series dependencies in channel-mixed modeling. With these components, Time Tracker achieves state-of-the-art performance in prediction accuracy, model generalization, and adaptability.
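To make the sparse MoE idea in the abstract more concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Time Tracker's implementation: the abstract does not specify the router, the expert architecture, or the value of k, so the names `top_k_gate` and `SparseMoE`, the single-linear-map experts, and the random initialization are all assumptions made for illustration.

```python
import math
import random


def top_k_gate(logits, k):
    """Return {expert_index: weight} for the k largest router logits.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def linear(weights, x):
    """Matrix-vector product, where weights is a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


class SparseMoE:
    """Toy sparse MoE layer. Each expert is one linear map (an assumption)."""

    def __init__(self, dim, num_experts, k, seed=0):
        rng = random.Random(seed)

        def rand_matrix(rows, cols):
            return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

        self.k = k
        self.router = rand_matrix(num_experts, dim)  # one logit per expert
        self.experts = [rand_matrix(dim, dim) for _ in range(num_experts)]

    def forward(self, token):
        """Route one token embedding through its k highest-scoring experts."""
        gate = top_k_gate(linear(self.router, token), self.k)
        out = [0.0] * len(token)
        for idx, weight in gate.items():
            expert_out = linear(self.experts[idx], token)  # only k experts run
            out = [o + weight * e for o, e in zip(out, expert_out)]
        return out, gate


layer = SparseMoE(dim=4, num_experts=8, k=2)
output, gate = layer.forward([1.0, 0.5, -0.5, 2.0])
print(sorted(gate))  # indices of the two experts chosen for this token
```

Because only k of the experts are evaluated per token, compute per token stays roughly constant as more experts are added, which is the usual motivation for letting different experts specialize in different temporal patterns.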
