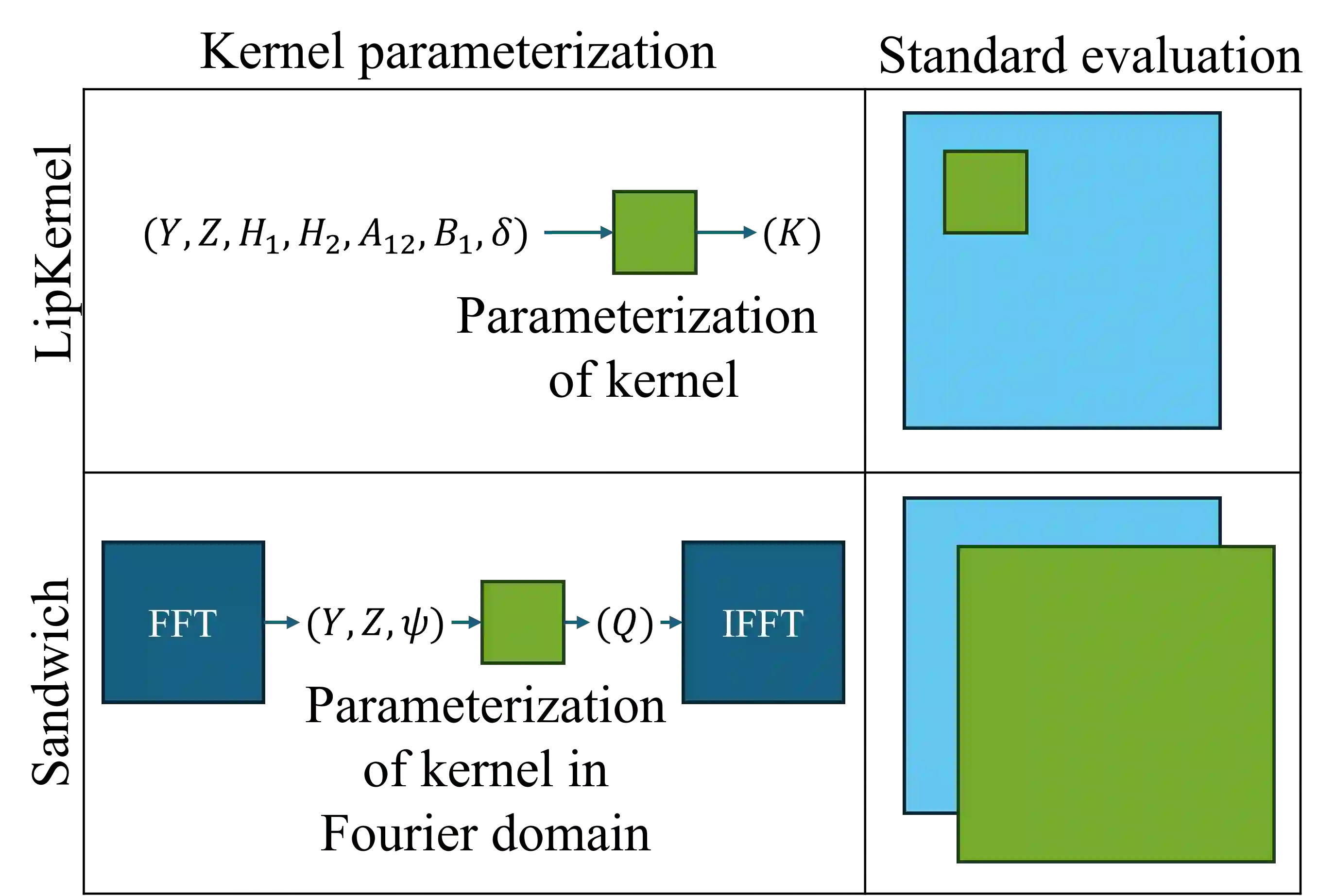

We propose a novel layer-wise parameterization for convolutional neural networks (CNNs) with built-in robustness guarantees, obtained by enforcing a prescribed Lipschitz bound. Each layer in our parameterization is designed to satisfy a linear matrix inequality (LMI), which in turn implies dissipativity with respect to a specific supply rate. Collectively, these layer-wise LMIs ensure Lipschitz boundedness of the network's input-output mapping and yield a more expressive parameterization than approaches based on spectral bounds or orthogonal layers. Our new method, LipKernel, directly parameterizes dissipative convolution kernels using a 2-D Roesser-type state space model. As a result, the convolutional layers are available in standard form after training and can be evaluated without computational overhead. In numerical experiments, we show that our method runs orders of magnitude faster than state-of-the-art Lipschitz-bounded networks that parameterize convolutions in the Fourier domain, which makes our approach particularly attractive for improving the robustness of learning-based real-time perception or control in robotics, autonomous vehicles, or automation systems. We focus on CNNs and, in contrast to previous works, our approach accommodates a wide variety of layers typically used in CNNs, including 1-D and 2-D convolutional layers, maximum and average pooling layers, strided and dilated convolutions, and zero padding. Our approach also extends naturally beyond CNNs, since any layer that is incrementally dissipative can be incorporated.
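One way to see why a parameterization built on per-layer spectral bounds can be less expressive is a toy example. The sketch below is not LipKernel and does not implement the LMI parameterization; the two-layer ReLU network, its weights `W1` and `W2`, and all function names are hypothetical and chosen only for illustration. It compares the product of layer-wise spectral norms with an empirical estimate of the Lipschitz constant of the end-to-end map.

```python
import numpy as np


def relu(x):
    """Elementwise ReLU, which is 1-Lipschitz."""
    return np.maximum(x, 0.0)


# Hypothetical toy weights (assumption, not taken from the paper):
# the first layer stretches one coordinate and shrinks the other,
# and the second layer undoes that scaling.
W1 = np.diag([2.0, 0.5])
W2 = np.diag([0.5, 2.0])


def net(x):
    """Two-layer ReLU network y = W2 relu(W1 x)."""
    return W2 @ relu(W1 @ x)


def spectral_norm(W):
    """Largest singular value of W."""
    return np.linalg.norm(W, 2)


# Upper bound on the Lipschitz constant from per-layer spectral norms.
spectral_product_bound = spectral_norm(W1) * spectral_norm(W2)

# Empirical lower estimate of the Lipschitz constant from random input pairs.
rng = np.random.default_rng(0)
xs = rng.normal(size=(1000, 2))
ys = rng.normal(size=(1000, 2))
ratios = [
    np.linalg.norm(net(x) - net(y)) / np.linalg.norm(x - y)
    for x, y in zip(xs, ys)
]
empirical_lipschitz = max(ratios)

print("product of spectral norms:", spectral_product_bound)
print("largest observed ratio:   ", empirical_lipschitz)
```

In this toy case the network reduces to an elementwise ReLU, so it is 1-Lipschitz, yet the product of spectral norms is 4. A scheme that forces every layer to have spectral norm at most 1 would therefore exclude this network even though it satisfies the end-to-end bound, which is the kind of conservatism a less restrictive layer-wise condition can avoid.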
