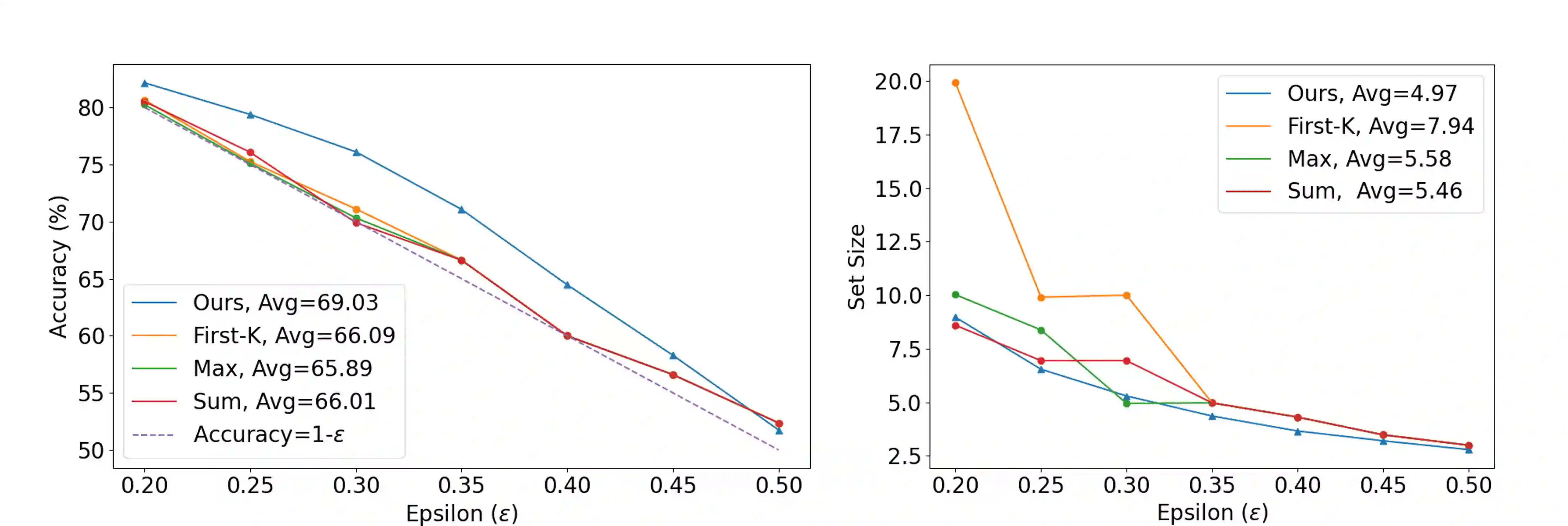

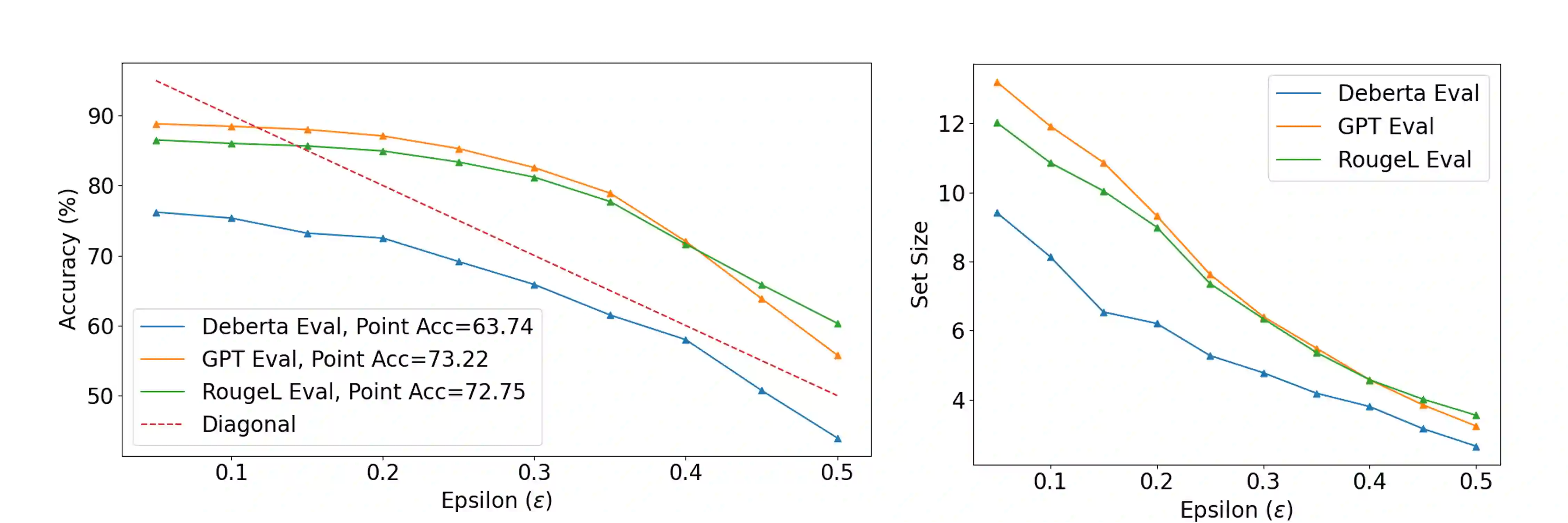

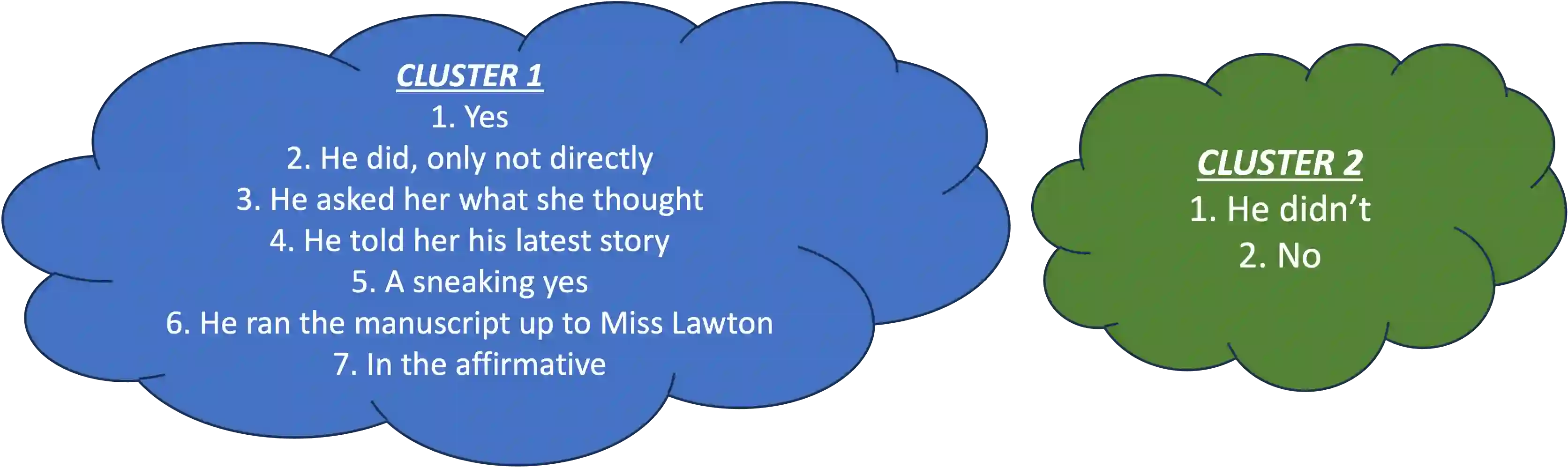

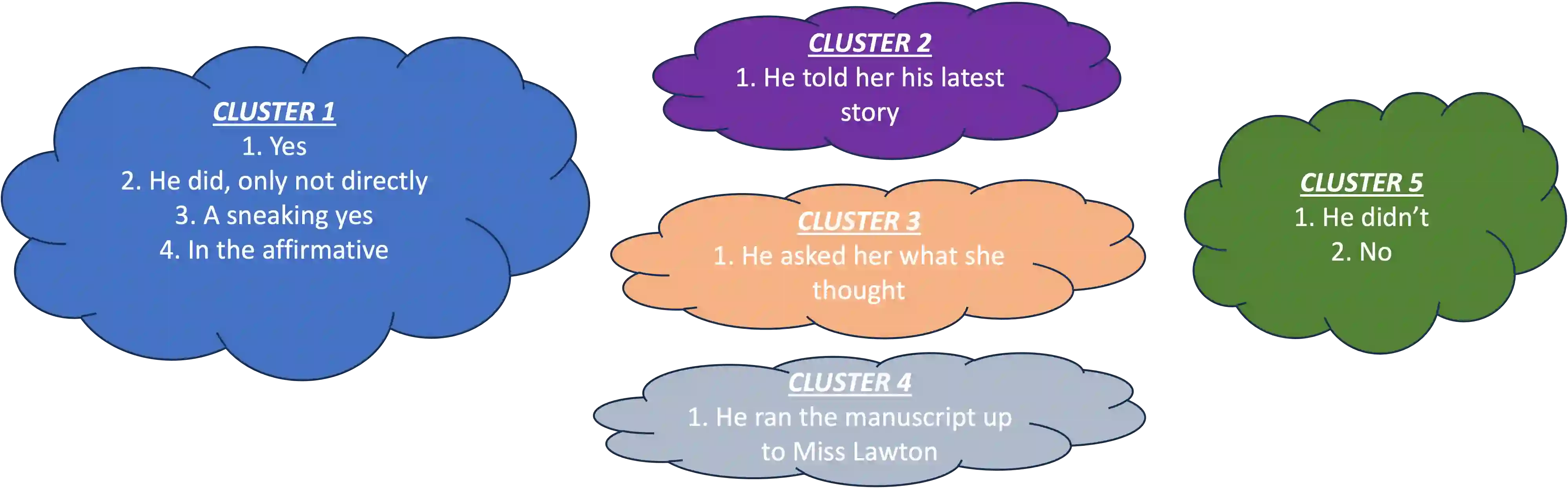

In this paper, we present a dynamic semantic clustering approach inspired by the Chinese Restaurant Process, aimed at addressing uncertainty in the inference of Large Language Models (LLMs). We quantify the uncertainty of an LLM on a given query by calculating the entropy of the generated semantic clusters. Further, we propose using the (negative) likelihood of these clusters as the (non)conformity score within the Conformal Prediction framework. This allows the model to predict a set of responses instead of a single output and thereby account for the uncertainty in its predictions. We demonstrate the effectiveness of our uncertainty quantification (UQ) technique on two well-known question answering benchmarks, CoQA and TriviaQA, using two LLMs, Llama2 and Mistral. Our approach achieves state-of-the-art (SOTA) performance in UQ, as assessed by metrics such as AUROC, AUARC, and AURAC. Compared with the existing SOTA conformal prediction baseline, the proposed conformal predictor also produces smaller prediction sets while maintaining the same probabilistic guarantee of including the correct response.
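The two components summarized above, entropy over semantic clusters and conformal prediction sets scored by negative cluster likelihood, can be sketched as follows. This is a minimal, hypothetical Python illustration, not the authors' implementation. It rests on several assumptions. The greedy "sit at the first matching table, else open a new one" rule is a deterministic simplification of CRP-style assignment. Cluster likelihood is taken to be the renormalized sum of member sequence probabilities. `same_answer` is a toy string-matching stand-in for a real semantic-equivalence check (for example, an NLI model). All function names and input numbers are illustrative.

```python
import math
from typing import Callable, List, Sequence


def cluster_responses(
    responses: Sequence[str],
    equivalent: Callable[[str, str], bool],
) -> List[List[int]]:
    """Greedy CRP-style seating (simplified, deterministic): each response
    joins the first cluster ("table") whose first member it is judged
    equivalent to; otherwise it opens a new cluster. Returns index lists."""
    clusters: List[List[int]] = []
    for i, response in enumerate(responses):
        for members in clusters:
            if equivalent(responses[members[0]], response):
                members.append(i)
                break
        else:  # no existing cluster matched, so open a new one
            clusters.append([i])
    return clusters


def cluster_probs(clusters: List[List[int]], seq_probs: Sequence[float]) -> List[float]:
    """Assumed cluster likelihood: summed sequence probability of the members,
    renormalized so the clusters form a distribution."""
    mass = [sum(seq_probs[i] for i in members) for members in clusters]
    total = sum(mass)
    return [m / total for m in mass]


def semantic_entropy(probs: Sequence[float]) -> float:
    """Entropy (in nats) over semantic clusters; higher means more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def conformal_threshold(cal_scores: Sequence[float], alpha: float) -> float:
    """Split-conformal threshold: the ceil((n + 1)(1 - alpha))-th smallest
    calibration nonconformity score."""
    n = len(cal_scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too few calibration points: keep every cluster
    return sorted(cal_scores)[k - 1]


def prediction_set(
    responses: Sequence[str],
    clusters: List[List[int]],
    probs: Sequence[float],
    q_hat: float,
) -> List[str]:
    """Return one representative per cluster whose nonconformity score,
    the negative cluster likelihood -p, is at most q_hat."""
    return [responses[members[0]] for members, p in zip(clusters, probs) if -p <= q_hat]


def same_answer(a: str, b: str) -> bool:
    """Toy equivalence check (hypothetical stand-in for an NLI-based test)."""
    return a.strip().lower().rstrip(".") == b.strip().lower().rstrip(".")


# Usage with made-up numbers: four sampled answers and their sequence probabilities.
responses = ["Paris", "paris.", "Lyon", "Paris"]
seq_probs = [0.4, 0.2, 0.3, 0.1]
clusters = cluster_responses(responses, same_answer)  # [[0, 1, 3], [2]]
probs = cluster_probs(clusters, seq_probs)
print("entropy:", semantic_entropy(probs))

# Calibration scores: -p(cluster containing the correct answer) on held-out queries.
cal_scores = [-0.9, -0.8, -0.7, -0.6, -0.5, -0.4, -0.2]
q_hat = conformal_threshold(cal_scores, alpha=0.25)
pred_set = prediction_set(responses, clusters, probs, q_hat)
print("prediction set:", pred_set)  # ['Paris']
```

Split conformal prediction requires calibration and test queries to be exchangeable. Under that assumption, thresholding at the ceil((n + 1)(1 - α))-th smallest calibration score includes the correct answer's cluster with probability at least 1 - α. A more concentrated cluster distribution tends to give smaller sets at the same α.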

翻译:本文提出一种受中式餐厅过程启发的动态语义聚类方法,旨在解决大语言模型推理中的不确定性问题。我们通过计算生成语义簇的熵值来量化LLM在给定查询上的不确定性。进一步,我们提出利用这些语义簇的(负)似然作为共形预测框架中的(非)适应性评分,使模型能够预测一组响应而非单一输出,从而在其预测中纳入不确定性考量。我们在两个知名问答基准数据集COQA和TriviaQA上,使用Llama2和Mistral两种LLM验证了所提不确定性量化技术的有效性。经AUROC、AUARC和AURAC等指标评估,该方法在UQ任务中达到了最先进的性能表现。与现有SOTA共形预测基线相比,所提出的共形预测器在保持相同正确响应包含概率保证的前提下,能生成更小的预测集合。