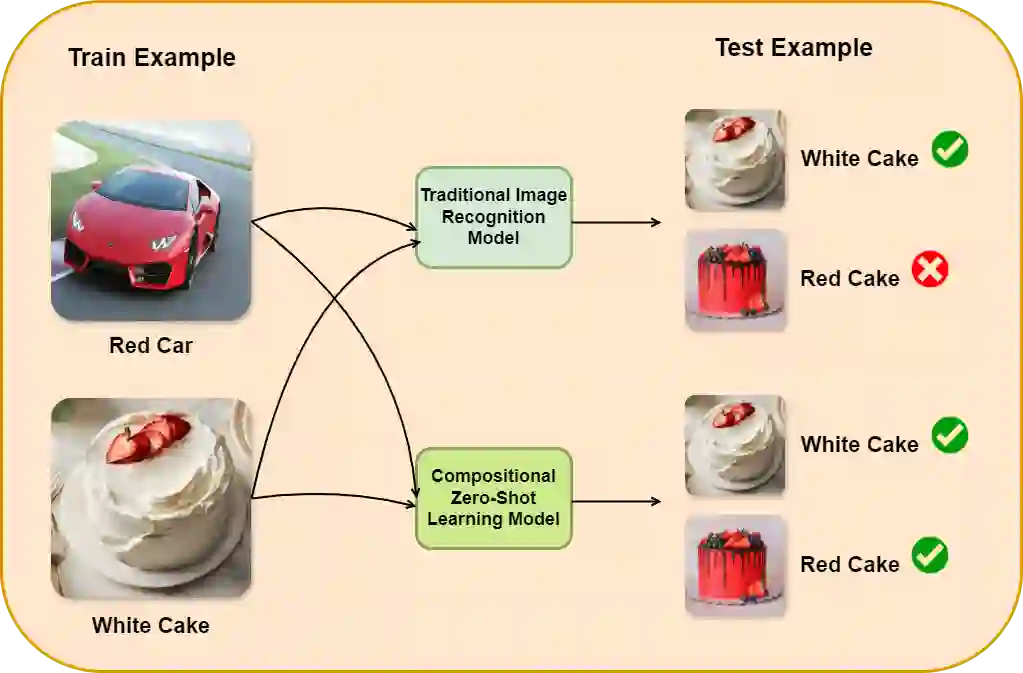

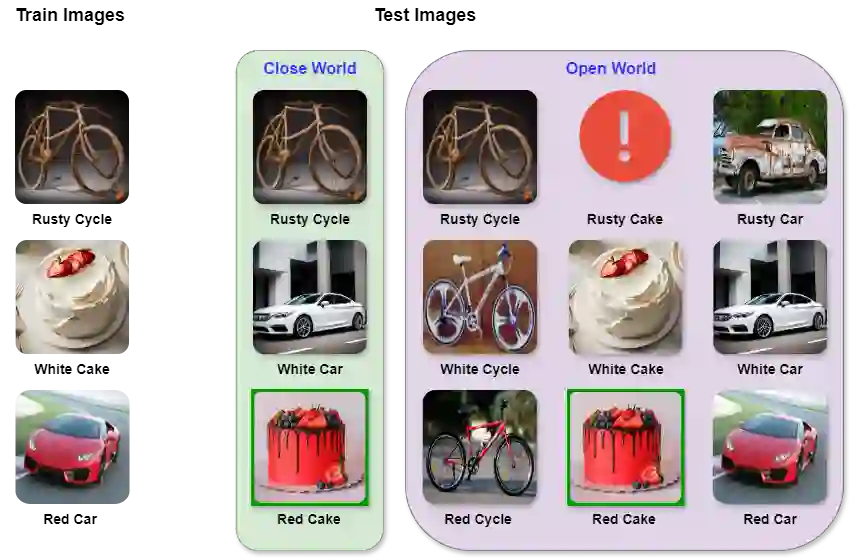

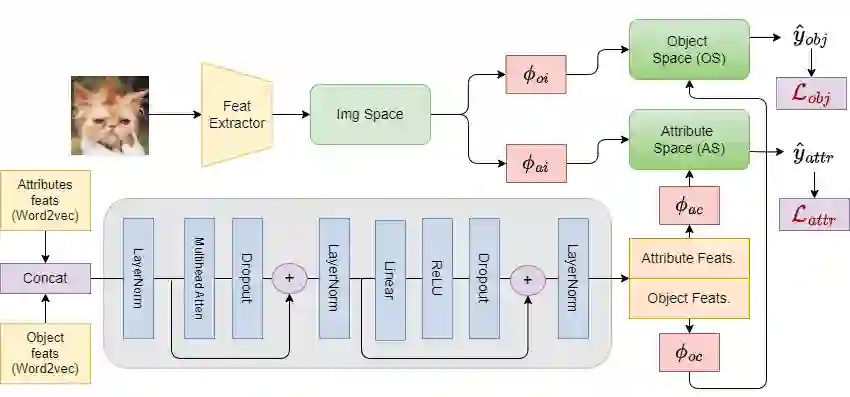

Compositional Zero-Shot Learning (CZSL) aims to recognize compositions of attribute-object pairs that were not seen during training, which makes it a challenging task. In this study we explore Open World Compositional Zero-Shot Learning (OW-CZSL), where the test space encompasses all possible combinations of attributes and objects. Our approach applies a self-attention mechanism between attributes and objects, which helps the model identify the relationships between them and generalize better from seen to unseen compositions. At inference, predictions are generated from the similarity between the self-attended textual and visual features. Because attributes and objects are paired without restriction, the test space may contain implausible attribute-object combinations. To mitigate this issue, we leverage external knowledge from ConceptNet to restrict the test space to realistic compositions. Our proposed model, Attention-based Simple Primitives (ASP), achieves results competitive with the state of the art.
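The inference pipeline described above (self-attention over an attribute-object pair, similarity against a visual feature, and a restricted test space) can be illustrated with a minimal NumPy sketch. This is not the ASP implementation: the single attention head, the mean pooling of the two attended tokens, the random projection weights, and the hand-written boolean `feasible` mask (a hypothetical stand-in for ConceptNet-derived plausibility) are all assumptions made for illustration, and only the text side is attended here.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attend(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a (n_tokens, d) array."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]))
    return weights @ v

def l2_normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def score_compositions(image_feat, attr_emb, obj_emb, w_q, w_k, w_v, feasible=None):
    """Cosine similarity between one image feature and every attribute-object pair.

    Pairs marked infeasible receive -inf so they can never be predicted.
    """
    n_attr, n_obj = len(attr_emb), len(obj_emb)
    scores = np.full((n_attr, n_obj), -np.inf)
    image_feat = l2_normalize(image_feat)
    for a in range(n_attr):
        for o in range(n_obj):
            if feasible is not None and not feasible[a, o]:
                continue
            pair = np.stack([attr_emb[a], obj_emb[o]])  # two tokens: attribute, object
            # Assumption: mean-pool the attended tokens into one composition vector.
            text_feat = self_attend(pair, w_q, w_k, w_v).mean(axis=0)
            scores[a, o] = l2_normalize(text_feat) @ image_feat
    return scores

# Toy data: 3 attributes, 2 objects, feature dimension 8 (all values are random).
rng = np.random.default_rng(0)
d = 8
attr_emb = rng.normal(size=(3, d))
obj_emb = rng.normal(size=(2, d))
image_feat = rng.normal(size=d)
w_q, w_k, w_v = (rng.normal(size=(d, d)) for _ in range(3))

# Hypothetical feasibility mask: pair (attribute 2, object 0) is treated as implausible.
feasible = np.ones((3, 2), dtype=bool)
feasible[2, 0] = False

scores = score_compositions(image_feat, attr_emb, obj_emb, w_q, w_k, w_v, feasible)
best = np.unravel_index(np.argmax(scores), scores.shape)  # (attribute index, object index)
```

Masking with `-inf` before the `argmax` is one simple way to restrict the open-world test space; a soft penalty on the score would be an alternative design when the plausibility signal is noisy.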
