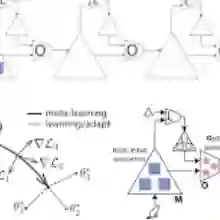

Current meta-learning methods are constrained to narrow task distributions with fixed feature and label spaces, limiting applicability. Moreover, the current meta-learning literature uses key terms like "universal" and "general-purpose" inconsistently and lacks precise definitions, hindering comparability. We introduce a theoretical framework for meta-learning which formally defines practical universality and introduces a distinction between algorithm-explicit and algorithm-implicit learning, providing a principled vocabulary for reasoning about universal meta-learning methods. Guided by this framework, we present TAIL, a transformer-based algorithm-implicit meta-learner that functions across tasks with varying domains, modalities, and label configurations. TAIL features three innovations over prior transformer-based meta-learners: random projections for cross-modal feature encoding, random injection label embeddings that extrapolate to larger label spaces, and efficient inline query processing. TAIL achieves state-of-the-art performance on standard few-shot benchmarks while generalizing to unseen domains. Unlike other meta-learning methods, it also generalizes to unseen modalities, solving text classification tasks despite training exclusively on images, handles tasks with up to 20$\times$ more classes than seen during training, and provides orders-of-magnitude computational savings over prior transformer-based approaches.
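To make the first of the three listed innovations concrete, the following is a minimal sketch of how a fixed random projection can map features of different dimensionality into one shared width. It is an illustration under stated assumptions, not TAIL's actual encoder: the function name `random_projection`, the Gaussian matrix, the `1/sqrt(out_dim)` scaling, and the input sizes (3072 for flattened images, 768 for text embeddings, 256 for the shared width) are all hypothetical choices that the abstract does not specify.

```python
import numpy as np


def random_projection(features, out_dim, seed=0):
    """Map (n, d_in) features to (n, out_dim) with a fixed Gaussian matrix.

    Hypothetical helper for illustration; not taken from the paper.
    """
    features = np.asarray(features, dtype=np.float64)
    d_in = features.shape[1]
    rng = np.random.default_rng(seed)
    # Entries ~ N(0, 1/out_dim), so squared norms are preserved in expectation.
    proj = rng.standard_normal((d_in, out_dim)) / np.sqrt(out_dim)
    return features @ proj


# Assumed input sizes: flattened 32x32x3 images and 768-d text embeddings.
image_feats = np.random.default_rng(1).standard_normal((5, 3072))
text_feats = np.random.default_rng(2).standard_normal((5, 768))

# Both modalities end up with the same width and could feed one sequence model.
img_tokens = random_projection(image_feats, out_dim=256)
txt_tokens = random_projection(text_feats, out_dim=256)
```

Because the projection matrix is random rather than learned, nothing in this step is tied to the modality seen during training, which is one plausible reading of why such an encoding could transfer from images to text.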
