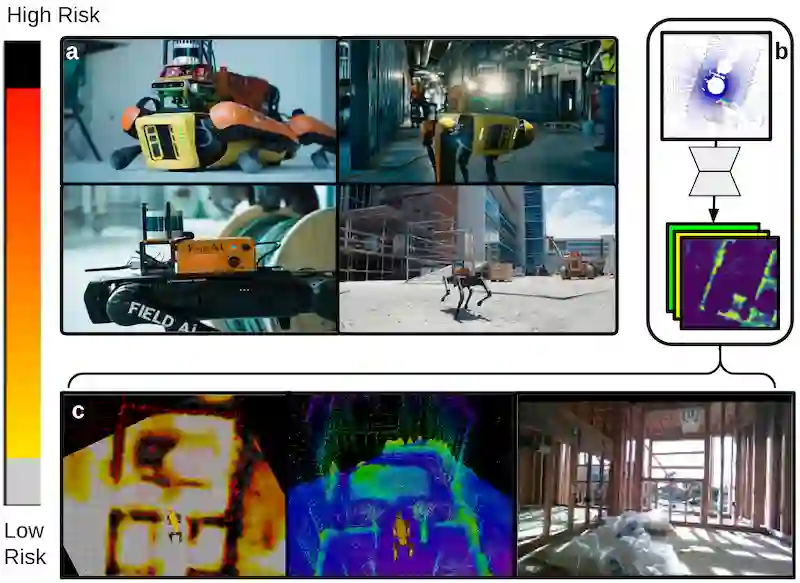

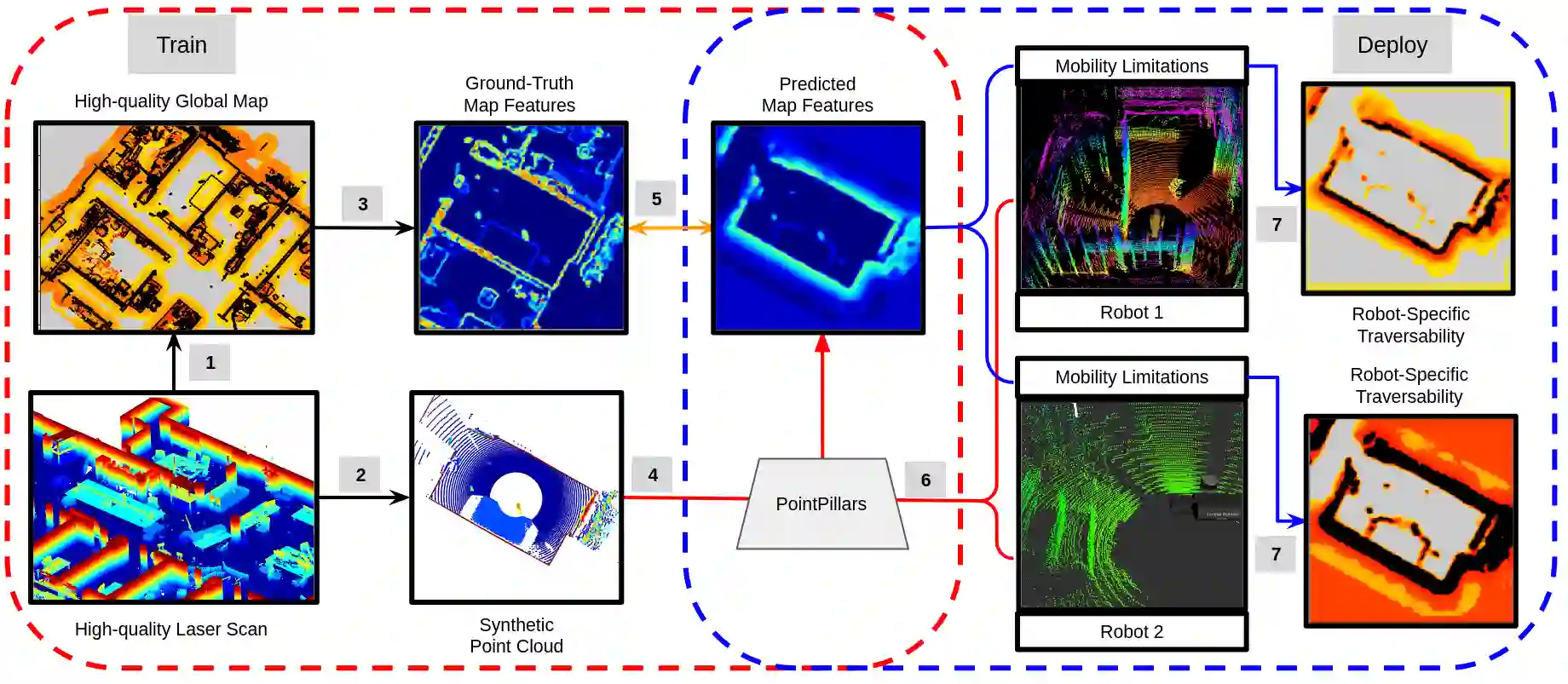

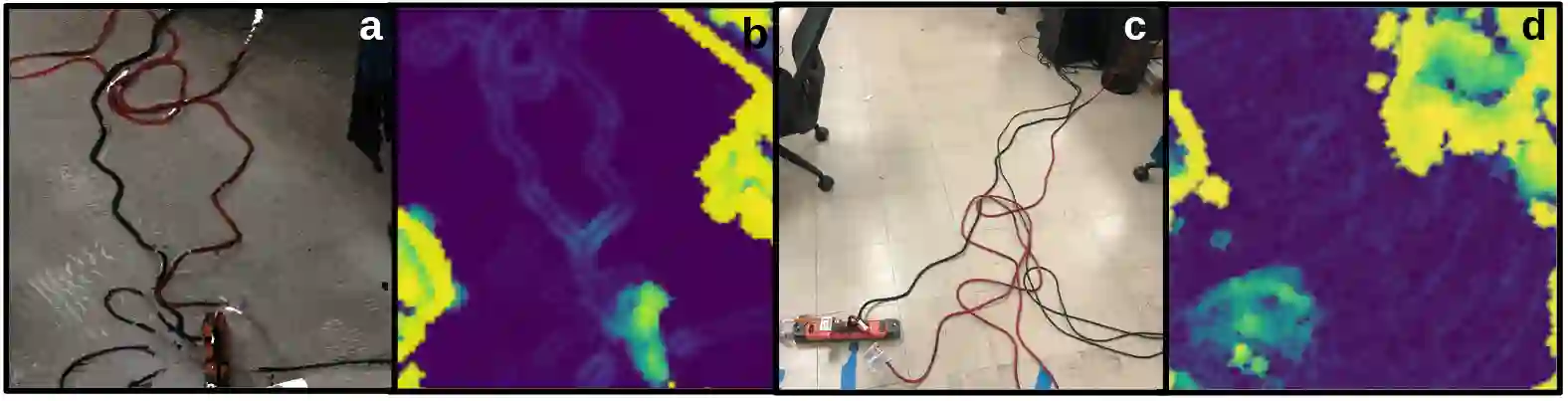

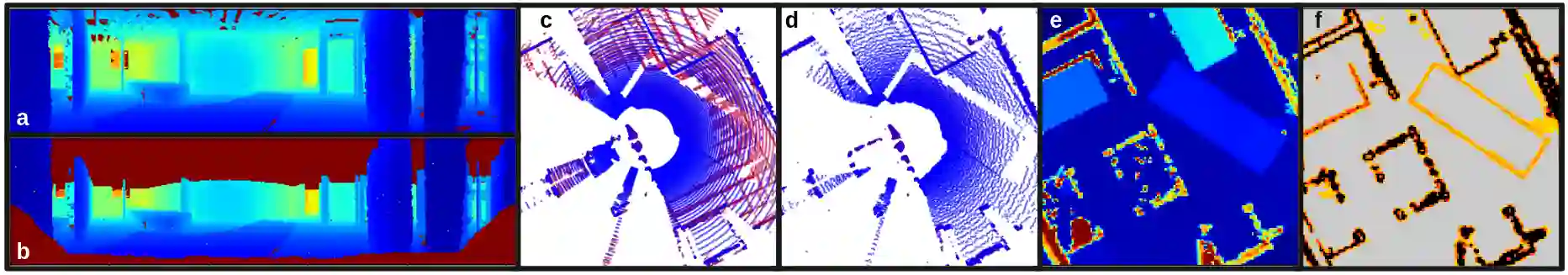

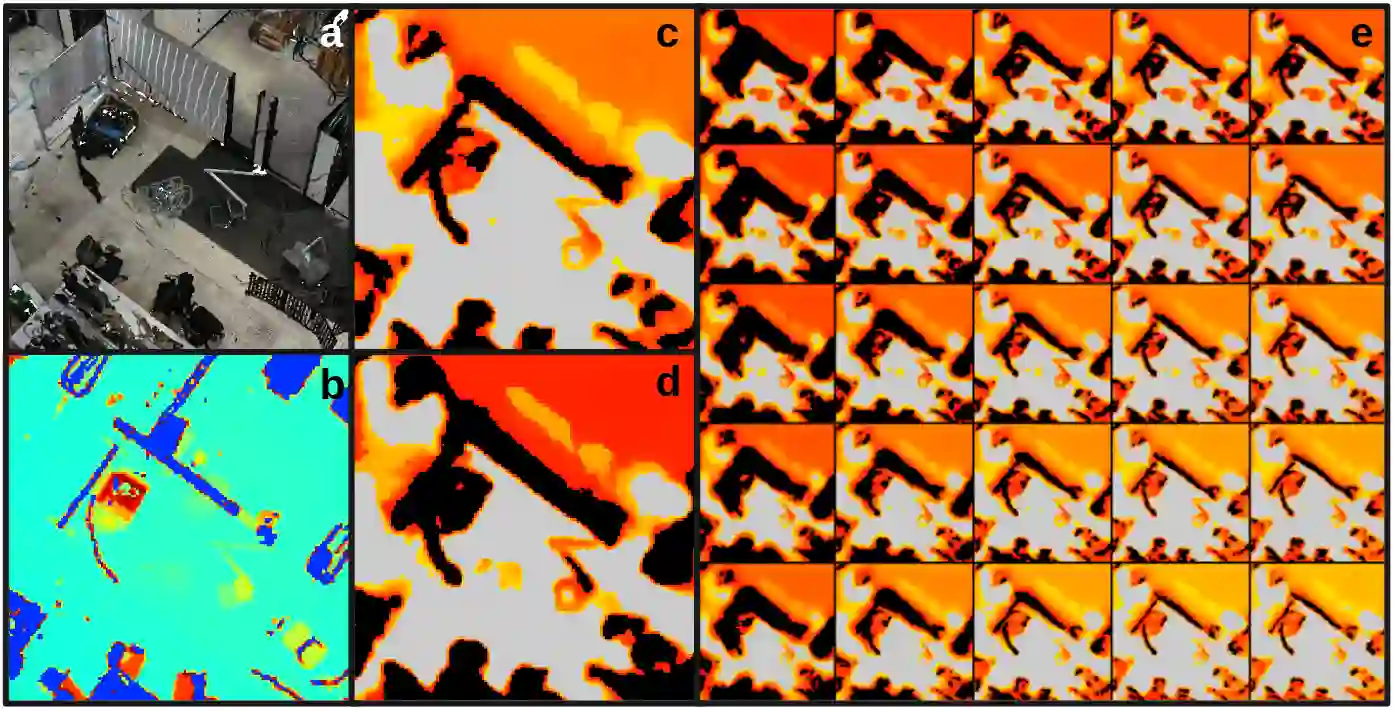

Traversability estimation in rugged, unstructured environments remains a challenging problem in field robotics. Often, the need for precise and accurate traversability estimates is in direct opposition to the limited sensing and compute capabilities of affordable, small-scale mobile robots. To address this issue, we present a novel method to learn [u]ncertainty-aware [n]avigation features from high-fidelity scans of [real]-world environments (UNRealNet). This network can be deployed on-robot to predict these high-fidelity features using input from lower-quality sensors. UNRealNet predicts dense, metric-space features directly from single-frame lidar scans, thus reducing the effects of occlusion and odometry error. Our approach is label-free and produces robot-agnostic traversability estimates. Additionally, we can leverage UNRealNet's predictive uncertainty both to produce risk-aware traversability estimates and to refine our feature predictions over time. We find that our method outperforms traditional local mapping and inpainting baselines by up to 40%, and we demonstrate its efficacy on multiple legged platforms.
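The abstract does not say how predictive uncertainty becomes a risk-aware estimate or a refined prediction. The sketch below shows one common pattern and is not UNRealNet's published method. It assumes the network outputs an independent Gaussian mean and variance for each grid cell. It scores risk with an upper-confidence cost (`mean + k * std`) and fuses predictions from successive scans with inverse-variance weighting. The function names, the parameter `k`, and the example numbers are all hypothetical.

```python
import numpy as np


def risk_aware_cost(mean: np.ndarray, std: np.ndarray, k: float = 1.0) -> np.ndarray:
    """Per-cell upper-confidence cost: mean + k * std (hypothetical risk measure).

    Larger k penalizes cells where the predicted feature is uncertain,
    giving a more conservative (risk-averse) traversability estimate.
    """
    return mean + k * std


def fuse(mean_a, var_a, mean_b, var_b):
    """Inverse-variance (precision-weighted) fusion of two per-cell Gaussian estimates."""
    prec_a = 1.0 / var_a
    prec_b = 1.0 / var_b
    var = 1.0 / (prec_a + prec_b)
    mean = var * (prec_a * mean_a + prec_b * mean_b)
    return mean, var


def refine_over_time(frames):
    """Fold a sequence of (mean, var) predictions for the same grid cells into one estimate."""
    mean, var = frames[0]
    for next_mean, next_var in frames[1:]:
        mean, var = fuse(mean, var, next_mean, next_var)
    return mean, var


# Hypothetical predictions of one feature (e.g. terrain height, in metres) for two cells,
# from two consecutive scans assumed to be already registered to the same grid.
frames = [
    (np.array([0.2, 0.5]), np.array([0.01, 0.25])),
    (np.array([0.3, 0.1]), np.array([0.04, 0.25])),
]
mean, var = refine_over_time(frames)
cost = risk_aware_cost(mean, np.sqrt(var), k=2.0)
print(mean, var, cost)
```

Under these assumptions, the fused variance never exceeds the variance of either input. Repeated observations of the same cell therefore tighten the estimate, and `k` sets how strongly the planner avoids cells where the prediction remains uncertain.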
