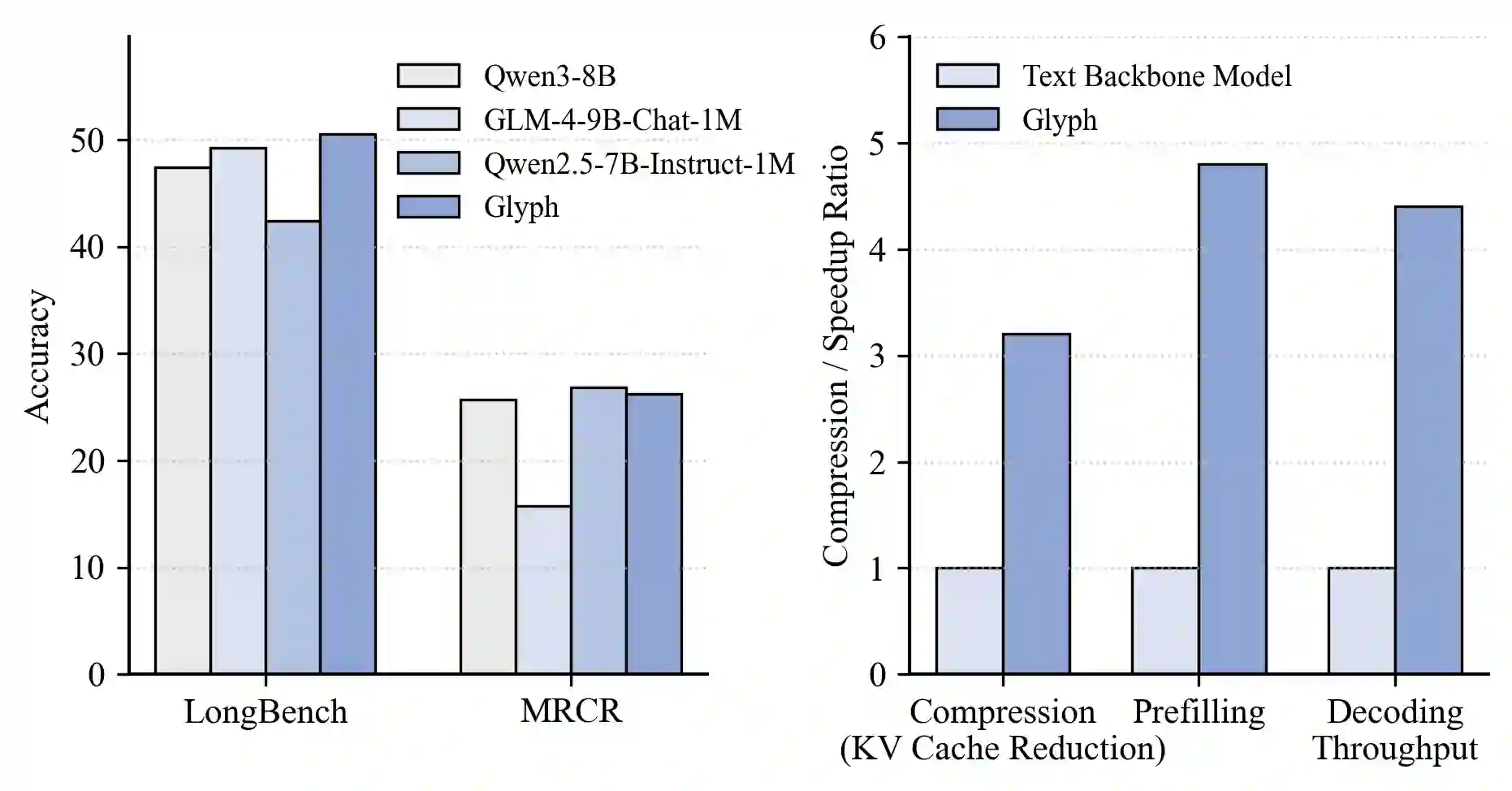

Large language models (LLMs) increasingly rely on long-context modeling for tasks such as document understanding, code analysis, and multi-step reasoning. However, scaling context windows to the million-token level brings prohibitive computational and memory costs, limiting the practicality of long-context LLMs. In this work, we take a different perspective, visual context scaling, to tackle this challenge. Instead of extending token-based sequences, we propose Glyph, a framework that renders long texts into images and processes them with vision-language models (VLMs). This approach substantially compresses textual input while preserving semantic information, and we further design an LLM-driven genetic search to identify visual rendering configurations that best balance accuracy and compression. Through extensive experiments, we demonstrate that our method achieves 3-4x token compression while maintaining accuracy comparable to leading LLMs such as Qwen3-8B on various long-context benchmarks. This compression also leads to around 4x faster prefilling and decoding, and approximately 2x faster SFT training. Furthermore, under extreme compression, a 128K-context VLM could scale to handle 1M-token-level text tasks. In addition, the rendered text data benefits real-world multimodal tasks, such as document understanding. Our code and model are released at https://github.com/thu-coai/Glyph.
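The compression figures above can be read as simple arithmetic on token budgets. The sketch below is illustrative only and is not taken from the Glyph codebase: the function names are hypothetical, and "128K" is assumed to mean 128,000 tokens. It shows how much text a fixed VLM window could cover at a given compression ratio, and what ratio the 1M-token scenario would imply.

```python
def compression_ratio(text_tokens: int, visual_tokens: int) -> float:
    """Text tokens represented per visual token (hypothetical helper)."""
    if visual_tokens <= 0:
        raise ValueError("visual_tokens must be positive")
    return text_tokens / visual_tokens


def effective_text_context(vlm_context: int, ratio: float) -> int:
    """Text tokens that fit in a VLM window at a given compression ratio."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return int(vlm_context * ratio)


if __name__ == "__main__":
    window = 128_000  # assumption: "128K" taken as 128,000 tokens

    # The 3-4x range reported in the abstract.
    for ratio in (3.0, 4.0):
        covered = effective_text_context(window, ratio)
        print(f"{ratio:.0f}x compression -> about {covered:,} text tokens")

    # Ratio implied by fitting 1M text tokens into the same window.
    # This is derived arithmetic, not a figure reported in the abstract.
    implied = compression_ratio(1_000_000, window)
    print(f"1M tokens in a 128K window implies about {implied:.1f}x")
```

Under these assumptions, the 3-4x range corresponds to roughly 384K to 512K text tokens in a 128K window, so the 1M-token case requires a noticeably more aggressive rendering configuration than the default range.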