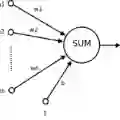

Neural networks are often regarded as "black boxes" because of their complex functions and numerous parameters, which poses significant challenges for interpretability. This study addresses these challenges by introducing methods to improve the understanding of neural networks, focusing specifically on models with a single hidden layer. We establish a theoretical framework by demonstrating that the neural network estimator can be interpreted as a nonparametric regression model. Building on this foundation, we propose statistical tests to assess the significance of input neurons and introduce dimensionality-reduction algorithms, including clustering and principal component analysis (PCA), to simplify the network and improve both its interpretability and its accuracy. The key contributions of this study are the development of a bootstrapping technique for evaluating artificial neural network (ANN) performance, the application of statistical tests and logistic regression to analyze hidden neurons, and the assessment of neuron efficiency. We also investigate the behavior of individual hidden neurons in relation to output neurons, and we apply these methodologies to the IDC and Iris datasets to validate their practical utility. This research advances the field of Explainable Artificial Intelligence by presenting robust statistical frameworks for interpreting neural networks, thereby facilitating a clearer understanding of the relationships between inputs, outputs, and individual network components.
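
The bootstrapping technique for evaluating ANN performance is not specified in detail in this summary. As a minimal sketch, assuming a standard percentile bootstrap over a held-out test set (not necessarily the procedure used in this study), one can resample per-example correctness indicators to obtain a confidence interval for classification accuracy. The function `bootstrap_accuracy_ci` and its parameters are hypothetical names chosen for illustration.

```python
import random


def bootstrap_accuracy_ci(y_true, y_pred, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for classification accuracy.

    Hypothetical helper: a generic percentile bootstrap, assumed here for
    illustration rather than taken from the study.
    """
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    rng = random.Random(seed)  # seeded so the interval is reproducible
    # Per-example correctness indicator: 1 if the prediction matches the label.
    correct = [int(t == p) for t, p in zip(y_true, y_pred)]
    n = len(correct)
    accuracy = sum(correct) / n
    stats = []
    for _ in range(n_boot):
        # Resample test examples with replacement and recompute accuracy.
        resample = [correct[rng.randrange(n)] for _ in range(n)]
        stats.append(sum(resample) / n)
    stats.sort()
    # Empirical alpha/2 and 1 - alpha/2 quantiles of the bootstrap distribution.
    lo = stats[int(round((alpha / 2) * (n_boot - 1)))]
    hi = stats[int(round((1 - alpha / 2) * (n_boot - 1)))]
    return accuracy, (lo, hi)


if __name__ == "__main__":
    # Toy labels and predictions for illustration only (not data from the study).
    labels = [0, 1, 1, 0, 2, 2, 1, 0]
    preds = [0, 1, 0, 0, 2, 1, 1, 0]
    acc, (lo, hi) = bootstrap_accuracy_ci(labels, preds)
    print(f"accuracy={acc:.3f}, 95% CI=({lo:.3f}, {hi:.3f})")
```

With only a handful of test examples the interval is wide; on a real test split (for example, one drawn from Iris) the same routine would be applied to the trained network's predictions.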
