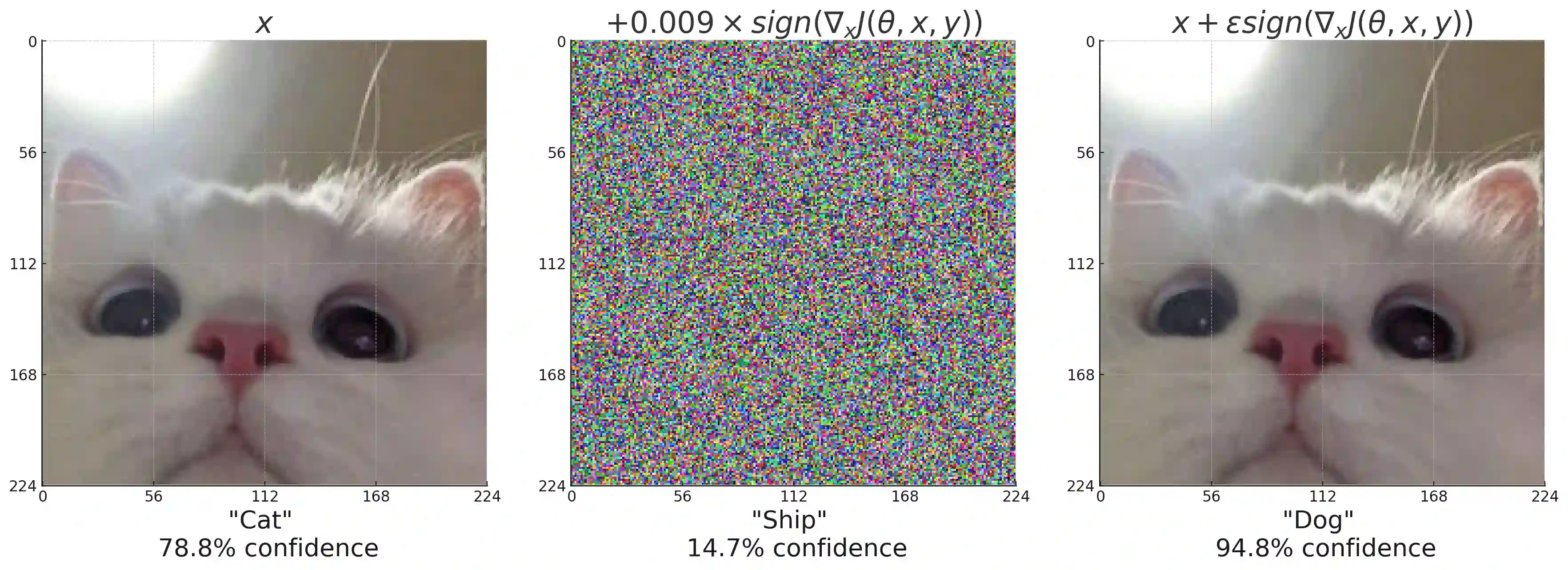

Deep learning has transformed AI applications but faces critical security challenges, including adversarial attacks, data poisoning, model theft, and privacy leakage. This survey examines these vulnerabilities, detailing their mechanisms and their impact on model integrity and confidentiality. It explores practical attack implementations, including adversarial examples, label flipping, and backdoor attacks, alongside defenses such as adversarial training, differential privacy, and federated learning, and highlights the strengths and limitations of each. Advanced methods such as contrastive and self-supervised learning are presented as ways to enhance robustness. The survey concludes with future directions, emphasizing automated defenses, zero-trust architectures, and the security challenges of large AI models. Balancing performance and security is essential for developing reliable deep learning systems.
