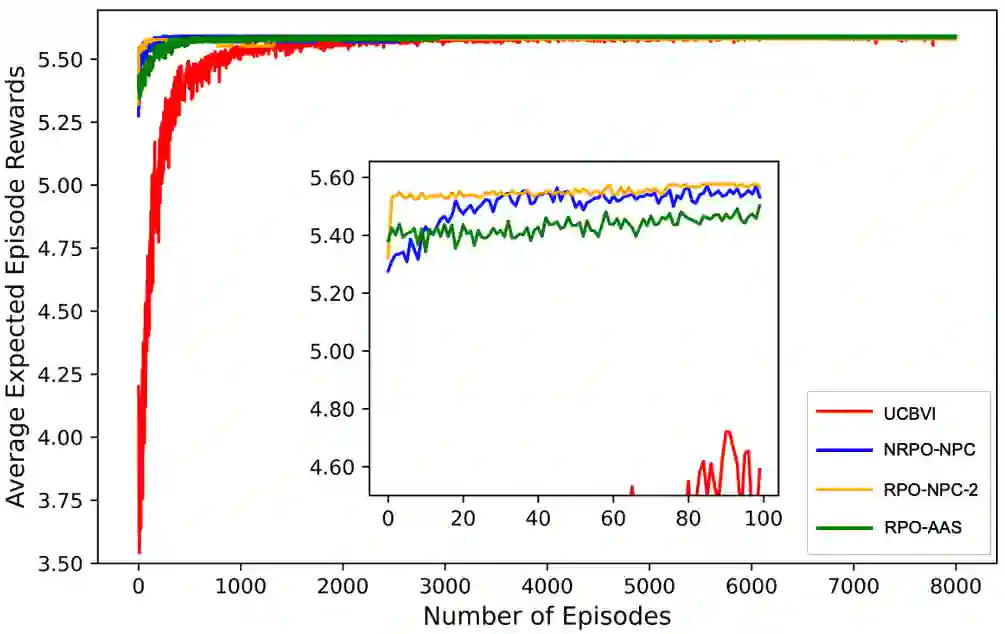

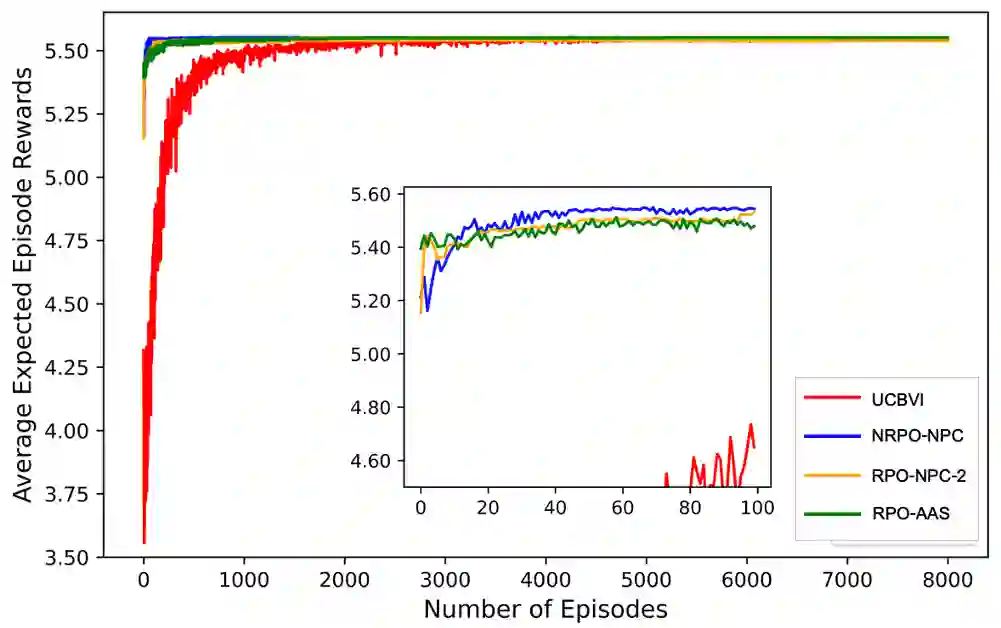

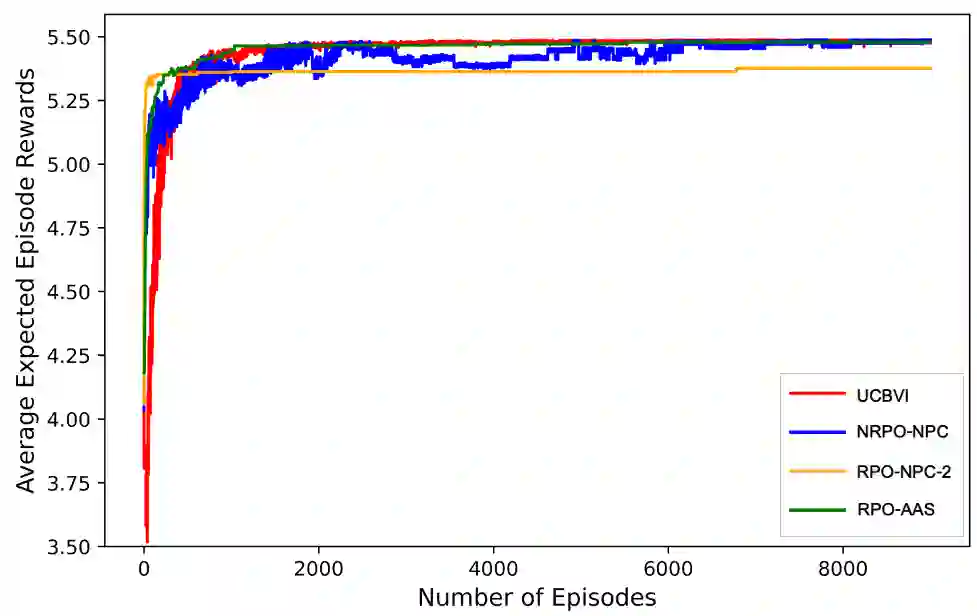

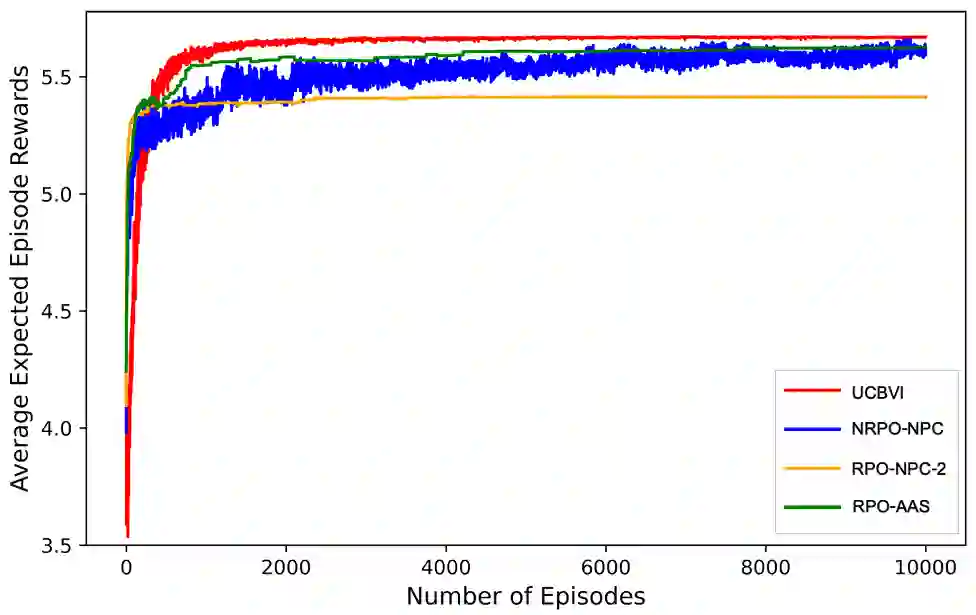

We study an online robust Markov Decision Process (MDP) in which the underlying transition kernel is unknown but finitely many candidate prototypes of it are available. We maintain an ambiguity set over these prototypes that is updated adaptively as data arrive, and we propose an algorithm that efficiently identifies the true transition kernel while guaranteeing the performance of the corresponding robust policy. Specifically, we establish a sublinear regret bound for the resulting optimal robust policy. We also provide an early stopping mechanism and a worst-case performance bound on the value function. In numerical experiments, our method outperforms existing approaches, particularly in the early stage when data are limited. This work contributes to the robust MDP literature by combining prior information about the underlying transition probabilities with online learning, offering both theoretical insights and practical algorithms for improved decision-making under uncertainty.
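To make the setting concrete, the following is a minimal illustrative sketch, not the algorithm proposed in this work. It assumes a discounted tabular MDP, a log-likelihood threshold rule for pruning prototypes, and an (s, a)-rectangular worst case over the surviving prototypes. All names (`robust_value_iteration`, `update_ambiguity_set`, `threshold`) and the toy problem sizes are hypothetical choices made for illustration.

```python
import numpy as np

def robust_value_iteration(kernels, rewards, gamma=0.9, tol=1e-8, max_iter=10_000):
    """Robust value iteration over a finite set of kernels.

    kernels: array of shape (K, S, A, S); rewards: array of shape (S, A).
    Assumption: the worst case is taken per (s, a) pair over the K kernels.
    """
    v = np.zeros(rewards.shape[0])
    for _ in range(max_iter):
        # q[k, s, a] = r(s, a) + gamma * sum_s' P_k(s' | s, a) * v(s')
        q = rewards[None] + gamma * (kernels @ v)
        v_new = q.min(axis=0).max(axis=1)
        converged = np.max(np.abs(v_new - v)) < tol
        v = v_new
        if converged:
            break
    q = rewards[None] + gamma * (kernels @ v)
    return v, q.min(axis=0).argmax(axis=1)

def update_ambiguity_set(active, log_lik, kernels, s, a, s_next, threshold=5.0):
    """Score one observed transition and prune unlikely prototypes.

    Assumption: a prototype is dropped once its log-likelihood falls more
    than `threshold` below the best prototype that is still active.
    """
    log_lik = log_lik + np.log(np.maximum(kernels[:, s, a, s_next], 1e-300))
    best = log_lik[active].max()
    return active & (log_lik >= best - threshold), log_lik

# Toy problem: 3 random prototypes, one of which generates the data.
rng = np.random.default_rng(0)
K, S, A = 3, 4, 2
kernels = rng.dirichlet(np.ones(S), size=(K, S, A))  # shape (K, S, A, S)
rewards = rng.uniform(size=(S, A))
true_k = 1

active = np.ones(K, dtype=bool)
log_lik = np.zeros(K)
s = 0
for t in range(500):
    # Act with the robust policy for the current ambiguity set.
    _, policy = robust_value_iteration(kernels[active], rewards)
    a = policy[s]
    s_next = rng.choice(S, p=kernels[true_k, s, a])
    active, log_lik = update_ambiguity_set(active, log_lik, kernels, s, a, s_next)
    s = s_next
    if active.sum() == 1:  # early stop: a single prototype remains
        break
```

In this sketch the ambiguity set can only shrink, so the robust value computed on it is non-decreasing over time, and the loop stops early once a single prototype survives. The threshold controls how cautiously prototypes are discarded: a larger value lowers the risk of pruning the true kernel at the cost of slower identification.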
