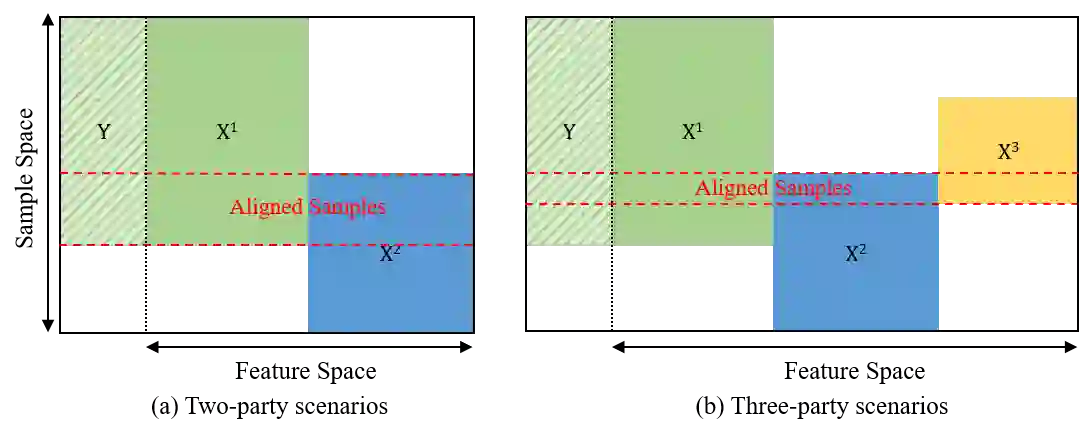

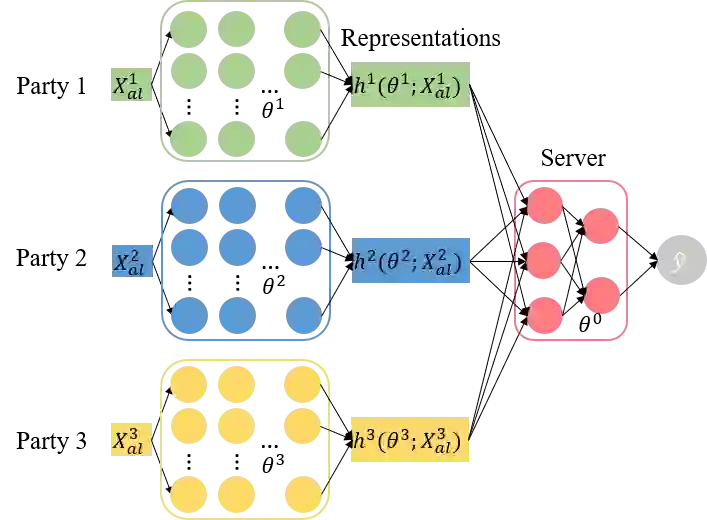

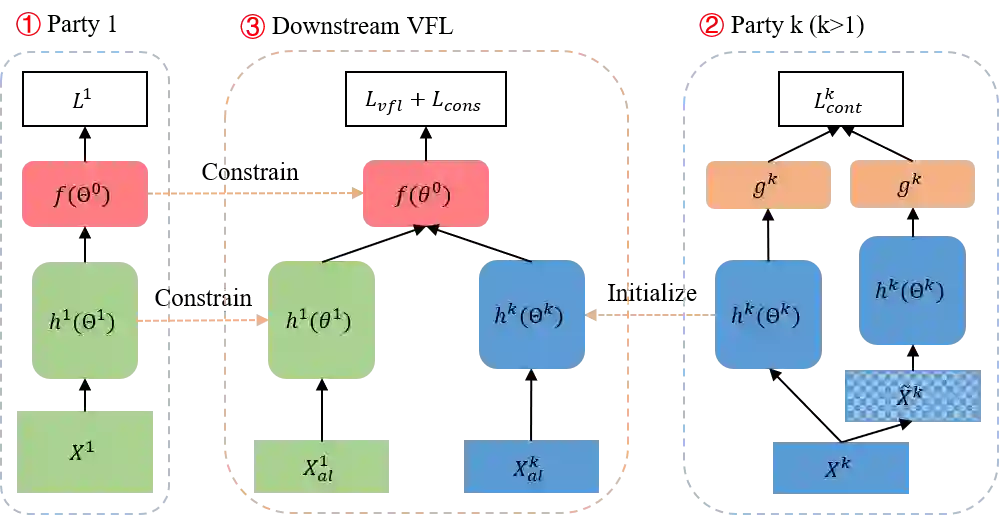

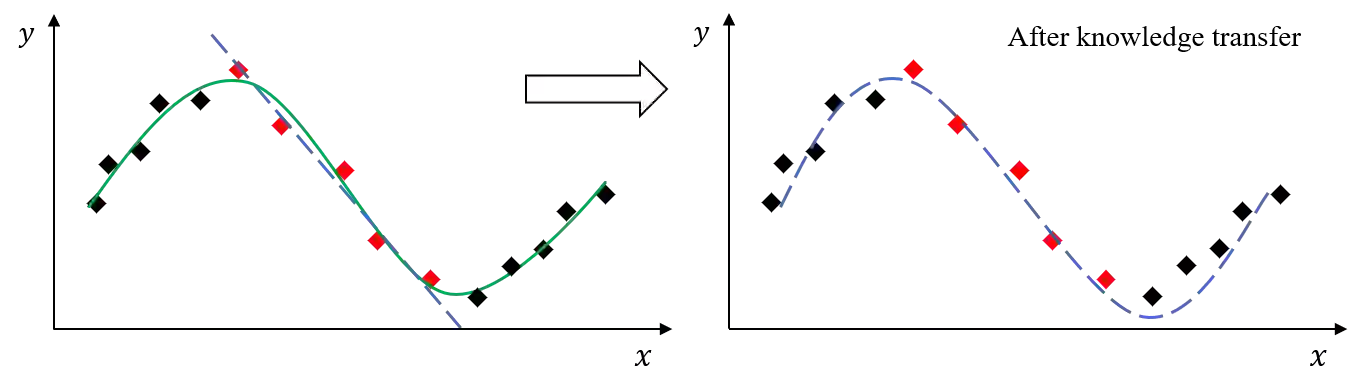

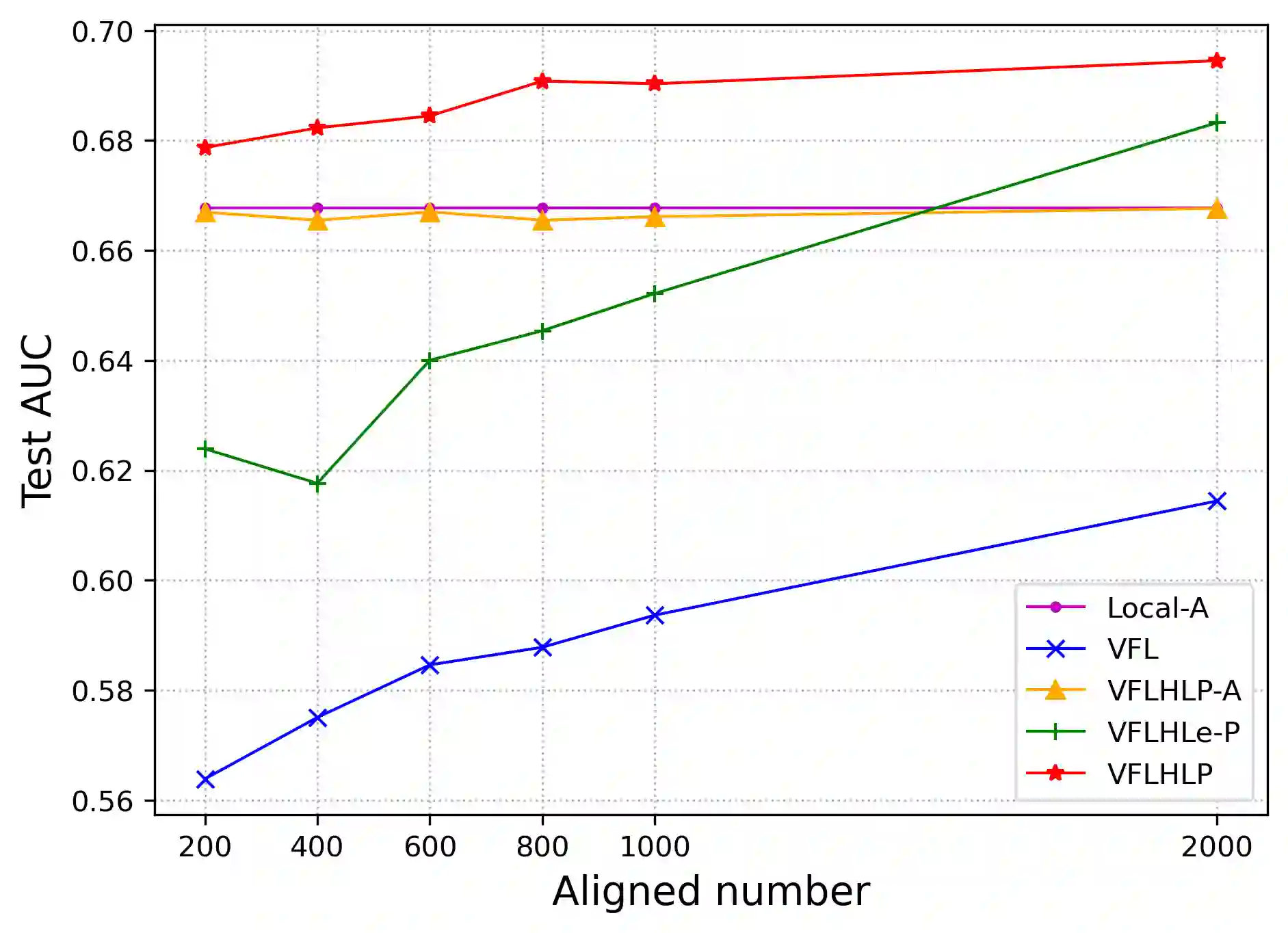

Vertical Federated Learning (VFL), which has a broad range of real-world applications, has received much attention in both academia and industry. Enterprises aspire to exploit more valuable features of the same users from diverse departments to improve their models' predictive performance. VFL addresses this demand while protecting individual parties from exposing their raw data. However, conventional VFL encounters a bottleneck: it leverages only aligned samples, whose number shrinks as more parties are involved, resulting in data scarcity and wasted unaligned data. To address this problem, we propose a novel VFL Hybrid Local Pre-training (VFLHLP) approach. VFLHLP first pre-trains local networks on the local data of participating parties. It then uses these pre-trained networks to adjust the sub-model for the labeled party or to enhance representation learning for the other parties during downstream federated learning on aligned data, boosting the performance of federated models. Experimental results on real-world advertising datasets demonstrate that our approach outperforms baseline methods by large margins. The ablation study further illustrates the contribution of each technique in VFLHLP to its overall performance.
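The bottleneck described above, where only samples shared by every party can be used, can be illustrated with a minimal sketch. It assumes each party identifies users by a plain ID and that alignment is a set intersection; the party names, the IDs, and the helper `aligned_ids` are hypothetical and not taken from the paper, and real VFL systems typically perform this step with a privacy-preserving protocol rather than by comparing raw IDs.

```python
from functools import reduce


def aligned_ids(parties):
    """Return the user IDs that appear in every party's local dataset."""
    return reduce(set.intersection, (set(p) for p in parties))


# Hypothetical user IDs held by three parties (illustrative only).
party_a = {1, 2, 3, 4, 5, 6, 7, 8}        # assumed to be the labeled party
party_b = {3, 4, 5, 6, 7, 8, 9, 10}
party_c = {5, 6, 7, 8, 9, 10, 11, 12}

# Aligned samples with two parties versus three parties.
two_party = aligned_ids([party_a, party_b])
three_party = aligned_ids([party_a, party_b, party_c])

# Samples that party A holds but that conventional VFL cannot use.
unaligned_a = party_a - three_party

print(len(two_party), len(three_party), len(unaligned_a))
```

In this toy setup the aligned set can only stay the same size or shrink when a party is added, and the samples left outside it are the unaligned local data that a local pre-training stage such as the one in VFLHLP is meant to put to use.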
