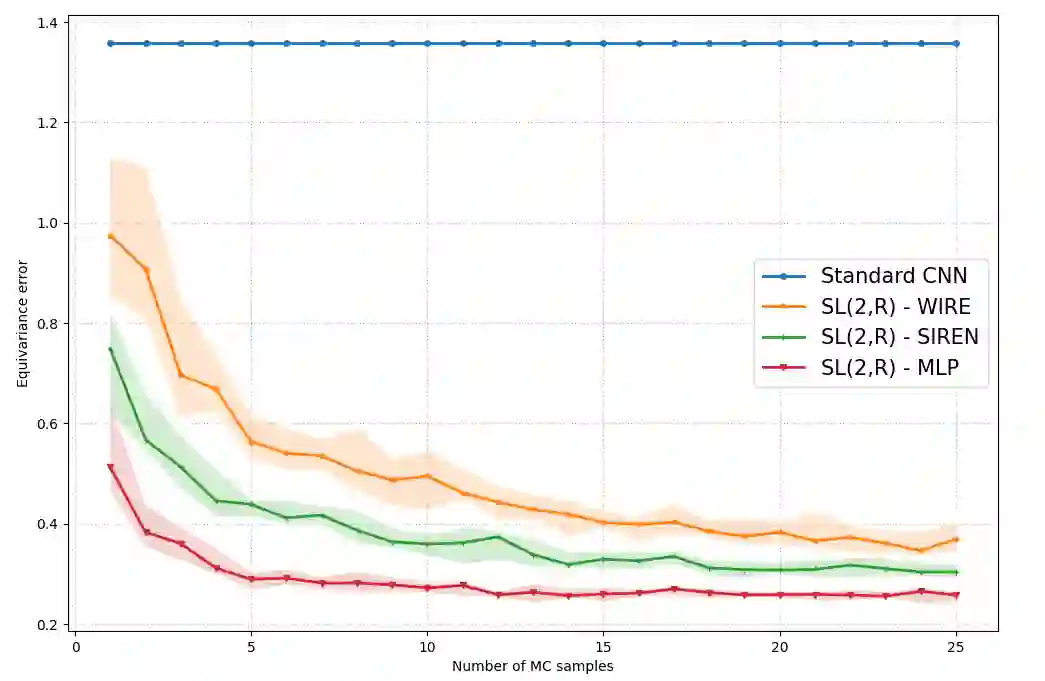

Invariance and equivariance to geometric transformations have proven to be very useful inductive biases when training (convolutional) neural network models, especially in the low-data regime. Much work has focused on the case where the symmetry group employed is compact or abelian, or both. Recent work has explored enlarging the class of transformations to Lie groups, principally through the use of their Lie algebra together with the group exponential and logarithm maps. The applicability of such methods is limited by the fact that, depending on the group of interest $G$, the exponential map may not be surjective. Further limitations are encountered when $G$ is neither compact nor abelian. Using the structure and geometry of Lie groups and their homogeneous spaces, we present a framework that makes it possible to work with such groups, focusing primarily on the groups $G = \text{GL}^{+}(n, \mathbb{R})$ and $G = \text{SL}(n, \mathbb{R})$, as well as their representation as affine transformations $\mathbb{R}^{n} \rtimes G$. Invariant integration and a global parametrization are realized by a decomposition into subgroups and submanifolds that can be handled individually. Under this framework, we show how convolution kernels can be parametrized to build models equivariant with respect to affine transformations. We evaluate the robustness and out-of-distribution generalisation capability of our model on the benchmark affine-invariant classification task, outperforming previous proposals.
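To make the surjectivity issue concrete, the following is a minimal sketch for the smallest case, $n = 2$. It relies on a standard fact: a matrix in $\text{SL}(2, \mathbb{R})$ is the exponential of a real traceless matrix only if its trace exceeds $-2$ or it equals $-I$. The sketch then factors the same matrix with a polar decomposition $A = RP$ (rotation times symmetric positive-definite factor). The abstract does not say which decomposition is used, so the polar decomposition is an assumption chosen for illustration, and the helper names `has_real_log_in_sl2` and `polar_2x2` are hypothetical.

```python
import math


def matmul_2x2(X, Y):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def has_real_log_in_sl2(A):
    """True if A in SL(2, R) equals exp(X) for some real traceless X.

    Uses the trace criterion: the image of exp on sl(2, R) consists of
    the matrices with trace > -2, together with -I.
    Assumes det(A) == 1; the -I check uses exact float comparison.
    """
    (a, b), (c, d) = A
    is_minus_identity = (a == -1.0 and d == -1.0 and b == 0.0 and c == 0.0)
    return (a + d) > -2.0 or is_minus_identity


def polar_2x2(A):
    """Polar decomposition A = R P of a real 2x2 matrix with det(A) > 0.

    R is a rotation by angle theta and P = R^T A is symmetric positive
    definite. The closed form for theta is specific to the 2x2 case.
    """
    (a, b), (c, d) = A
    if a * d - b * c <= 0:
        raise ValueError("expected det(A) > 0")
    theta = math.atan2(c - b, a + d)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    R = [[cos_t, -sin_t], [sin_t, cos_t]]
    R_transpose = [[cos_t, sin_t], [-sin_t, cos_t]]
    P = matmul_2x2(R_transpose, A)
    return theta, R, P


# A has determinant 1 and trace -2.5, so it has no logarithm in sl(2, R).
A = [[-2.0, 0.0], [0.0, -0.5]]
theta, R, P = polar_2x2(A)
print("in image of exp:", has_real_log_in_sl2(A))
print("rotation angle:", theta)
print("symmetric factor:", P)
```

In this example the rotation factor is a rotation by $\pi$ and the symmetric factor is approximately $\text{diag}(2, 0.5)$. Each factor can be reached from its own parameter space (an angle for the rotation, a symmetric matrix logarithm for the positive-definite part), even though the product itself cannot be written as a single matrix exponential.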
