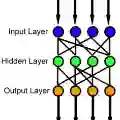

We introduce the Input-Connected Multilayer Perceptron (IC-MLP), a feedforward neural network architecture in which each hidden neuron receives, in addition to the outputs of the preceding layer, a direct affine connection from the raw input. We first study this architecture in the univariate setting and give an explicit and systematic description of IC-MLPs with an arbitrary finite number of hidden layers, including iterated formulas for the network functions. In this setting, we prove a universal approximation theorem showing that deep IC-MLPs can approximate any continuous function on a closed interval of the real line if and only if the activation function is nonlinear. We then extend the analysis to vector-valued inputs and establish a corresponding universal approximation theorem for continuous functions on compact subsets of $\mathbb{R}^n$.
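The architecture described above can be illustrated with a minimal NumPy sketch of a forward pass. It is not the paper's own formulation: the parameter names `W`, `A`, `b`, `c`, `d`, the helper names `init_ic_mlp` and `ic_mlp_forward`, the scalar affine readout, and the `tanh` activation are all assumptions made for illustration. Each hidden layer combines the previous layer's outputs with a direct affine term in the raw input `x`.

```python
import numpy as np


def init_ic_mlp(n_in, widths, rng):
    """Random parameters for a hypothetical IC-MLP with the given hidden widths."""
    layers = []
    prev = 0  # the first hidden layer has no preceding hidden layer
    for width in widths:
        layers.append({
            "W": rng.standard_normal((width, prev)),  # weights on previous layer's outputs
            "A": rng.standard_normal((width, n_in)),  # direct weights on the raw input
            "b": rng.standard_normal(width),          # bias
        })
        prev = width
    # Assumption: a scalar affine readout of the last hidden layer.
    readout = {"c": rng.standard_normal(prev), "d": 0.0}
    return layers, readout


def ic_mlp_forward(x, layers, readout, sigma=np.tanh):
    """Evaluate the network at a scalar or vector input x."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    h = np.zeros(0)  # empty "previous layer" for the first hidden layer
    for layer in layers:
        # Every hidden neuron sees the previous layer AND the raw input.
        h = sigma(layer["W"] @ h + layer["A"] @ x + layer["b"])
    return float(readout["c"] @ h + readout["d"])


rng = np.random.default_rng(0)
layers, readout = init_ic_mlp(n_in=1, widths=[4, 3], rng=rng)
y = ic_mlp_forward(0.3, layers, readout)
```

With a linear activation such as `sigma=lambda t: t`, every layer in this sketch is an affine function of `x`, so the whole network collapses to an affine map. This is consistent with the "only if" direction of the theorem: a linear activation cannot yield universal approximation.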
